Everyone wants AI agents that plan, reason, and magically solve problems. Then they plug in a random model and wonder why the agent forgets context, hallucinates, or burns money like it’s a hobby.

The uncomfortable truth: your agent is only as good as the LLM you choose and how you use it.


Choosing an LLM for agents is not about picking the “most powerful” model. It is about balancing:

- Reasoning ability

- Cost

- Latency

- Tool usage

- Reliability

This guide walks through how to actually choose an LLM for agent systems without sabotaging your own architecture.

What Does an LLM Do Inside an Agent?

An LLM acts as the cognitive engine of an AI agent.

It is responsible for:

- Understanding inputs

- Generating plans

- Reasoning through tasks

- Deciding actions

- Interacting with tools

In short: the LLM is the brain. Everything else is support infrastructure.

Why Choosing the Right LLM Matters

Pick the wrong model and you get:

- Inconsistent outputs

- Poor reasoning

- High costs

- Slow responses

- Broken workflows

Pick the right model and you get:

- Reliable decision-making

- Efficient execution

- Scalable systems

This choice directly impacts product quality and profitability.

Key Factors When Choosing an LLM for Agents

1. Reasoning Capability

Agents need models that can think, not just generate text.

Look for:

- Multi-step reasoning

- Logical consistency

- Ability to follow instructions

Why it matters:

Planning, tool usage, and task decomposition all depend on reasoning.

2. Context Window Size

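The context window determines how much text, measured in tokens, the model can process in a single request. That budget covers the prompt, the conversation history, any tool results, and the generated output.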

Larger context allows:

- Long conversations

- Complex workflows

- More conversation history and retrieved documents kept in the prompt

Trade-off:

Filling a larger context usually means higher cost and slower responses, because every token you send is processed and billed.
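One common way to stay within a context window is to keep only the most recent messages that fit a token budget. The sketch below assumes a rough heuristic of about 4 characters per token, which real tokenizers will not match exactly; `estimate_tokens` and `trim_history` are hypothetical helper names, not part of any library.

```python
def estimate_tokens(text: str) -> int:
    """Rough estimate: about 4 characters per token (an assumption, not a real tokenizer)."""
    return max(1, len(text) // 4)


def trim_history(messages: list[str], budget: int) -> list[str]:
    """Keep the most recent messages whose estimated token total fits the budget."""
    kept: list[str] = []
    used = 0
    for message in reversed(messages):  # walk from newest to oldest
        cost = estimate_tokens(message)
        if used + cost > budget:
            break  # the next-oldest message would overflow the budget
        kept.append(message)
        used += cost
    return list(reversed(kept))  # restore chronological order


# Three messages of roughly 10 estimated tokens each.
history = ["a" * 40, "b" * 40, "c" * 40]
recent = trim_history(history, budget=25)  # only the two newest fit
```

In practice you would swap the heuristic for the tokenizer that matches your chosen model, since token counts vary between models.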

3. Cost Efficiency

If your agent runs at scale, cost becomes critical.

Consider:

- Cost per token

- Frequency of calls

- Model size

Strategy:

Use smaller models for simple tasks and larger models only when needed.
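To see how cost per token and call frequency combine, a back-of-the-envelope estimate can help. The prices below are placeholders chosen for illustration, not any provider's actual rates, and `monthly_cost` is a hypothetical helper.

```python
def monthly_cost(
    calls_per_day: int,
    input_tokens: int,
    output_tokens: int,
    input_price_per_million: float,
    output_price_per_million: float,
    days: int = 30,
) -> float:
    """Estimate monthly spend from per-call token counts and per-million-token prices."""
    per_call = (
        input_tokens * input_price_per_million
        + output_tokens * output_price_per_million
    ) / 1_000_000
    return per_call * calls_per_day * days


# Same workload, two hypothetical price points (placeholder numbers).
small = monthly_cost(10_000, 1_000, 200,
                     input_price_per_million=0.5, output_price_per_million=1.5)
large = monthly_cost(10_000, 1_000, 200,
                     input_price_per_million=5.0, output_price_per_million=15.0)
print(f"small model: ${small:,.2f}/month, large model: ${large:,.2f}/month")
```

With these made-up prices the larger model costs ten times as much for the same traffic, which is why routing only the hard requests to it can matter at scale.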

4. Latency (Speed)

Slow agents frustrate users and break workflows.

Important for:

- Real-time applications

- Customer support

- Interactive systems
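Latency is easier to reason about once it is measured. The sketch below times repeated calls and reports the median and an approximate 95th percentile; `fake_model_call` is a stand-in that just sleeps, so replace it with your real client call when testing.

```python
import statistics
import time
from typing import Callable


def measure_latency(call: Callable[[], object], runs: int = 20) -> dict[str, float]:
    """Time repeated calls and return the median and an approximate p95, in seconds."""
    samples = []
    for _ in range(runs):
        start = time.perf_counter()
        call()
        samples.append(time.perf_counter() - start)
    samples.sort()
    # Simple index-based approximation of the 95th percentile.
    p95_index = min(len(samples) - 1, int(0.95 * len(samples)))
    return {"median": statistics.median(samples), "p95": samples[p95_index]}


def fake_model_call() -> str:
    """Stand-in for a real model request (assumption: replace with your client)."""
    time.sleep(0.001)
    return "ok"


stats = measure_latency(fake_model_call, runs=10)
```

Tail latency (p95) is often more relevant than the average for interactive systems, because the slowest responses are the ones users notice.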

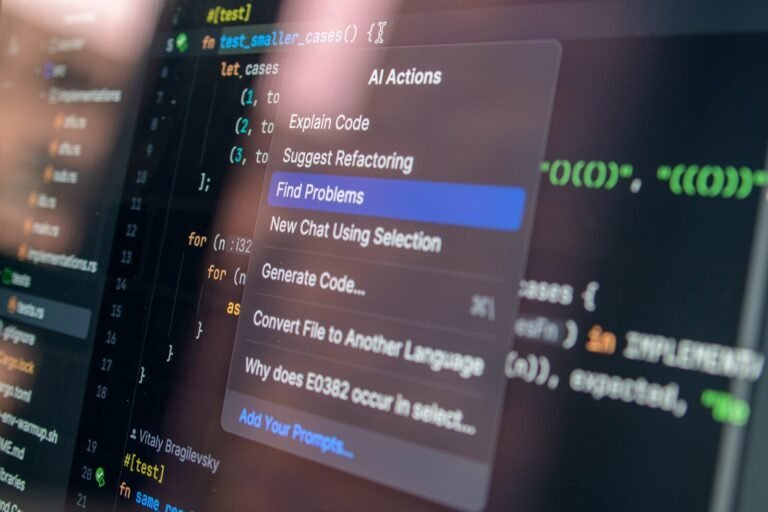

5. Tool Use and Function Calling

Modern agents rely on tools.

Required capabilities:

- API calling

- Structured outputs

- Function execution
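A rough illustration of how structured output feeds function execution: the model emits JSON naming a tool and its arguments, and your code validates and dispatches it. The JSON shape (`tool`, `arguments`) and the `get_weather` stub are assumptions for this sketch, not any vendor's function-calling format.

```python
import json
from typing import Any, Callable


def get_weather(city: str) -> str:
    """Stub tool; a real one would call a weather API."""
    return f"Sunny in {city}"


# Registry of tools the agent is allowed to call.
TOOLS: dict[str, Callable[..., Any]] = {"get_weather": get_weather}


def run_tool_call(raw: str) -> Any:
    """Parse a model's JSON tool request and dispatch it to a registered function."""
    try:
        request = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValueError(f"model output is not valid JSON: {exc}") from exc
    if not isinstance(request, dict):
        raise ValueError("tool request must be a JSON object")
    name = request.get("tool")
    args = request.get("arguments", {})
    if name not in TOOLS:
        raise ValueError(f"unknown tool: {name!r}")
    if not isinstance(args, dict):
        raise ValueError("arguments must be a JSON object")
    return TOOLS[name](**args)


result = run_tool_call('{"tool": "get_weather", "arguments": {"city": "Oslo"}}')
```

Rejecting unknown tools and malformed output explicitly lets the agent retry or fall back instead of failing silently, which is worth checking for when you compare models.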

6. Reliability and Stability

Some models are brilliant but unpredictable.

You need:

- Consistent outputs

- Low hallucination rates

- Deterministic behavior (when required)
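One simple way to put a number on consistency is to send the same prompt several times and measure how often the answers agree. This is a minimal sketch; `agreement_rate` and the scripted `make_scripted_model` stub are hypothetical, and a real check would call your actual model.

```python
from collections import Counter
from typing import Callable, Iterable


def agreement_rate(model: Callable[[str], str], prompt: str, runs: int = 5) -> float:
    """Fraction of runs that return the most common (normalized) answer."""
    answers = [model(prompt).strip().lower() for _ in range(runs)]
    most_common_count = Counter(answers).most_common(1)[0][1]
    return most_common_count / runs


def make_scripted_model(answers: Iterable[str]) -> Callable[[str], str]:
    """Stub model that replays a fixed list of answers, one per call."""
    replay = iter(answers)
    return lambda prompt: next(replay)


flaky = make_scripted_model(["Paris", "paris ", "Lyon", "Paris", "Paris"])
rate = agreement_rate(flaky, "What is the capital of France?", runs=5)
```

Exact string matching after normalization only suits short, factual answers; free-form responses would need a looser comparison.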

7. Fine-Tuning and Customization

Customization allows better performance for specific tasks.

Options:

- Fine-tuning

- Prompt engineering

- Retrieval-augmented generation (RAG)

Types of LLMs for Agents

1. General-Purpose Models

Designed for a wide range of tasks.

Pros:

- Flexible

- Strong reasoning

Cons:

- Higher cost

2. Lightweight Models

Smaller and faster models.

Pros:

- Low cost

- Fast responses

Cons:

- Limited reasoning

3. Domain-Specific Models

Trained for specific industries.

Pros:

- High accuracy in niche tasks

Cons:

- Less flexible

4. Open-Source Models

Models with openly available weights that you can customize and host yourself.

Pros:

- Full control

- No per-token API fees (you pay for hosting and compute instead)

Cons:

- Infrastructure complexity

LLM Selection Framework for Agents

Step 1: Define Use Case

Ask:

- What tasks will the agent perform?

- How complex are they?

Step 2: Evaluate Requirements

- Reasoning depth

- Speed

- Cost constraints

Step 3: Test Multiple Models

Never rely on benchmarks alone.

Test for:

- Accuracy

- Consistency

- Tool usage
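Testing on your own cases can be as simple as a small harness that runs each candidate over the same prompts and scores the answers. In this sketch the two "models" are lookup-table stubs and the test cases are toy arithmetic; both are placeholders for your real clients and real tasks.

```python
from typing import Callable

Model = Callable[[str], str]


def accuracy(model: Model, cases: list[tuple[str, str]]) -> float:
    """Share of cases where the model's answer exactly matches the expected one."""
    correct = sum(1 for prompt, expected in cases if model(prompt).strip() == expected)
    return correct / len(cases)


def compare(models: dict[str, Model], cases: list[tuple[str, str]]) -> dict[str, float]:
    """Score every candidate model on the same test cases."""
    return {name: accuracy(model, cases) for name, model in models.items()}


cases = [("2+2", "4"), ("3*3", "9"), ("10-7", "3"), ("8/2", "4")]

# Stub models backed by lookup tables (assumption: replace with real API calls).
answers_a = {"2+2": "4", "3*3": "9", "10-7": "3", "8/2": "4"}
answers_b = {"2+2": "4", "3*3": "6", "10-7": "3", "8/2": "2"}

scores = compare(
    {"model_a": lambda p: answers_a.get(p, ""), "model_b": lambda p: answers_b.get(p, "")},
    cases,
)
```

The same structure extends to consistency and tool-usage checks by swapping the scoring function.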

Step 4: Optimize Architecture

Use hybrid approaches:

- Small model for simple tasks

- Large model for complex reasoning
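A hybrid setup needs some rule for deciding which model handles a request. The router below uses deliberately crude heuristics (prompt length and a few keywords) purely for illustration; the model names and thresholds are assumptions, and production routers often use a classifier or the small model itself to make this call.

```python
def choose_model(task: str, needs_tools: bool = False) -> str:
    """Route a task to a small or large model using simple, illustrative heuristics."""
    complex_markers = ("plan", "analyze", "debug", "multi-step")
    text = task.lower()
    looks_complex = len(task.split()) > 50 or any(m in text for m in complex_markers)
    # Hypothetical model names; substitute whatever models you actually deploy.
    return "large-model" if (looks_complex or needs_tools) else "small-model"


simple_choice = choose_model("Translate 'hello' to French")
complex_choice = choose_model("Plan a database migration and list the risks")
```

Whatever rule you use, log the routing decisions so you can check whether the small model is being given tasks it fails.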

Step 5: Monitor and Iterate

Continuously evaluate performance and costs.
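Monitoring can start with a small in-process tracker before you adopt a full observability stack. This sketch records calls, tokens, and failures; `UsageTracker` is a hypothetical class and the price is a placeholder.

```python
from dataclasses import dataclass


@dataclass
class UsageTracker:
    """Accumulates call counts, token usage, and failures for one model."""

    price_per_million_tokens: float  # placeholder price, not a real rate
    calls: int = 0
    tokens: int = 0
    failures: int = 0

    def record(self, tokens: int, ok: bool = True) -> None:
        """Log one model call and whether it succeeded."""
        self.calls += 1
        self.tokens += tokens
        if not ok:
            self.failures += 1

    @property
    def cost(self) -> float:
        return self.tokens * self.price_per_million_tokens / 1_000_000

    @property
    def failure_rate(self) -> float:
        return self.failures / self.calls if self.calls else 0.0


tracker = UsageTracker(price_per_million_tokens=2.0)
tracker.record(tokens=1_500)
tracker.record(tokens=500, ok=False)
print(f"cost=${tracker.cost:.4f}, failure rate={tracker.failure_rate:.0%}")
```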

LLMs in Agent Architectures

1. Single-LLM Agents

- One model handles everything

- Simple but limited

2. Multi-LLM Systems

- Different models for different roles

- More efficient and scalable

3. Hierarchical Models

- One model plans

- Others execute
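The hierarchical pattern can be sketched as a planner that splits a goal into steps and an executor that handles each step. Both roles are plain functions here so the example runs without any API; in a real system each would wrap a model call, typically a stronger model for planning and cheaper ones for execution.

```python
from typing import Callable

Planner = Callable[[str], list[str]]
Executor = Callable[[str], str]


def run_hierarchical(goal: str, planner: Planner, executor: Executor) -> list[str]:
    """Ask the planner for steps, then run each step through the executor in order."""
    steps = planner(goal)
    return [executor(step) for step in steps]


def stub_planner(goal: str) -> list[str]:
    """Stand-in for a planning model (assumption: returns a fixed two-step plan)."""
    return [f"research {goal}", f"summarize {goal}"]


def stub_executor(step: str) -> str:
    """Stand-in for an execution model."""
    return f"done: {step}"


results = run_hierarchical("pricing", stub_planner, stub_executor)
```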

LLM + Tools = Real Agents

LLMs alone are not enough.

Agents need:

- APIs

- Databases

- External tools

The LLM orchestrates these components.
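Orchestration usually takes the form of a loop: the model looks at what it has observed so far, decides whether to call a tool or finish, and repeats. The sketch below assumes a hypothetical decision format (`action`, `tool`, `input`, `answer`) and uses a scripted `stub_decide` function in place of a real model.

```python
from typing import Callable

Tool = Callable[[str], str]


def run_agent(
    decide: Callable[[list[str]], dict],
    tools: dict[str, Tool],
    max_steps: int = 5,
) -> str:
    """Loop: ask the decision function what to do, run tools, stop on 'finish'."""
    observations: list[str] = []
    for _ in range(max_steps):
        decision = decide(observations)
        if decision["action"] == "finish":
            return decision["answer"]
        tool = tools[decision["tool"]]
        observations.append(tool(decision["input"]))
    # The step cap prevents a confused model from looping (and spending) forever.
    raise RuntimeError("agent did not finish within max_steps")


def stub_decide(observations: list[str]) -> dict:
    """Scripted stand-in for the LLM: look something up once, then answer."""
    if not observations:
        return {"action": "call", "tool": "lookup", "input": "order 42"}
    return {"action": "finish", "answer": f"Status: {observations[-1]}"}


tools = {"lookup": lambda query: "shipped"}
answer = run_agent(stub_decide, tools)
```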

Common Mistakes When Choosing LLMs

1. Choosing the Most Powerful Model

More power ≠ better system.

2. Ignoring Cost

Scaling costs can destroy profitability.

3. Overlooking Latency

Slow agents reduce usability.

4. No Testing

Public benchmarks rarely match your real-world workload, so test candidate models on your own tasks.

Real-World Use Cases

1. Customer Support Agents

Need fast, reliable responses.

2. Research Agents

Require deep reasoning and large context.

3. Coding Agents

Need accuracy and logical consistency.

4. Automation Agents

Require strong tool integration.

Future of LLMs for Agents

- Better reasoning models

- Lower costs

- Faster inference

- Improved reliability

Agents will become more autonomous and capable.

Best Practices

- Use hybrid model strategies

- Optimize prompts

- Implement memory systems

- Monitor performance

- Balance cost and quality

Conclusion

Choosing the right LLM for agents is one of the most important decisions in AI system design.

It determines how well your agent thinks, acts, and scales.

A thoughtful selection process tends to yield better performance, lower costs, and more reliable systems.

FAQs

What is the best LLM for AI agents?

There is no single best model. The choice depends on your use case, cost constraints, and performance requirements.

Should I use multiple LLMs in an agent?

Often, yes. Multi-LLM systems can improve efficiency and scalability when tasks vary in difficulty, at the price of extra routing and maintenance complexity.

How do I reduce LLM costs?

Use smaller models for simple tasks, keep prompts and context short, and avoid unnecessary calls.

Can open-source models be used for agents?

Yes, but they require more infrastructure and maintenance.

Why do LLMs hallucinate?

LLMs generate text by predicting likely next tokens rather than looking up verified facts, so gaps or errors in the training data can surface as fluent but incorrect output.