A comprehensive guide to the infrastructure stack powering AI agents, including orchestration layers, vector databases, inference systems, and scalable backend architectures.

AI agents are no longer simple chatbot layers sitting on top of language models. They are becoming full-scale distributed systems that require carefully designed infrastructure to operate reliably, efficiently, and at scale.

Behind every production AI agent is a complex stack that includes:

- Model APIs

- Retrieval systems

- Orchestration frameworks

- Backend services

- Monitoring and observability tools

- Memory systems

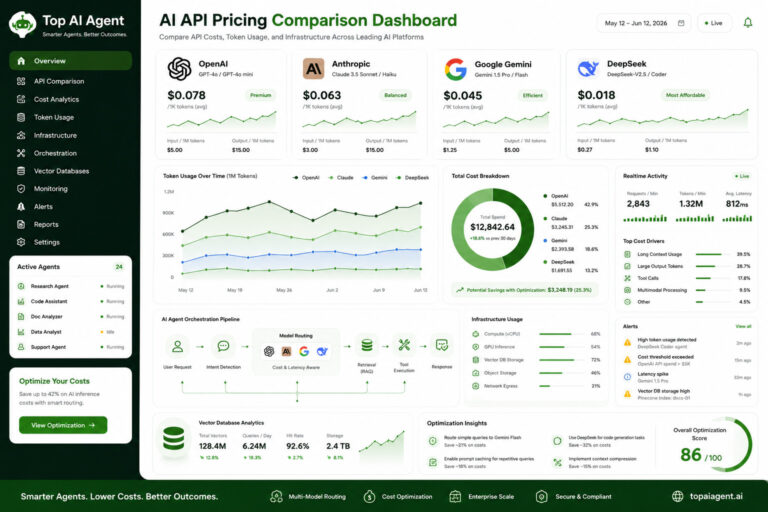

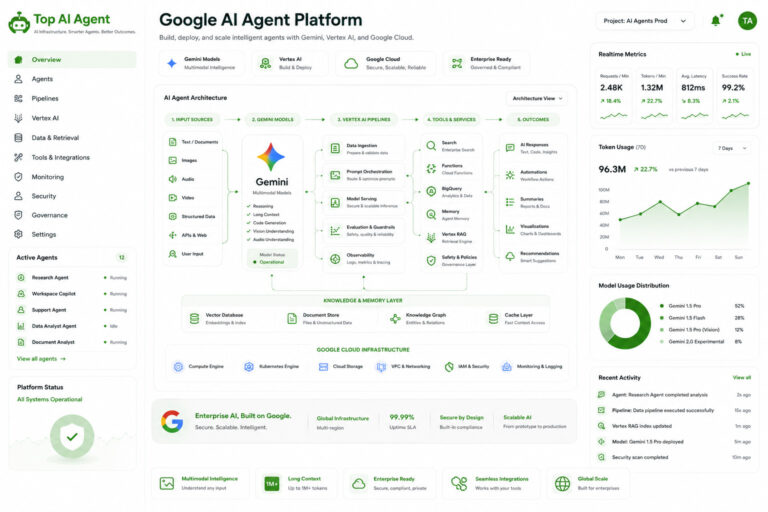

Companies building AI agents on platforms from OpenAI, Anthropic, Google, and DeepSeek increasingly treat AI as an infrastructure problem, not just a model problem.

This guide breaks down the core components of AI infrastructure for agents, how they fit together, and what developers need to build production-grade systems.

What Is AI Infrastructure for Agents?

AI infrastructure refers to the systems and technologies required to build, deploy, and operate AI agents in real-world environments.

Unlike standalone AI applications, agent systems must:

- Maintain memory

- Execute multi-step workflows

- Interact with external tools

- Handle concurrency

- Scale across users

- Remain observable and reliable

Core Idea

AI agents are not just model calls. They are distributed systems with reasoning layers.

Core Components of AI Agent Infrastructure

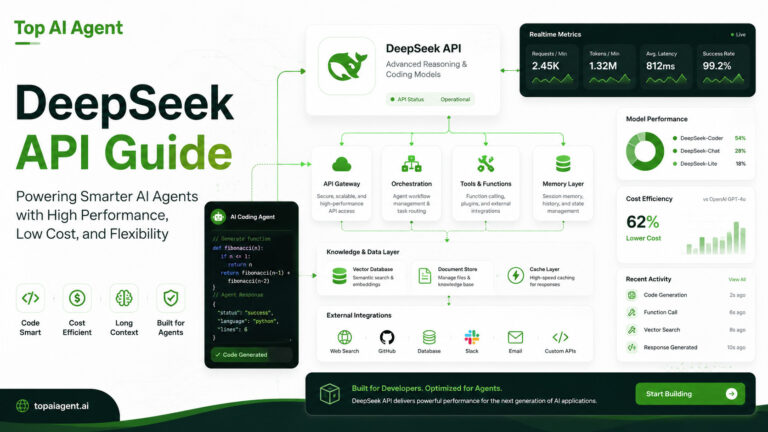

1. Model Layer (LLM APIs)

The model layer provides:

- reasoning

- language understanding

- decision-making

Common providers include:

- OpenAI

- Anthropic

- DeepSeek

2. Orchestration Layer

This is the “brain” that coordinates agent behavior.

Responsibilities

- Task planning

- Tool selection

- Workflow execution

- Multi-step reasoning

- State tracking

Examples of Tasks

| Task | Description |
|---|---|
| Plan | Break user request into steps |
| Execute | Call APIs or tools |
| Evaluate | Analyze results |
| Iterate | Continue workflow |

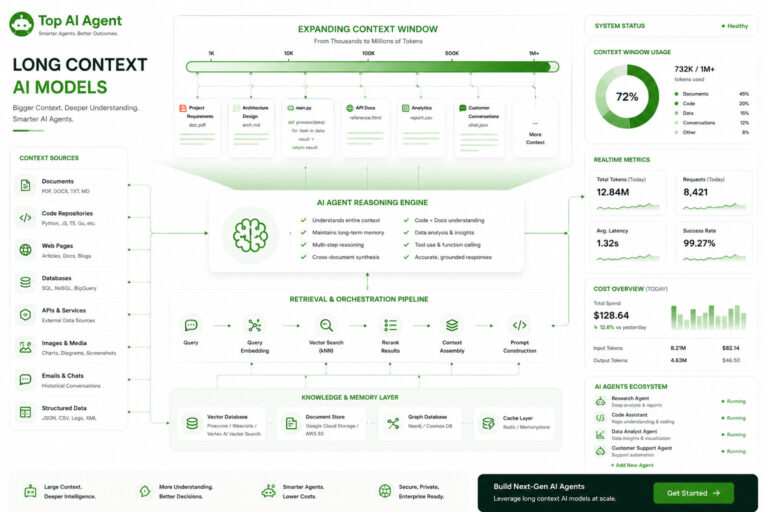
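
The plan, execute, evaluate, iterate cycle in the table can be sketched as a small loop. This is a minimal illustration, not a real framework: `AgentState`, `run_agent`, and the `plan`, `execute`, and `is_done` callables are hypothetical names, and in a real system the callables would wrap model and tool calls.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class AgentState:
    """Progress tracked across the plan / execute / evaluate / iterate cycle."""
    goal: str
    pending: List[str] = field(default_factory=list)   # steps not yet run
    results: List[str] = field(default_factory=list)   # outputs collected so far


def run_agent(
    goal: str,
    plan: Callable[[str], List[str]],
    execute: Callable[[str], str],
    is_done: Callable[[AgentState], bool],
    max_steps: int = 10,
) -> AgentState:
    state = AgentState(goal=goal, pending=plan(goal))   # Plan
    for _ in range(max_steps):                          # Iterate, with an upper bound
        if not state.pending:
            break
        step = state.pending.pop(0)
        state.results.append(execute(step))             # Execute
        if is_done(state):                              # Evaluate
            break
    return state


# Usage with stub callables standing in for model and tool calls.
state = run_agent(
    goal="refund status",
    plan=lambda goal: [f"look up {goal}", "draft reply"],
    execute=lambda step: f"done: {step}",
    is_done=lambda s: len(s.results) >= 2,
)
print(state.results)
```

The `max_steps` bound is a deliberate choice: it keeps a misbehaving planner from looping indefinitely.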

3. Vector Databases (Memory Layer)

Vector databases enable:

- semantic search

- retrieval-augmented generation (RAG)

- memory storage

What They Store

- embeddings

- documents

- conversation history

- structured knowledge

Why They Matter

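Without retrieval systems, AI agents:

- lose context once a conversation or document set exceeds the model's context window

- hallucinate more, because answers are not grounded in stored data

- become inefficient, because the same material must be resent with every prompt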
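
To make semantic search concrete, here is a toy in-memory store that ranks stored texts by cosine similarity to a query vector. It is a sketch under stated assumptions: `InMemoryVectorStore` is a hypothetical class, the three-dimensional vectors are hand-written stand-ins for real embeddings, and production vector databases use approximate nearest-neighbor indexes rather than a full scan.

```python
import math
from typing import List, Tuple


def cosine_similarity(a: List[float], b: List[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


class InMemoryVectorStore:
    """Hypothetical toy store: keeps (embedding, text) pairs in a list."""

    def __init__(self) -> None:
        self._items: List[Tuple[List[float], str]] = []

    def add(self, embedding: List[float], text: str) -> None:
        self._items.append((embedding, text))

    def search(self, query: List[float], k: int = 2) -> List[Tuple[float, str]]:
        # Score every stored item, then return the k most similar.
        scored = [(cosine_similarity(query, emb), text) for emb, text in self._items]
        scored.sort(key=lambda pair: pair[0], reverse=True)
        return scored[:k]


store = InMemoryVectorStore()
store.add([1.0, 0.0, 0.0], "billing policy")
store.add([0.0, 1.0, 0.0], "API rate limits")
store.add([0.9, 0.1, 0.0], "refund rules")
top = store.search([1.0, 0.0, 0.0], k=2)
print([text for _, text in top])
```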

4. Backend Systems

AI agents require traditional backend infrastructure.

Components

- APIs

- databases

- authentication

- user sessions

- task queues

Responsibilities

| Function | Purpose |
|---|---|
| State management | Track agent progress |
| Session handling | Maintain conversations |
| Task scheduling | Coordinate workflows |
| Error handling | Recover from failures |
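
As one example of the error-handling row, a backend can wrap flaky calls (model APIs, tools) in a retry with exponential backoff. The sketch below is illustrative only: `call_with_retry` and its parameters are hypothetical, and a real service would usually retry only specific exception types and add jitter.

```python
import time
from typing import Callable, TypeVar

T = TypeVar("T")


def call_with_retry(
    fn: Callable[[], T],
    max_attempts: int = 3,
    base_delay: float = 0.5,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Call fn, retrying on any exception with delays of base_delay * 2**n."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(1, max_attempts + 1):
        try:
            return fn()
        except Exception:
            if attempt == max_attempts:
                raise                                   # out of attempts: surface the error
            sleep(base_delay * 2 ** (attempt - 1))      # 0.5s, 1s, 2s, ...
    raise AssertionError("unreachable")
```

Passing `sleep` as a parameter keeps the function easy to test without real delays.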

5. Tool Execution Layer

Agents must interact with external systems.

Examples

- Web APIs

- databases

- CRMs

- email systems

- internal tools

Function

The agent:

- decides what tool to use

- generates structured calls

- executes actions

- processes results
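
The four steps above can be sketched as a tool registry plus a dispatcher. Everything here is an assumption made for illustration: the `{"name": ..., "arguments": {...}}` JSON shape, the `tool` decorator, and the stub `get_weather` function are hypothetical, and each model provider defines its own tool-call format.

```python
import json
from typing import Any, Callable, Dict

TOOLS: Dict[str, Callable[..., Any]] = {}


def tool(fn: Callable[..., Any]) -> Callable[..., Any]:
    """Register a function so the agent can call it by name."""
    TOOLS[fn.__name__] = fn
    return fn


@tool
def get_weather(city: str) -> str:
    return f"Sunny in {city}"   # stub; a real tool would call an external API


def execute_tool_call(raw_call: str) -> Dict[str, Any]:
    """Parse a structured call emitted by the model, run it, and wrap the result."""
    try:
        call = json.loads(raw_call)
        fn = TOOLS[call["name"]]
        return {"ok": True, "result": fn(**call.get("arguments", {}))}
    except (json.JSONDecodeError, KeyError, TypeError) as exc:
        # Return the failure as data so the agent can process it and recover.
        return {"ok": False, "error": f"{type(exc).__name__}: {exc}"}


print(execute_tool_call('{"name": "get_weather", "arguments": {"city": "Oslo"}}'))
```

Returning errors as structured results, rather than raising, lets the orchestration layer feed the failure back to the model for another attempt.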

6. Monitoring & Observability

Production AI systems require visibility.

What to Monitor

- latency

- token usage

- error rates

- hallucination frequency

- workflow success rates
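
A minimal sketch of collecting some of these signals (latency, token usage, error rate) in process. `AgentMetrics` is a hypothetical class; a production system would typically export such numbers to a metrics backend, and hallucination frequency needs separate evaluation that this sketch does not attempt.

```python
import time
from collections import defaultdict
from contextlib import contextmanager


class AgentMetrics:
    """Hypothetical in-process collector for latency, tokens, and errors."""

    def __init__(self) -> None:
        self.latencies_s = []                 # one entry per tracked call
        self.counters = defaultdict(int)      # call, error, and token counts

    @contextmanager
    def track(self, name: str):
        start = time.perf_counter()
        self.counters[f"{name}.calls"] += 1
        try:
            yield
        except Exception:
            self.counters[f"{name}.errors"] += 1
            raise
        finally:
            self.latencies_s.append(time.perf_counter() - start)

    def add_tokens(self, count: int) -> None:
        self.counters["tokens"] += count

    def error_rate(self, name: str) -> float:
        calls = self.counters[f"{name}.calls"]
        return self.counters[f"{name}.errors"] / calls if calls else 0.0


metrics = AgentMetrics()
with metrics.track("model_call"):
    metrics.add_tokens(120)   # token count would come from the API response
```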

Why It Matters

Agent behavior is non-deterministic, so without monitoring, failures and regressions go unnoticed until users report them.

7. Inference Infrastructure

This layer handles model execution.

Includes

- GPU servers

- inference APIs

- batching systems

- caching layers

Key Considerations

- latency

- cost

- throughput

- scaling
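
To illustrate how a batching system trades a little latency for throughput, the sketch below groups prompts into fixed-size batches before handing them to an inference function. `batched`, `run_batched`, and `infer_batch` are hypothetical names; real inference servers usually batch dynamically across concurrent requests, which this static version does not model.

```python
from typing import Callable, Iterable, Iterator, List


def batched(prompts: Iterable[str], batch_size: int) -> Iterator[List[str]]:
    """Yield lists of at most batch_size prompts, preserving order."""
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    batch: List[str] = []
    for prompt in prompts:
        batch.append(prompt)
        if len(batch) == batch_size:
            yield batch
            batch = []
    if batch:                      # final partial batch
        yield batch


def run_batched(
    prompts: Iterable[str],
    infer_batch: Callable[[List[str]], List[str]],
    batch_size: int = 4,
) -> List[str]:
    """Run one inference call per batch instead of one per prompt."""
    outputs: List[str] = []
    for batch in batched(prompts, batch_size):
        outputs.extend(infer_batch(batch))
    return outputs
```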

Typical AI Agent Architecture

End-to-End Flow

| Step | Layer |
|---|---|
| User request | Frontend |
| Task planning | Orchestration |
| Retrieval | Vector database |
| Reasoning | Model API |
| Tool execution | External APIs |
| Response | Backend + UI |

Simplified Architecture Stack

| Layer | Role |
|---|---|
| Frontend | User interaction |
| Backend | State & logic |
| Orchestration | Workflow control |
| LLM | Reasoning |
| Retrieval | Memory |
| Tools | Actions |
| Monitoring | Observability |

Cloud vs Local AI Infrastructure

Cloud-Based Systems

Advantages

- Easy deployment

- scalable infrastructure

- access to frontier models

Challenges

- API costs

- vendor lock-in

- data privacy concerns

Self-Hosted Infrastructure

Advantages

- control over data

- potentially lower long-term cost at sustained high volume

- customization

Challenges

- GPU management

- scaling complexity

- maintenance overhead

Hybrid Approach

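Many organizations combine cloud and local systems, for example using cloud APIs for frontier models while keeping sensitive data on self-hosted infrastructure.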

Scaling AI Agent Infrastructure

Scaling introduces new challenges.

Key Scaling Problems

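| Problem | Impact |
|---|---|
| Concurrency | Many agents run at once and contend for shared resources |
| Latency | Multi-step workflows get slower as per-call delays add up |
| Cost | API usage grows with users and workflow steps |
| Reliability | More components mean more failures to handle |
| State management | Long, complex workflows need consistent state |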

Solutions

- distributed systems

- task queues

- load balancing

- model routing

- caching
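
Model routing, one of the solutions listed above, can be sketched as choosing the cheapest tier that can handle a request. The tier names, character limits, relative costs, and the `needs_tools` rule are all invented for illustration; real routing policies depend on the models and workloads involved.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class ModelTier:
    name: str               # hypothetical model identifier
    max_input_chars: int    # illustrative input limit
    relative_cost: float    # illustrative cost per call


# Ordered from cheapest to most capable.
TIERS: List[ModelTier] = [
    ModelTier("small-model", max_input_chars=500, relative_cost=1.0),
    ModelTier("large-model", max_input_chars=20_000, relative_cost=10.0),
]


def route(prompt: str, needs_tools: bool = False, tiers: List[ModelTier] = TIERS) -> ModelTier:
    """Return the cheapest tier that fits the prompt; tool use goes to the top tier."""
    candidates = tiers[-1:] if needs_tools else tiers
    for tier in candidates:
        if len(prompt) <= tier.max_input_chars:
            return tier
    raise ValueError("prompt exceeds every candidate tier's input limit")


print(route("Summarize this sentence.").name)
```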

AI Infrastructure for Multi-Agent Systems

Multi-agent systems involve:

- multiple agents collaborating

- shared memory

- task delegation

Infrastructure Needs

- coordination layer

- shared memory systems

- communication protocols

- conflict resolution

Retrieval Infrastructure (RAG)

Retrieval systems are critical.

Workflow

- User query

- Vector search

- Retrieve relevant data

- Inject into model

- Generate response
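
The workflow above can be sketched end to end with the search and generation steps passed in as callables. `build_rag_prompt`, `answer`, the prompt wording, and the character budget are assumptions made for this example; `search` stands in for a vector database query and `generate` for a model API call.

```python
from typing import Callable, List


def build_rag_prompt(question: str, passages: List[str], max_context_chars: int = 2000) -> str:
    """Inject retrieved passages into the prompt, stopping at a size budget."""
    lines: List[str] = []
    used = 0
    for i, passage in enumerate(passages, start=1):
        line = f"[{i}] {passage}"
        if used + len(line) > max_context_chars:
            break
        lines.append(line)
        used += len(line)
    context = "\n".join(lines)
    return (
        "Answer using only the context below. "
        "If the context is insufficient, say so.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {question}"
    )


def answer(
    question: str,
    search: Callable[[str, int], List[str]],
    generate: Callable[[str], str],
    k: int = 3,
) -> str:
    passages = search(question, k)                    # vector search + retrieve
    prompt = build_rag_prompt(question, passages)     # inject into model input
    return generate(prompt)                           # generate response
```

Numbering the passages makes it possible to ask the model to cite which passage supports its answer.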

Benefits

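- reduces hallucination by grounding answers in retrieved data

- lowers cost by sending only relevant passages instead of full documents

- improves accuracy on domain-specific information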

AI Inference Optimization

Inference efficiency directly impacts:

- cost

- latency

- scalability

Techniques

- quantization

- batching

- caching

- speculative decoding

- model distillation
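
Of the techniques above, caching is the simplest to illustrate: identical requests are answered from a store instead of a new model call. `ResponseCache` is a hypothetical class, and the sketch assumes responses are safe to reuse, which generally holds only for deterministic settings and non-personalized prompts.

```python
import hashlib
import json
from typing import Any, Callable, Dict


class ResponseCache:
    """Hypothetical exact-match cache keyed on model, prompt, and parameters."""

    def __init__(self) -> None:
        self._store: Dict[str, str] = {}
        self.hits = 0
        self.misses = 0

    @staticmethod
    def _key(model: str, prompt: str, params: Dict[str, Any]) -> str:
        # sort_keys makes the key independent of parameter ordering.
        payload = json.dumps({"model": model, "prompt": prompt, "params": params}, sort_keys=True)
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()

    def get_or_compute(
        self, model: str, prompt: str, params: Dict[str, Any], compute: Callable[[], str]
    ) -> str:
        key = self._key(model, prompt, params)
        if key in self._store:
            self.hits += 1
            return self._store[key]
        self.misses += 1
        value = compute()              # the expensive model call happens only on a miss
        self._store[key] = value
        return value
```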

Common Infrastructure Mistakes

1. Over-reliance on the model

Treating the model as the whole system means ignoring:

- orchestration

- retrieval

- backend systems

2. No monitoring

Lack of visibility leads to:

- silent failures

- poor performance

3. Inefficient workflows

Unoptimized agents:

- loop unnecessarily

- waste tokens

4. Poor memory design

Sending full context repeatedly:

- increases cost

- slows systems
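
One way to avoid resending full context is to trim history to a token budget, keeping the system message and the most recent turns. This is a sketch under assumptions: `trim_history` and `estimate_tokens` are hypothetical helpers, the four-characters-per-token estimate is a rough heuristic rather than a real tokenizer, and the message dictionaries use an assumed `role`/`content` shape.

```python
from typing import Dict, List

Message = Dict[str, str]


def estimate_tokens(text: str) -> int:
    # Rough heuristic (about 4 characters per token); real tokenizers differ.
    return max(1, len(text) // 4)


def trim_history(messages: List[Message], budget_tokens: int) -> List[Message]:
    """Keep a leading system message plus the newest messages that fit the budget."""
    head: List[Message] = []
    remaining = budget_tokens
    if messages and messages[0]["role"] == "system":
        head = [messages[0]]
        remaining -= estimate_tokens(messages[0]["content"])
        messages = messages[1:]
    kept: List[Message] = []
    for message in reversed(messages):          # walk from newest to oldest
        cost = estimate_tokens(message["content"])
        if cost > remaining:
            break
        kept.append(message)
        remaining -= cost
    return head + kept[::-1]                    # restore chronological order
```

Older turns that are dropped here could instead be summarized or stored in a retrieval system, as described in the memory layer section.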

Best Practices for AI Infrastructure

Design for Modularity

Separate:

- model layer

- orchestration

- memory

- backend
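
A sketch of what this separation can look like in code: the agent depends on small interfaces, so a model provider or memory store can be swapped without touching orchestration logic. `Model`, `Memory`, `Agent`, `EchoModel`, and `ListMemory` are hypothetical names used only for illustration.

```python
from typing import List, Protocol


class Model(Protocol):
    def complete(self, prompt: str) -> str: ...


class Memory(Protocol):
    def recall(self, query: str) -> List[str]: ...
    def remember(self, text: str) -> None: ...


class Agent:
    """Orchestration depends only on the interfaces above."""

    def __init__(self, model: Model, memory: Memory) -> None:
        self.model = model
        self.memory = memory

    def ask(self, question: str) -> str:
        context = self.memory.recall(question)
        reply = self.model.complete("\n".join(context + [question]))
        self.memory.remember(f"Q: {question} A: {reply}")
        return reply


class EchoModel:
    """Stub model: echoes the last line of the prompt."""

    def complete(self, prompt: str) -> str:
        return f"echo: {prompt.splitlines()[-1]}"


class ListMemory:
    """Stub memory: returns everything it has stored."""

    def __init__(self) -> None:
        self.items: List[str] = []

    def recall(self, query: str) -> List[str]:
        return list(self.items)

    def remember(self, text: str) -> None:
        self.items.append(text)


agent = Agent(EchoModel(), ListMemory())
print(agent.ask("ping"))
```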

Optimize Early

Focus on:

- cost

- latency

- scalability

Use Hybrid Architectures

Combine:

- cloud APIs

- self-hosted systems

Build Observability

Track:

- performance

- failures

- usage

Start Simple, Scale Gradually

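Avoid over-engineering early: add components such as task queues, model routing, or self-hosted inference only when real usage calls for them.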

The Future of AI Infrastructure

AI infrastructure is evolving rapidly.

Emerging Trends

- multi-agent systems

- real-time AI pipelines

- persistent memory systems

- edge AI deployment

- autonomous workflows

Key Shift

From model-centric systems to infrastructure-centric systems.

Final Thoughts

AI agents are fundamentally infrastructure-heavy systems.

The model is only one component. The real complexity lies in:

- orchestration

- memory

- backend systems

- retrieval

- monitoring

Teams that treat AI agents as distributed systems, not just prompt engineering problems, are better positioned to build reliable, scalable, and efficient products.

Understanding AI infrastructure is now a core skill for developers working with AI agents.

Key Takeaways

- AI agents require full infrastructure stacks, not just model APIs.

- Core components include orchestration, retrieval, backend systems, and monitoring.

- Vector databases are essential for memory and context.

- Cloud and hybrid architectures are common in modern deployments.

- Infrastructure design directly impacts cost, latency, and reliability.

- Multi-agent systems require additional coordination layers.

- Observability is critical for production AI systems.

- AI development is shifting toward infrastructure engineering.

FAQ

What is AI infrastructure for agents?

It refers to the systems required to build and run AI agents, including models, retrieval systems, orchestration, and backend services.

Why do AI agents need infrastructure?

Because they perform multi-step workflows, maintain memory, and interact with external systems.

What is the most important component?

No single component works alone; orchestration and retrieval systems are as critical as the model itself.

Are vector databases necessary?

Yes, for agents that need memory, retrieval, and contextual reasoning over stored data.

What is RAG?

Retrieval-Augmented Generation, a method that retrieves relevant data and injects it into the prompt before the model generates a response.

Can AI agents run without backend systems?

Not reliably. Backend systems are needed for state, workflows, and scalability.

Is cloud or local infrastructure better?

Most systems use a hybrid approach depending on cost, privacy, and scale.

What skills are needed for AI infrastructure?

Backend engineering, distributed systems, cloud architecture, and AI integration.