A practical guide to AI API latency, comparing response times across leading platforms and explaining how latency impacts AI agents, infrastructure design, and real-world performance.


As AI agents move into production environments, latency has become one of the most critical performance metrics—often more important than raw model capability.

In simple terms, latency determines how fast an AI system responds. But in agent-based systems, latency compounds across multiple steps, making it a core factor in usability, cost, and scalability.

This guide compares latency across major AI APIs, including OpenAI, Claude (Anthropic), Google Gemini, and DeepSeek, and explains how developers optimize latency in modern AI agent systems.

What Is AI API Latency?

Latency refers to the time it takes for an AI model to:

- Receive a request

- Process input (prompt + context)

- Generate a response

- Return the output

Types of Latency

| Type | Description |
|---|---|
| First token latency | Time before the model starts responding |
| Full response latency | Total time to complete output |
| Streaming latency | Perceived speed during response generation |
| End-to-end latency | Total time including infrastructure steps |

For AI agents, end-to-end latency is the most important metric.
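
The difference between first token latency and full response latency is easiest to see by timing a stream. The sketch below is a minimal illustration, not any provider's API: `fake_token_stream` is a hypothetical stand-in for a streaming response, and the delays are made-up values.

```python
import time
from typing import Iterable, Iterator, List, Tuple


def fake_token_stream(tokens: List[str], first_delay: float,
                      per_token_delay: float) -> Iterator[str]:
    """Hypothetical stand-in for a streaming API response."""
    time.sleep(first_delay)          # simulated queueing + prompt processing
    for token in tokens:
        yield token
        time.sleep(per_token_delay)  # simulated generation time per token


def measure_stream(stream: Iterable[str]) -> Tuple[float, float, str]:
    """Return (first_token_latency, full_response_latency, text) in seconds."""
    start = time.perf_counter()
    first_token_at = None
    parts = []
    for token in stream:
        if first_token_at is None:
            first_token_at = time.perf_counter()  # first chunk arrived
        parts.append(token)
    end = time.perf_counter()
    ttft = (first_token_at - start) if first_token_at is not None else end - start
    return ttft, end - start, "".join(parts)


ttft, total, text = measure_stream(fake_token_stream(["Hel", "lo", "!"], 0.05, 0.01))
print(f"first token: {ttft * 1000:.0f} ms, full response: {total * 1000:.0f} ms")
```

To capture end-to-end latency, the same timer would need to wrap the whole request path (retrieval, tool calls, network), not just the model stream.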

Why Latency Matters for AI Agents

AI agents rarely perform a single inference.

A typical workflow might include:

- Planning

- Retrieval

- Tool execution

- Additional reasoning

- Final response

Each step adds latency.

Example

| Step | Approx Delay |
|---|---|
| Model reasoning | 1–3 seconds |
| Retrieval query | 200–500 ms |
| Tool execution | 500 ms – 2 seconds |
| Follow-up reasoning | 1–3 seconds |

Total: roughly 3–8+ seconds per task when the steps run one after another.

At scale, this becomes a major UX and performance issue.
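
The total in the example is a straight sum of the per-step ranges, assuming the steps run sequentially with no overlap. A quick check of that arithmetic, using the figures from the table (converted to milliseconds to avoid floating-point noise):

```python
# Per-step latency ranges in milliseconds (low, high), taken from the example table.
steps_ms = {
    "Model reasoning": (1000, 3000),
    "Retrieval query": (200, 500),
    "Tool execution": (500, 2000),
    "Follow-up reasoning": (1000, 3000),
}


def total_range_ms(steps):
    """Sum the low and high bounds, assuming strictly sequential steps."""
    low = sum(lo for lo, _ in steps.values())
    high = sum(hi for _, hi in steps.values())
    return low, high


low_ms, high_ms = total_range_ms(steps_ms)
print(f"Sequential total: {low_ms / 1000:.1f}-{high_ms / 1000:.1f} s")
# prints "Sequential total: 2.7-8.5 s"
```

Network overhead, queueing, and retries are not included, so real totals tend to sit above this sum.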

Key Factors That Affect AI API Latency

1. Model Size

Larger models:

- Provide better reasoning

- Require more compute

- Increase latency

2. Context Length

Long-context prompts:

- Require more processing

- Increase attention computation

- Slow response times

3. Output Length

Longer responses take more time to generate.

4. Infrastructure Location

Latency increases with:

- geographic distance

- network hops

- cloud region mismatch

5. Concurrent Requests

High system load:

- increases queue time

- slows responses

6. Retrieval Pipelines

RAG workflows add:

- vector search latency

- database queries

- ranking steps

AI API Latency Comparison

General Latency Trends

| Provider | Typical Latency Profile | Strength | Tradeoff |
|---|---|---|---|
| OpenAI | Medium | Balanced performance | Can slow with long context |
| Claude (Anthropic) | Medium to High | Long-context reasoning | Slower on large prompts |
| Google Gemini | Variable | Cloud optimization | Depends on infrastructure |
| DeepSeek | Lower to Medium | Efficient inference | Varies by deployment |
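
These profiles are qualitative, so it is worth measuring each provider against your own prompts and region. The sketch below shows one way to summarize repeated calls as p50 and p95. `fake_call` is a hypothetical placeholder, and in practice it would be replaced with a real request to the API under test.

```python
import statistics
import time
from typing import Callable, Dict


def benchmark(call: Callable[[], object], runs: int = 20) -> Dict[str, float]:
    """Time `call` repeatedly and report latency percentiles in seconds."""
    samples = []
    for _ in range(runs):
        start = time.perf_counter()
        call()
        samples.append(time.perf_counter() - start)
    samples.sort()
    # quantiles(n=20) returns 19 cut points; the last one is the 95th percentile.
    cuts = statistics.quantiles(samples, n=20, method="inclusive")
    return {"p50": statistics.median(samples), "p95": cuts[18], "max": samples[-1]}


def fake_call() -> None:
    """Hypothetical stand-in for one API request."""
    time.sleep(0.005)


stats = benchmark(fake_call, runs=20)
print({name: f"{value * 1000:.1f} ms" for name, value in stats.items()})
```

Tail percentiles such as p95 usually matter more than the average for agents, because one slow step delays every step after it.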

OpenAI Latency

OpenAI APIs are widely used for:

- AI agents

- copilots

- automation workflows

Latency Characteristics

- Fast first-token response (with streaming)

- Moderate full-response latency

- Slower with large context windows

- Optimized for real-time interactions

Best For

- Interactive agents

- coding copilots

- real-time assistants

Anthropic Claude Latency

Anthropic models are optimized for:

- long-context reasoning

- document-heavy workflows

Latency Characteristics

- Slower first-token response

- Higher latency for large documents

- Strong consistency despite longer processing time

Best For

- research agents

- enterprise workflows

- document analysis

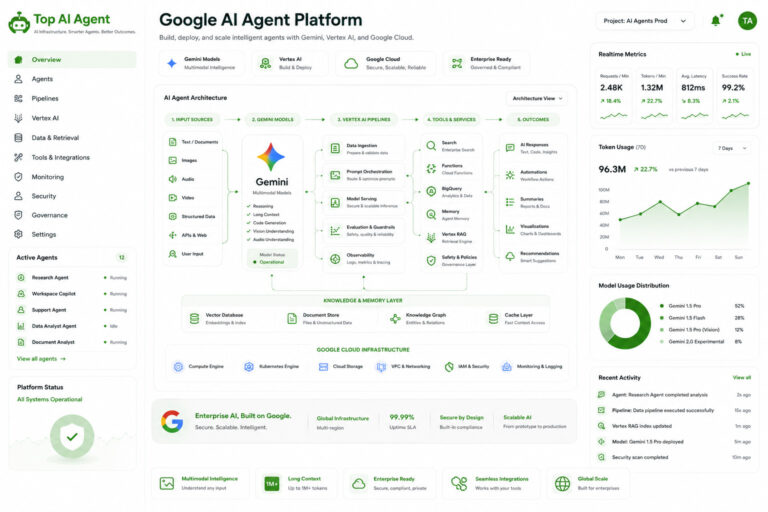

Google Gemini Latency

Google offers:

- cloud-integrated AI systems

- multimodal processing

Latency Characteristics

- Highly variable depending on infrastructure

- Faster within Google Cloud environments

- Optimized for large-scale deployments

Best For

- enterprise AI systems

- cloud-native applications

- multimodal workflows

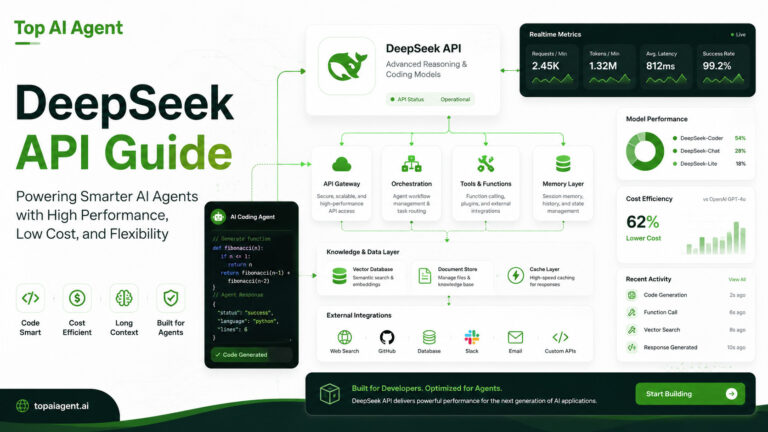

DeepSeek Latency

DeepSeek is often evaluated for:

- cost-efficient inference

- coding workflows

Latency Characteristics

- Generally lower latency for smaller models

- Efficient for coding tasks

- Performance varies by hosting provider

Best For

- background agents

- batch processing

- coding automation

Latency vs Cost Tradeoff

Latency and cost are closely related.

Tradeoff Table

| Optimization Goal | Impact |
|---|---|
| Lower latency | Higher compute cost |
| Lower cost | Higher latency |
| Long context | Slower + more expensive |
| Smaller models | Faster + cheaper |

Developers must balance:

- performance

- cost

- user experience

Real-World Latency in AI Agent Systems

Multi-Step Agent Workflow

| Step | Latency Contribution |
|---|---|
| Planning | 1–2 seconds |
| Retrieval | 200–500 ms |
| Tool execution | 500 ms – 2 seconds |
| Follow-up reasoning | 1–3 seconds |

Total System Latency

Even with fast models, AI agents often operate in the 3–10 second range per task.

This is why optimization is critical.

How Developers Reduce Latency

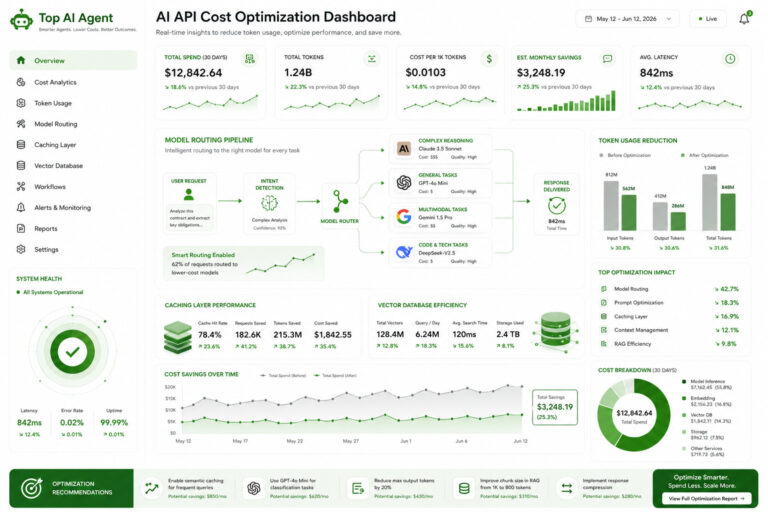

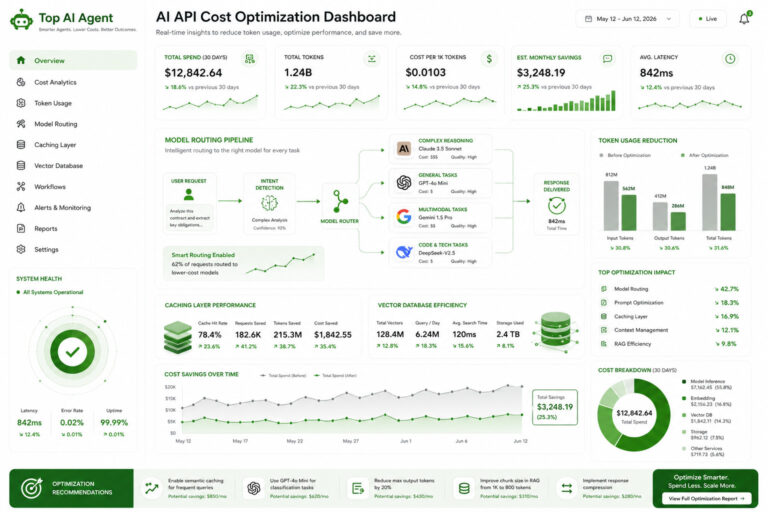

1. Model Routing

Use:

- small models for simple tasks

- large models for complex reasoning
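
A minimal sketch of routing, assuming a simple keyword-and-length heuristic. The model names, the hint words, and the 40-word threshold are all hypothetical. Production routers often use a classifier or a cheap model call instead.

```python
SMALL_MODEL = "small-fast-model"       # hypothetical model name
LARGE_MODEL = "large-reasoning-model"  # hypothetical model name

# Hypothetical hint words suggesting the request needs multi-step reasoning.
REASONING_HINTS = ("why", "plan", "compare", "analyze", "debug", "prove")


def route_model(prompt: str, max_simple_words: int = 40) -> str:
    """Pick a model name based on prompt length and reasoning hints."""
    words = [w.strip(".,?!:;") for w in prompt.lower().split()]
    needs_reasoning = any(hint in words for hint in REASONING_HINTS)
    if needs_reasoning or len(words) > max_simple_words:
        return LARGE_MODEL
    return SMALL_MODEL


print(route_model("What time is it in Tokyo?"))
print(route_model("Compare these two designs and plan a migration."))
```

The first call routes to the small model and the second to the large one. A wrong routing decision costs either latency or answer quality, so the heuristic should be checked against real traffic.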

2. Streaming Responses

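Streaming improves perceived latency by:

- showing output as soon as the first tokens are generated

- reducing the time users wait before seeing anything

It does not shorten the total generation time.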

3. Retrieval Optimization

Optimize:

- vector search speed

- indexing

- query efficiency

4. Caching

Store:

- frequent queries

- common outputs
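
A minimal sketch of a response cache keyed on the normalized prompt. `slow_model` is a hypothetical stand-in for a real API call, and the cache here is unbounded with no expiry, which a production system would need to add.

```python
import hashlib
from typing import Callable, Dict


class ResponseCache:
    """In-memory cache that skips the model call for repeated prompts."""

    def __init__(self, generate: Callable[[str], str]) -> None:
        self._generate = generate
        self._store: Dict[str, str] = {}
        self.hits = 0
        self.misses = 0

    @staticmethod
    def _key(prompt: str) -> str:
        # Normalize case and whitespace so trivially different prompts share a key.
        normalized = " ".join(prompt.lower().split())
        return hashlib.sha256(normalized.encode("utf-8")).hexdigest()

    def get(self, prompt: str) -> str:
        key = self._key(prompt)
        if key in self._store:
            self.hits += 1
            return self._store[key]
        self.misses += 1
        result = self._generate(prompt)
        self._store[key] = result
        return result


def slow_model(prompt: str) -> str:
    """Hypothetical stand-in for an expensive model call."""
    return f"answer to: {prompt.strip()}"


cache = ResponseCache(slow_model)
first = cache.get("What is latency?")
second = cache.get("  what is   LATENCY? ")  # same key after normalization
print(cache.hits, cache.misses)
```

Exact-match caching only helps when prompts repeat. Caching is also a poor fit for answers that depend on fresh data or per-user context.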

5. Parallel Execution

Run:

- multiple agent steps simultaneously
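
A minimal sketch using `asyncio.gather`, assuming the steps are independent of each other. `fake_step` is a hypothetical stand-in for a retrieval query or tool call, and the 0.1 second delays are illustrative.

```python
import asyncio
import time
from typing import List


async def fake_step(name: str, seconds: float) -> str:
    """Hypothetical stand-in for an I/O-bound agent step."""
    await asyncio.sleep(seconds)
    return name


async def run_parallel() -> List[str]:
    # gather runs the coroutines concurrently and returns results in call order.
    return await asyncio.gather(
        fake_step("retrieval", 0.1),
        fake_step("tool_a", 0.1),
        fake_step("tool_b", 0.1),
    )


start = time.perf_counter()
results = asyncio.run(run_parallel())
elapsed = time.perf_counter() - start
print(results, f"{elapsed:.2f} s")
```

Run sequentially, these three steps would take about 0.3 seconds. Run concurrently, the total is close to the slowest single step. Steps that depend on an earlier step's output still have to wait for it.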

6. Context Reduction

Smaller prompts = faster inference
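
One common way to shrink prompts is to trim conversation history to a budget. The sketch below assumes a chat-style message list with `role` and `content` keys and approximates tokens by word count. A real system would use the provider's tokenizer.

```python
from typing import Dict, List


def estimate_tokens(text: str) -> int:
    # Rough assumption: one token per whitespace-separated word.
    return len(text.split())


def trim_history(messages: List[Dict[str, str]], budget: int) -> List[Dict[str, str]]:
    """Keep a leading system message plus the most recent messages that fit."""
    if not messages:
        return []
    head = messages[:1] if messages[0]["role"] == "system" else []
    tail = messages[len(head):]
    remaining = budget - sum(estimate_tokens(m["content"]) for m in head)
    kept = []
    for message in reversed(tail):  # walk from newest to oldest
        cost = estimate_tokens(message["content"])
        if cost > remaining:
            break
        kept.append(message)
        remaining -= cost
    return head + kept[::-1]        # restore chronological order


history = [
    {"role": "system", "content": "You are helpful."},
    {"role": "user", "content": "first question about latency"},
    {"role": "assistant", "content": "a long earlier answer that no longer matters much"},
    {"role": "user", "content": "latest question"},
]
trimmed = trim_history(history, budget=8)
print([m["content"] for m in trimmed])
```

With a budget of 8, only the system message and the latest user message survive. Dropping old turns loses information, so some systems summarize them instead.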

7. Edge Deployment

Reduce distance between:

- user

- infrastructure

Latency vs Long Context Models

Long-context models introduce major latency tradeoffs.

Impact of Large Context

| Context Size | Latency Impact |
|---|---|
| Small prompts | Fast |
| Medium prompts | Moderate |
| Large prompts | Slow |
| Massive context (100K+) | Significantly slower |

Best Practice

Combine:

- retrieval (RAG)

- context compression

instead of sending full datasets every time.
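
A minimal sketch of the retrieval half of this practice: pick the few chunks most relevant to the query rather than sending everything. Scoring by keyword overlap is an assumption made to keep the example self-contained. Real RAG pipelines typically rank by embedding similarity from a vector store.

```python
from typing import List


def score(query: str, chunk: str) -> int:
    """Count the distinct query words that also appear in the chunk."""
    query_terms = set(query.lower().split())
    return len(query_terms & set(chunk.lower().split()))


def select_context(query: str, chunks: List[str], k: int = 2) -> List[str]:
    """Return up to k chunks with the highest overlap, dropping irrelevant ones."""
    ranked = sorted(chunks, key=lambda c: score(query, c), reverse=True)
    return [c for c in ranked[:k] if score(query, c) > 0]


chunks = [
    "Streaming reduces perceived latency for chat",
    "Quarterly revenue grew in the third quarter",
    "Caching avoids repeated model calls and cuts latency",
]
context = select_context("how to cut latency with caching", chunks, k=2)
print(context)
```

Here the prompt carries two short chunks instead of the full set, which keeps context size, and therefore latency, roughly constant as the dataset grows.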

Cloud vs Local Latency

Cloud APIs

Pros

- Easy scaling

- managed infrastructure

Cons

- network latency

- dependency on provider

Local / Self-Hosted

Pros

- lower network latency

- more control

Cons

- hardware limitations

- setup complexity

When Latency Matters Most

Latency is critical for:

- real-time chat agents

- voice assistants

- live copilots

- interactive tools

Less critical for:

- batch processing

- offline analysis

- background automation

The Future of AI Latency

AI latency is improving rapidly.

Trends include:

- smaller optimized models

- better inference hardware

- speculative decoding

- distributed inference

- edge AI deployment

The goal is near real-time autonomous agents.

Final Thoughts

Latency is no longer just a technical detail—it’s a core product decision.

As AI agents become more complex, latency compounds across workflows, making performance optimization essential.

The best systems are not just powerful, but also:

- efficient

- responsive

- well-architected

Developers who understand latency tradeoffs will build faster, more scalable, and more usable AI systems.

Key Takeaways

- Latency measures how fast AI APIs respond to requests.

- AI agents compound latency across multiple steps.

- OpenAI offers balanced latency, Claude trades speed for context, Gemini varies by infrastructure, and DeepSeek focuses on efficiency.

- Long context significantly increases latency.

- Optimization strategies include routing, caching, streaming, and retrieval systems.

- Real-world AI agents often operate in 3–10 second response cycles.

- Infrastructure design is as important as model choice.

- Low latency is critical for real-time AI applications.

FAQ

What is AI API latency?

It is the time it takes for an AI model to process input and return a response.

Which AI API has the lowest latency?

DeepSeek and smaller models often provide lower latency, but performance depends on deployment and workload.

Why are AI agents slower than chatbots?

Agents perform multiple steps like planning, retrieval, and tool execution, which increases total latency.

How can latency be reduced?

By using smaller models, caching, streaming, retrieval optimization, and parallel execution.

Does long context increase latency?

Yes. Larger prompts require more processing time.

What is first-token latency?

It is the time before the model starts generating output.

Is cloud or local AI faster?

Local AI can reduce network latency, but cloud systems may offer better optimized inference.

What is acceptable AI latency?

For real-time applications, 1–3 seconds is ideal. For complex agents, 3–10 seconds is common.