A practical guide to building AI agents with the OpenAI API, including function calling, Assistants, realtime workflows, pricing considerations, and infrastructure best practices.

AI agents are becoming one of the most important application layers in modern software. From autonomous research assistants to workflow automation systems and coding copilots, developers increasingly rely on APIs that can reason, retrieve information, call tools, and manage multi-step tasks.

Among the available platforms, the OpenAI API remains one of the most widely adopted choices for AI agent development.

Its ecosystem combines large language models, tool calling, structured outputs, multimodal processing, and developer tooling that simplify the process of building autonomous AI systems.

This guide explains how the OpenAI API works for AI agents, its core features, pricing considerations, strengths, limitations, and practical implementation strategies.

What Is the OpenAI API for Agents?

OpenAI provides APIs that allow developers to integrate advanced AI models into applications, workflows, and autonomous systems.

For AI agents, the OpenAI API is commonly used to power:

- Conversational assistants

- AI copilots

- Coding agents

- Research agents

- Browser automation systems

- Enterprise knowledge assistants

- Multi-step workflow orchestration

Unlike traditional chatbots, AI agents can:

- Plan tasks

- Use tools

- Retrieve external information

- Maintain memory

- Execute workflows

- Interact with APIs and software systems

The OpenAI platform includes several capabilities specifically designed for these use cases.

Core OpenAI API Features for AI Agents

Function Calling

Function calling allows models to invoke external tools and APIs.

This capability is central to modern AI agents because it enables systems to:

- Query databases

- Search the web

- Trigger workflows

- Send emails

- Execute code

- Access enterprise systems

Instead of generating only text, the model can return a structured tool call made up of a function name and JSON arguments. The application executes that call programmatically and passes the result back to the model.

Example Agent Workflow

| Step | Action |
|---|---|
| User request | “Schedule a meeting next week” |
| AI reasoning | Determines calendar access is needed |
| Function call | Invokes calendar API |
| External execution | Meeting is created |
| AI response | Confirms scheduling details |

Function calling is one of the key reasons developers use OpenAI for production-grade agents.
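The calendar workflow above can be sketched in Python. This is a minimal, offline sketch that assumes the Chat Completions tool format: a `tools` entry with `"type": "function"` and a JSON Schema under `parameters`, plus tool calls whose `arguments` arrive as a JSON-encoded string. The `schedule_meeting` tool and its fields are hypothetical, and no API request is made. The sketch only shows how an application maps a model-proposed call onto local code and builds the `tool` message it sends back.

```python
import json
from typing import Any, Callable

# Tool definition in the Chat Completions "tools" format.
# The name, description, and parameters are hypothetical examples.
SCHEDULE_MEETING_TOOL = {
    "type": "function",
    "function": {
        "name": "schedule_meeting",
        "description": "Create a calendar event.",
        "parameters": {
            "type": "object",
            "properties": {
                "title": {"type": "string"},
                "date": {"type": "string", "description": "ISO 8601 date"},
                "attendees": {"type": "array", "items": {"type": "string"}},
            },
            "required": ["title", "date", "attendees"],
        },
    },
}


def schedule_meeting(title: str, date: str, attendees: list[str]) -> dict[str, Any]:
    # Hypothetical stand-in for a real calendar API call.
    return {"status": "created", "title": title, "date": date, "attendees": attendees}


# Maps tool names the model may request to local Python callables.
TOOL_REGISTRY: dict[str, Callable[..., dict[str, Any]]] = {
    "schedule_meeting": schedule_meeting,
}


def execute_tool_call(tool_call: dict[str, Any]) -> dict[str, str]:
    """Run one model-proposed tool call and build the "tool" message to send back."""
    name = tool_call["function"]["name"]
    # The model returns arguments as a JSON string, not as a dict.
    args = json.loads(tool_call["function"]["arguments"])
    if name not in TOOL_REGISTRY:
        result: dict[str, Any] = {"error": f"unknown tool: {name}"}
    else:
        result = TOOL_REGISTRY[name](**args)
    return {
        "role": "tool",
        "tool_call_id": tool_call["id"],
        "content": json.dumps(result),
    }


if __name__ == "__main__":
    # A hand-written tool call shaped like one entry of message.tool_calls.
    example_call = {
        "id": "call_1",
        "type": "function",
        "function": {
            "name": "schedule_meeting",
            "arguments": json.dumps(
                {"title": "Weekly sync", "date": "2026-03-02", "attendees": ["ana@example.com"]}
            ),
        },
    }
    print(execute_tool_call(example_call))
```

In a live system, `SCHEDULE_MEETING_TOOL` would go in the request's `tools` list. Each returned tool call, converted to a dict, would be passed to `execute_tool_call`, and the resulting message would be appended to the conversation before the next model call. Keeping a name-to-callable registry means the model can only trigger functions the application has explicitly exposed.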

Structured Outputs

Structured outputs improve reliability for automation workflows.

Developers can enforce JSON schemas or structured response formats, making it easier to:

- Validate outputs

- Reduce parsing errors

- Connect downstream systems

- Make workflows more deterministic

This is especially useful for:

- CRM automation

- Enterprise agents

- Data extraction

- Workflow orchestration

- AI middleware systems
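Here is a hedged sketch of how this looks in practice. The Python below defines a hypothetical CRM lead schema and wraps it in the `response_format` shape used by Chat Completions Structured Outputs (`"type": "json_schema"` with `"strict": True`). It then re-validates the returned JSON before handing it downstream. Strict mode constrains generation to the schema, but a local check still guards against refusals, truncated output, or requests where strict mode is off. The validator is a deliberately small stand-in. A library such as `jsonschema` or Pydantic would be the usual choice.

```python
import json
from typing import Any

# Hypothetical schema for extracting a CRM lead from free text.
LEAD_SCHEMA: dict[str, Any] = {
    "type": "object",
    "properties": {
        "name": {"type": "string"},
        "company": {"type": "string"},
        "budget_usd": {"type": "number"},
    },
    # Strict mode expects every property in "required"
    # and additionalProperties set to false.
    "required": ["name", "company", "budget_usd"],
    "additionalProperties": False,
}

# Shape of the response_format parameter for Chat Completions Structured Outputs.
RESPONSE_FORMAT = {
    "type": "json_schema",
    "json_schema": {"name": "crm_lead", "strict": True, "schema": LEAD_SCHEMA},
}

_JSON_TYPES: dict[str, Any] = {"string": str, "number": (int, float)}


def parse_lead(raw: str) -> dict[str, Any]:
    """Parse model output and re-check it against LEAD_SCHEMA before downstream use."""
    data = json.loads(raw)
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    extra = set(data) - set(LEAD_SCHEMA["properties"])
    if extra:
        raise ValueError(f"unexpected fields: {sorted(extra)}")
    for field in LEAD_SCHEMA["required"]:
        if field not in data:
            raise ValueError(f"missing field: {field}")
        expected = _JSON_TYPES[LEAD_SCHEMA["properties"][field]["type"]]
        # JSON true/false parse to bool, a subclass of int, so reject bools explicitly.
        if isinstance(data[field], bool) or not isinstance(data[field], expected):
            raise ValueError(f"wrong type for field: {field}")
    return data
```

Failing loudly with `ValueError` lets the orchestration layer retry or escalate instead of passing a malformed record to a CRM or other downstream system.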

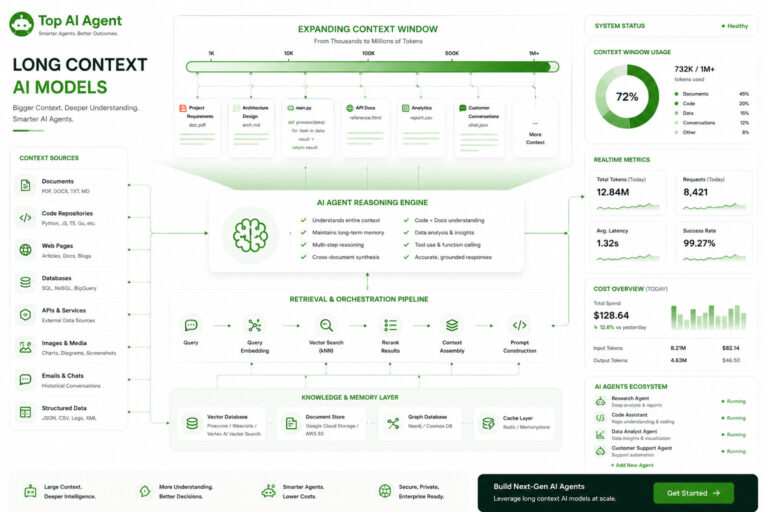

Long Context Windows

Modern AI agents often need to process:

- Large documents

- Knowledge bases

- Source code repositories

- Multi-turn conversations

- Persistent workflow histories

OpenAI models support extended context handling that enables:

- Retrieval-augmented generation (RAG)

- Long-form reasoning

- Document analysis

- Multi-step planning

However, developers still commonly combine long-context models with vector retrieval systems to improve efficiency and reduce token costs.

Multimodal Processing

The OpenAI API supports multimodal inputs including:

- Text

- Images

- Audio

- Documents

This expands AI agent capabilities into workflows such as:

- Visual document analysis

- Screenshot interpretation

- Voice assistants

- OCR pipelines

- Multimodal customer support systems
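A screenshot-interpretation request can be sketched as follows. This sketch assumes the Chat Completions content-part format, where a user message mixes a `text` part with an `image_url` part, and the image is sent inline as a base64 data URL. The helper name is hypothetical, and the sketch only builds the message; sending it requires a vision-capable model.

```python
import base64
from typing import Any


def build_image_message(question: str, image_bytes: bytes, mime: str = "image/png") -> dict[str, Any]:
    """Build a user message that pairs a text question with an inline image."""
    encoded = base64.b64encode(image_bytes).decode("ascii")
    return {
        "role": "user",
        "content": [
            {"type": "text", "text": question},
            {
                "type": "image_url",
                # A base64 data URL embeds the image; a public https URL also works here.
                "image_url": {"url": f"data:{mime};base64,{encoded}"},
            },
        ],
    }


if __name__ == "__main__":
    # In practice the bytes would come from a screenshot or scanned document file.
    message = build_image_message("What error does this screenshot show?", b"\x89PNG placeholder")
    print(message["content"][1]["image_url"]["url"][:40])
```

The returned dict goes into the `messages` list alongside any system prompt, so the same agent loop can handle text-only and image-bearing turns.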

Multimodal support is becoming increasingly important for enterprise automation.

Realtime APIs

Realtime APIs are designed for:

- Voice agents

- Streaming interactions

- Live copilots

- Interactive assistants

These APIs reduce latency and improve responsiveness during ongoing sessions.

Realtime interaction is particularly useful for:

- Customer support agents

- AI meeting assistants

- Interactive coding systems

- Live workflow automation

OpenAI Models Commonly Used for Agents

Different OpenAI models are optimized for different workloads.

Common Agent Use Cases

| Use Case | Typical Model Preference |
|---|---|
| General reasoning | GPT-series flagship models |
| Fast orchestration | Smaller optimized models |
| Coding agents | Code-focused reasoning models |
| Multimodal agents | Vision-enabled models |
| Low-latency systems | Lightweight inference models |

Many production systems use multiple models together rather than relying on a single model for every task.

This approach is known as model routing.
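A simple router can be sketched as a function that picks a model tier before each call. The tier names below are hypothetical placeholders rather than real model IDs. The rule (route known-simple task types with short prompts to the cheaper model) is one illustrative heuristic; production routers often use a classifier or the small model's own judgment instead.

```python
from dataclasses import dataclass

# Hypothetical tier names; substitute the model IDs you actually deploy.
FAST_MODEL = "small-fast-model"
REASONING_MODEL = "large-reasoning-model"

# Task types assumed simple enough for the cheaper, faster tier.
SIMPLE_TASKS = {"classify_intent", "extract_fields", "summarize_short"}


@dataclass
class Task:
    kind: str
    prompt: str


def route(task: Task, max_fast_chars: int = 4_000) -> str:
    """Pick a model tier from the task type and prompt size."""
    if task.kind in SIMPLE_TASKS and len(task.prompt) <= max_fast_chars:
        return FAST_MODEL
    # Anything unfamiliar or long defaults to the stronger model.
    return REASONING_MODEL


if __name__ == "__main__":
    print(route(Task("classify_intent", "I want to cancel my order")))
    print(route(Task("plan_migration", "Move billing to the new database")))
```

Defaulting to the stronger model keeps unknown task types on the safe side, at the cost of sending some easy work to the more expensive tier.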

OpenAI Assistants API

The Assistants API simplifies AI agent development by handling:

- Conversation state

- Tool management

- Retrieval workflows

- Persistent threads

- File interactions

Instead of building every orchestration layer manually, developers can use the Assistants API as a managed agent framework.

Benefits

- Faster development

- Reduced backend complexity

- Easier memory handling

- Native tool integration

Limitations

- Less architectural flexibility

- Platform dependency

- Potential scaling constraints for advanced custom systems

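Some startups eventually migrate toward custom orchestration frameworks as products mature. OpenAI has also announced plans to deprecate the Assistants API in favor of its newer Responses API, so teams starting new projects should check the current documentation before building on it.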

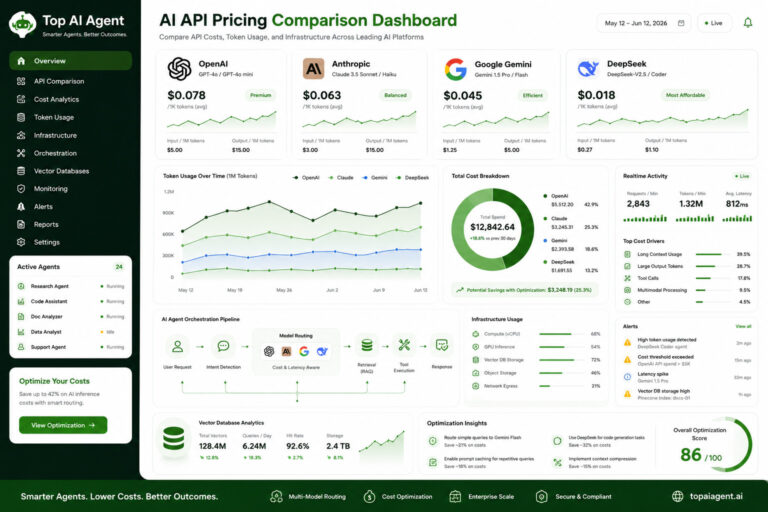

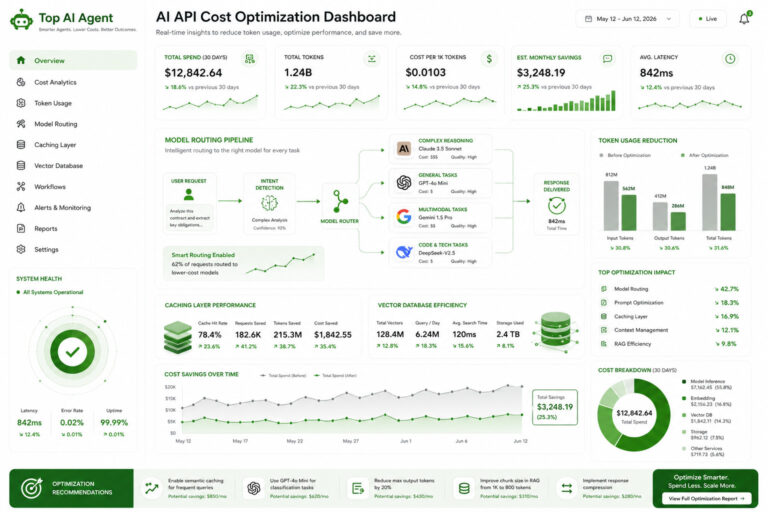

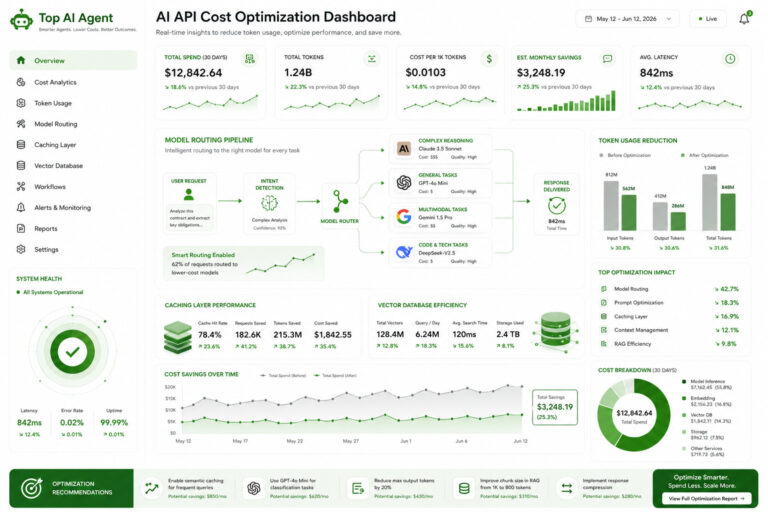

OpenAI API Pricing for Agents

API pricing is one of the most important operational considerations for AI agents.

Agent systems often generate significantly more token usage than traditional chat interfaces because they involve:

- Planning loops

- Tool calls

- Retrieval steps

- Memory injection

- Multi-agent coordination

Major Cost Factors

| Factor | Cost Impact |
|---|---|
| Input tokens | Large prompts increase costs |
| Output tokens | Long reasoning chains add expense |
| Context windows | More memory requires more tokens |
| Tool usage | Additional orchestration overhead |
| Agent retries | Failed loops can multiply spending |

For high-scale products, infrastructure optimization becomes critical.
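A worked estimate shows why agent loops cost more than single chat turns. The cost of one call is input tokens × input price + output tokens × output price, with prices quoted per million tokens. The prices below are hypothetical placeholders, not OpenAI's current rates. The sketch also assumes that each loop step re-sends the previous step's output plus the tool result it triggered, so the input grows every step.

```python
from dataclasses import dataclass

# Hypothetical USD prices per million tokens; check the current pricing page.
INPUT_PRICE_PER_M = 2.50
OUTPUT_PRICE_PER_M = 10.00


@dataclass
class Step:
    input_tokens: int
    output_tokens: int


def call_cost(step: Step) -> float:
    """Cost of one model call in USD."""
    return (step.input_tokens * INPUT_PRICE_PER_M + step.output_tokens * OUTPUT_PRICE_PER_M) / 1_000_000


def agent_run_cost(steps: list[Step]) -> float:
    return sum(call_cost(s) for s in steps)


def growing_context_steps(base_prompt: int, per_step_output: int, tool_result: int, n_steps: int) -> list[Step]:
    """Model a loop where each call re-sends earlier outputs and tool results as input."""
    steps = []
    context = base_prompt
    for _ in range(n_steps):
        steps.append(Step(context, per_step_output))
        # The next call also carries this step's output and the tool result it produced.
        context += per_step_output + tool_result
    return steps


if __name__ == "__main__":
    single = call_cost(Step(2_000, 300))
    loop = agent_run_cost(growing_context_steps(2_000, 300, 1_000, n_steps=5))
    print(f"single call: ${single:.4f}, five-step agent run: ${loop:.4f}")
```

Under these assumptions, one call with a 2,000-token prompt and a 300-token answer costs $0.008. A five-step loop starting from the same prompt sends 2,000 + 3,300 + 4,600 + 5,900 + 7,200 = 23,000 input tokens and costs $0.0725, roughly nine times as much. Because input grows every step, costs rise faster than the step count alone suggests, which is why retries and runaway loops are so expensive.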

How Developers Optimize OpenAI Agent Costs

Production teams commonly reduce costs using:

Caching

Frequently reused prompts and outputs are cached to avoid repeated inference calls.
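An application-side response cache can be sketched as follows. The cache key is a hash of the model name and the full message list, so only byte-identical requests hit the cache. This suits repeatable lookups such as FAQ answers, but not requests where varied output is wanted. `call_model` is a hypothetical stand-in for whatever function actually sends the request. This is separate from any caching the provider applies on its side.

```python
import hashlib
import json
from typing import Any, Callable

Messages = list[dict[str, Any]]


class ResponseCache:
    """In-memory cache keyed by a hash of the model name and message list."""

    def __init__(self) -> None:
        self._store: dict[str, str] = {}
        self.hits = 0
        self.misses = 0

    @staticmethod
    def key(model: str, messages: Messages) -> str:
        # sort_keys makes the key independent of dict insertion order.
        payload = json.dumps({"model": model, "messages": messages}, sort_keys=True)
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()

    def get_or_call(self, model: str, messages: Messages,
                    call_model: Callable[[str, Messages], str]) -> str:
        k = self.key(model, messages)
        if k in self._store:
            self.hits += 1
            return self._store[k]
        self.misses += 1
        result = call_model(model, messages)
        self._store[k] = result
        return result
```

A production version would typically move the store to a shared cache such as Redis and add an expiry, so answers refresh when the underlying data changes.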

Retrieval-Augmented Generation (RAG)

Instead of sending entire knowledge bases into prompts, vector retrieval injects only relevant context.
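The retrieval step can be illustrated with a toy example. The three-dimensional vectors below are made-up stand-ins for real embeddings, which would come from an embedding model and live in a vector database. The sketch ranks chunks by cosine similarity to the query vector and puts only the top matches into the prompt.

```python
import math

# Toy "embeddings" (hypothetical values); real ones have hundreds or thousands of dimensions.
CHUNKS: dict[str, list[float]] = {
    "Refunds are processed within 5 business days.": [0.9, 0.1, 0.0],
    "Our office is closed on public holidays.": [0.0, 0.2, 0.9],
    "Refund requests require an order number.": [0.8, 0.3, 0.1],
}


def cosine(a: list[float], b: list[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def top_k(query_vec: list[float], k: int = 2) -> list[str]:
    """Return the k chunks most similar to the query embedding."""
    ranked = sorted(CHUNKS, key=lambda text: cosine(query_vec, CHUNKS[text]), reverse=True)
    return ranked[:k]


def build_prompt(question: str, query_vec: list[float], k: int = 2) -> str:
    """Inject only the retrieved chunks, not the whole knowledge base."""
    context = "\n".join(f"- {chunk}" for chunk in top_k(query_vec, k))
    return f"Answer using only this context:\n{context}\n\nQuestion: {question}"


if __name__ == "__main__":
    # Hypothetical embedding of the question "How do refunds work?"
    print(build_prompt("How do refunds work?", [0.85, 0.2, 0.05]))
```

The prompt carries two short chunks instead of the entire document set, so input tokens stay roughly constant as the knowledge base grows.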

Smaller Routing Models

Lightweight models handle simpler orchestration tasks while larger models are reserved for complex reasoning.

Prompt Compression

Reducing unnecessary context lowers token consumption.

Context Management

Agents selectively retain important memory instead of preserving full histories indefinitely.
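One common form of context management is a sliding window. The sketch keeps the system message and then adds the newest turns until a token budget is reached. It assumes a rough heuristic of about four characters per token; a real tokenizer such as `tiktoken` would be more accurate.

```python
from typing import Any


def estimate_tokens(text: str) -> int:
    # Rough heuristic: about 4 characters per token for English text.
    return max(1, len(text) // 4)


def trim_history(messages: list[dict[str, Any]], budget: int) -> list[dict[str, Any]]:
    """Keep the system message(s) plus the most recent turns that fit in `budget` tokens."""
    system = [m for m in messages if m["role"] == "system"]
    rest = [m for m in messages if m["role"] != "system"]
    used = sum(estimate_tokens(m["content"]) for m in system)
    kept: list[dict[str, Any]] = []
    # Walk backwards so the newest turns are kept first.
    for m in reversed(rest):
        cost = estimate_tokens(m["content"])
        if used + cost > budget:
            break
        kept.append(m)
        used += cost
    # Restore chronological order after the backwards walk.
    return system + list(reversed(kept))
```

A production version should also avoid separating an assistant message that contains tool calls from the tool results that answer it, since the API expects them to stay together. Dropped turns are often summarized or written to a vector store rather than discarded outright.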

These techniques can dramatically reduce operational costs at scale.

OpenAI API Latency Considerations

Latency becomes especially important for AI agents because delays accumulate across multiple reasoning steps.

An agent workflow may involve:

- User instruction

- Planning

- Retrieval

- Tool execution

- Additional reasoning

- Final response

Even small delays compound quickly.
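The compounding effect, and one way to reduce it, can be demonstrated with simulated steps. When steps run one after another, their latencies add up. When steps do not depend on each other, such as two lookups that each need only the user's request, running them concurrently brings wall-clock time down to roughly the slowest step. The tools below are hypothetical and simulate network latency with `asyncio.sleep`.

```python
import asyncio
import time
from typing import Awaitable, Callable

Calls = list[tuple[str, float]]


async def fake_tool(name: str, delay_s: float) -> str:
    # Hypothetical stand-in for a network-bound tool or API call.
    await asyncio.sleep(delay_s)
    return f"{name} done"


async def run_sequential(calls: Calls) -> list[str]:
    # Each call waits for the previous one: total time is the sum of delays.
    return [await fake_tool(name, delay) for name, delay in calls]


async def run_concurrent(calls: Calls) -> list[str]:
    # Independent calls overlap: total time approaches the slowest delay.
    return await asyncio.gather(*(fake_tool(name, delay) for name, delay in calls))


def timed(runner: Callable[[Calls], Awaitable[list[str]]], calls: Calls) -> tuple[list[str], float]:
    start = time.perf_counter()
    results = asyncio.run(runner(calls))
    return results, time.perf_counter() - start


if __name__ == "__main__":
    steps = [("search", 0.2), ("crm_lookup", 0.3), ("weather", 0.1)]
    for runner in (run_sequential, run_concurrent):
        _, elapsed = timed(runner, steps)
        print(f"{runner.__name__}: {elapsed:.2f}s")
```

Steps that consume an earlier step's output, such as reasoning over retrieved documents, still have to run in order. Concurrency only helps at the independent branches of the workflow.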

Factors Affecting Latency

| Factor | Impact |
|---|---|
| Model size | Larger models are slower |
| Context length | Bigger prompts increase inference time |
| Concurrent workflows | Multiple agents increase load |
| Streaming support | Improves perceived responsiveness |
| Geographic region | Infrastructure distance matters |

Developers often balance:

- Fast orchestration models

- Slower high-reasoning models

This hybrid approach improves both responsiveness and quality.

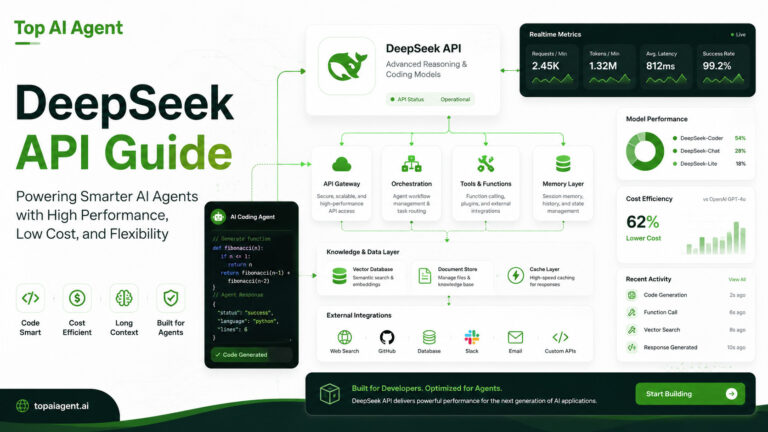

OpenAI API Architecture for AI Agents

A production AI agent stack typically includes more than the OpenAI API itself.

Common Architecture Components

| Layer | Purpose |
|---|---|
| OpenAI API | Core reasoning engine |
| Vector database | Retrieval and memory |
| Backend orchestration | Workflow management |
| Tool execution layer | External actions |
| Monitoring system | Observability and debugging |
| Queue system | Task coordination |

This infrastructure becomes increasingly important as agents grow more autonomous.
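The backend orchestration layer is often a loop around the model. The loop calls the model, executes any tool it requests, appends the result, and repeats until a final answer arrives or a step limit is hit. In the sketch below, the "model" is any function returning either `{"tool": ..., "arguments": ...}` or `{"final": ...}`. That simplified shape is a hypothetical stand-in, not the OpenAI response format, and it keeps the loop testable without network calls.

```python
import json
from typing import Any, Callable

# Hypothetical interface: takes the message history, returns a tool request or a final answer.
Model = Callable[[list[dict[str, Any]]], dict[str, Any]]


def run_agent(model: Model, tools: dict[str, Callable[..., Any]], user_input: str,
              max_steps: int = 5) -> str:
    """Minimal orchestration loop with a hard step limit."""
    messages: list[dict[str, Any]] = [{"role": "user", "content": user_input}]
    for _ in range(max_steps):
        decision = model(messages)
        if "final" in decision:
            return decision["final"]
        name, args = decision["tool"], decision.get("arguments", {})
        # Tool execution layer: only registered tools can run.
        result = tools[name](**args) if name in tools else {"error": f"unknown tool {name}"}
        # Feed the result back so the next model call can reason over it.
        messages.append({"role": "tool", "name": name, "content": json.dumps(result)})
    # Hard stop: an unbounded loop is a common cause of runaway cost.
    return "Stopped: step limit reached without a final answer."
```

In a full stack, the model function would wrap an OpenAI API call, the tool registry would front real services, and the loop would typically run inside a queue worker with its steps logged to the monitoring system.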

OpenAI API vs Other AI Agent APIs

OpenAI vs Anthropic

Anthropic is often preferred for:

- Long-context workflows

- Research-heavy systems

- Enterprise document analysis

OpenAI is frequently chosen for:

- Broad ecosystem support

- Tool integration

- Multimodal applications

- Mature developer tooling

OpenAI vs DeepSeek

DeepSeek has gained traction for:

- Lower-cost inference

- Coding-focused tasks

- Open-weight deployments

OpenAI generally offers:

- More mature infrastructure

- Stronger ecosystem integrations

- Broader enterprise adoption

OpenAI vs Google AI

Google focuses heavily on:

- Enterprise cloud integration

- Multimodal systems

- Workspace connectivity

OpenAI is often viewed as:

- Easier for rapid prototyping

- More developer-centric

- Simpler for startups and independent builders

Best Use Cases for OpenAI AI Agents

The OpenAI API is commonly used for:

Coding Agents

AI systems that write, debug, and explain code.

Enterprise Assistants

Internal knowledge retrieval and workflow automation.

Research Agents

Multi-step information gathering and synthesis.

Customer Support Automation

AI agents capable of tool-assisted support workflows.

Browser and Workflow Agents

Systems that interact with external applications and APIs autonomously.

Challenges and Limitations

Despite its popularity, OpenAI’s API ecosystem also presents challenges.

Cost Scaling

Large-scale autonomous systems can become expensive quickly.

Context Window Tradeoffs

Very large prompts increase both latency and cost.

Vendor Dependency

Closed APIs create platform lock-in concerns.

Reliability Engineering

Agent systems still require extensive monitoring and orchestration safeguards.

Hallucination Risks

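Tool-enabled agents can amplify mistakes. If tool calls are not validated carefully, a hallucinated argument becomes a real action, such as an email sent or a record changed.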

This is why production AI agents typically combine:

- Guardrails

- Validation layers

- Human oversight

- Structured workflows
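A validation layer with human oversight can be sketched as a check that runs between the model's proposed tool call and its execution. The sketch rejects tools that are not on an allowlist, enforces a simple argument rule, and flags side-effecting tools for human approval. The tool names, the refund limit, and the policy itself are hypothetical examples.

```python
from dataclasses import dataclass, field
from typing import Any

# Hypothetical policy: which tools exist and which need human sign-off.
ALLOWED_TOOLS = {"search_docs", "get_order", "send_email", "issue_refund"}
NEEDS_APPROVAL = {"send_email", "issue_refund"}
MAX_REFUND_USD = 200.0


@dataclass
class Verdict:
    allowed: bool
    needs_human: bool = False
    reasons: list[str] = field(default_factory=list)


def check_tool_call(name: str, args: dict[str, Any]) -> Verdict:
    """Validate a model-proposed tool call before the application executes it."""
    if name not in ALLOWED_TOOLS:
        return Verdict(False, reasons=[f"tool {name!r} is not allowlisted"])
    if name == "issue_refund":
        amount = args.get("amount_usd")
        # Reject missing, non-numeric, boolean, or non-positive amounts.
        if isinstance(amount, bool) or not isinstance(amount, (int, float)) or amount <= 0:
            return Verdict(False, reasons=["refund amount must be a positive number"])
        if amount > MAX_REFUND_USD:
            return Verdict(False, reasons=[f"refund exceeds the {MAX_REFUND_USD} USD limit"])
    if name in NEEDS_APPROVAL:
        return Verdict(True, needs_human=True, reasons=["side-effecting tool: route to human review"])
    return Verdict(True)
```

Read-only tools pass straight through. Actions with external side effects are allowed but paused for review, which keeps most of the agent's speed while putting a person in front of the riskiest steps.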

Is OpenAI the Best API for AI Agents?

For many developers, OpenAI remains one of the strongest general-purpose choices for AI agent development because of its:

- Mature tooling

- Strong model ecosystem

- Function calling support

- Multimodal capabilities

- Extensive developer adoption

However, the best solution depends on:

- Budget

- Infrastructure strategy

- Latency requirements

- Privacy needs

- Deployment architecture

Many organizations increasingly use hybrid multi-model systems rather than relying on a single provider.

Final Thoughts

The OpenAI API has become a foundational layer for modern AI agents.

Its combination of reasoning models, structured outputs, tool calling, retrieval support, and multimodal processing makes it suitable for a wide range of autonomous systems.

As AI agents continue evolving beyond chat interfaces into full workflow automation platforms, the surrounding infrastructure — orchestration, memory systems, vector retrieval, monitoring, and optimization — will become just as important as the models themselves.

For developers building production AI agents in 2026, understanding how to architect around the OpenAI API is now a core engineering skill.

Key Takeaways

- The OpenAI API is widely used for building AI agents and autonomous workflows.

- Function calling enables agents to interact with tools and external systems.

- Structured outputs improve reliability for automation workflows.

- Long-context support is important for memory and document-heavy tasks.

- AI agent costs increase rapidly without optimization strategies.

- Production agent systems require orchestration, retrieval, monitoring, and backend infrastructure.

- Many developers combine OpenAI with vector databases and custom orchestration frameworks.

- Hybrid multi-model architectures are becoming increasingly common.

FAQ

What is the OpenAI API used for in AI agents?

The OpenAI API is used to power reasoning, tool calling, retrieval workflows, automation systems, and conversational AI agents.

Does OpenAI support function calling?

Yes. OpenAI supports function calling, allowing AI agents to invoke external APIs, tools, and workflows programmatically.

What are OpenAI Assistants?

OpenAI Assistants are managed agent workflows that simplify conversation handling, tool usage, memory, and file interaction.

Is the OpenAI API expensive for agents?

Costs vary depending on token usage, context windows, model size, and workflow complexity. Autonomous agents can become expensive without optimization.

Can OpenAI agents use memory?

Yes. Developers commonly implement memory using retrieval systems, vector databases, and persistent conversation management.

What infrastructure is needed for AI agents?

Most production AI agents require orchestration systems, vector databases, backend services, monitoring tools, and retrieval pipelines.

How does OpenAI compare to Anthropic and DeepSeek?

OpenAI is known for its mature tooling and ecosystem, Anthropic for long-context reasoning, and DeepSeek for lower-cost coding-focused inference.

Can OpenAI APIs be used for self-hosted agents?

The OpenAI API itself is cloud-hosted, but developers can integrate it into hybrid or partially self-hosted AI agent systems.