A practical guide to reducing AI API costs using model routing, caching, retrieval systems, prompt optimization, and infrastructure strategies for scalable AI agents.

As AI agents move from prototypes to production systems, cost optimization has become one of the most important engineering challenges.

Unlike simple chatbot applications, AI agents continuously generate API calls through:

- Multi-step reasoning

- Retrieval workflows

- Tool execution

- Memory systems

- Autonomous loops

This leads to rapidly increasing token usage and infrastructure costs.

For developers building with APIs from OpenAI, Anthropic, Google, and DeepSeek, understanding cost optimization is now essential.

This guide breaks down practical strategies used by startups and enterprises to reduce AI API costs while maintaining performance.

Why AI API Costs Increase So Quickly

AI agents are fundamentally different from traditional applications.

Typical Agent Workflow

| Step | API Impact |
|---|---|
| Planning | Initial model call |
| Retrieval | Additional queries |
| Tool execution | External API calls |
| Follow-up reasoning | More inference |
| Memory updates | Extra processing |

A single user request can generate 5–20+ API calls.

At scale, this becomes expensive very quickly.

Key Cost Drivers in AI Systems

Understanding cost drivers is the first step toward optimization.

Major Cost Factors

| Factor | Impact |
|---|---|
| Token usage | Primary cost driver |
| Context size | Larger prompts increase cost |
| Output length | Long responses add expense |
| Agent loops | Recursive reasoning multiplies cost |
| Retrieval workflows | Additional context injection |
| Multimodal inputs | Higher processing cost |
| Concurrent users | Scales total usage |

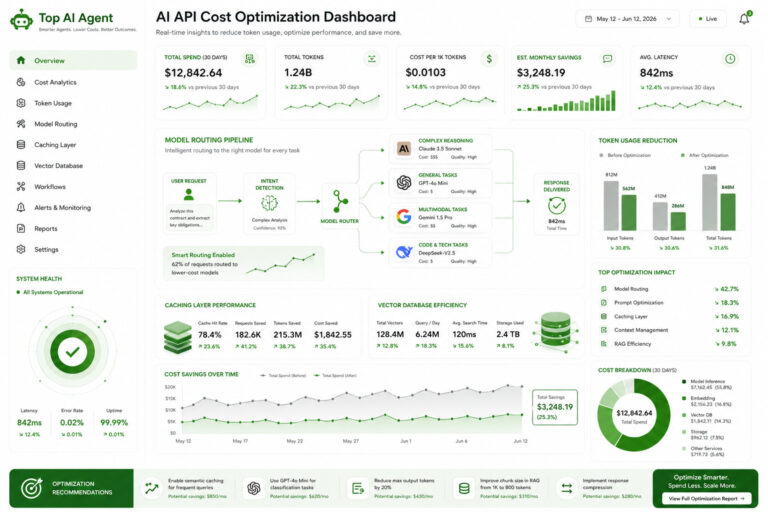

Core API Cost Optimization Strategies

1. Model Routing (Most Important)

Not every task requires a large model.

Strategy

Use:

- Smaller models → simple tasks

- Larger models → complex reasoning

Example

| Task | Model Type |
|---|---|
| Classification | Small model |
| Simple Q&A | Mid-tier model |
| Complex reasoning | Large model |

Impact

- Reduces cost, because routine requests are served by cheaper models

- Maintains output quality, because complex tasks still go to the large model

- Improves scalability
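A minimal sketch of the routing table above, assuming three hypothetical tiers. The model names and per-token prices are placeholders, not real provider rates, and a production router would usually classify the task automatically rather than receive a label.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelTier:
    name: str                 # hypothetical model identifier
    usd_per_1k_tokens: float  # hypothetical price, for illustration only


TIERS = {
    "small": ModelTier("small-model", 0.0002),
    "mid": ModelTier("mid-tier-model", 0.002),
    "large": ModelTier("large-model", 0.02),
}

# Task type -> tier, mirroring the routing table in this section.
ROUTES = {
    "classification": "small",
    "simple_qa": "mid",
    "complex_reasoning": "large",
}


def route(task_type: str) -> ModelTier:
    """Pick a tier for a task; unknown task types fall back to the large tier."""
    return TIERS[ROUTES.get(task_type, "large")]


def estimated_cost(task_type: str, tokens: int) -> float:
    """Estimate the cost in USD of one call under the placeholder prices."""
    return tokens / 1000 * route(task_type).usd_per_1k_tokens
```

Falling back to the large tier for unknown tasks favors quality over cost. Reversing that default is a reasonable choice when most traffic is simple.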

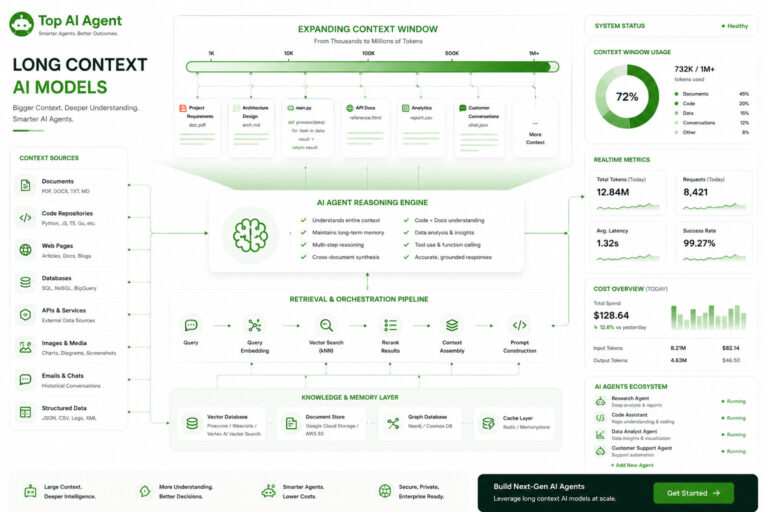

2. Retrieval-Augmented Generation (RAG)

Instead of sending large datasets to the model, use retrieval systems.

How It Works

- Store data in vector databases

- Retrieve relevant chunks

- Inject into prompt

Benefits

- Reduces token usage

- Improves accuracy

- Scales efficiently
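The three steps above can be sketched without any external service. This toy version swaps the vector database and embedding model for a bag-of-words cosine similarity, so it only illustrates the shape of the workflow: the prompt carries the top `k` chunks instead of the whole dataset. All function names are illustrative.

```python
import math
from collections import Counter


def embed(text: str) -> Counter:
    """Toy bag-of-words 'embedding'; a real system would call an embedding model."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[token] * b[token] for token in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, chunks: list[str], k: int = 2) -> list[str]:
    """Return the k chunks most similar to the query."""
    q = embed(query)
    return sorted(chunks, key=lambda c: cosine(q, embed(c)), reverse=True)[:k]


def build_prompt(query: str, chunks: list[str], k: int = 2) -> str:
    """Inject only the retrieved chunks into the prompt."""
    context = "\n".join(retrieve(query, chunks, k))
    return f"Context:\n{context}\n\nQuestion: {query}"
```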

3. Prompt Optimization

Prompts often contain unnecessary information.

Techniques

- Remove redundant instructions

- Use structured inputs

- Minimize verbosity

- Use templates

Example

Instead of:

“Please carefully analyze and respond…”

Use:

“Analyze and respond.”

Impact

- Lower token usage

- Faster responses

- Reduced cost

4. Output Control

Limit unnecessary output generation.

Methods

- Set max token limits

- Use concise response formats

- Request structured outputs

Example

Instead of:

“Explain in detail…”

Use:

“Provide a 3-sentence summary.”

5. Caching (High ROI)

Many AI requests are repetitive.

What to Cache

- Frequent queries

- Static responses

- Embeddings

- Retrieval results

Impact

- Eliminates redundant API calls

- Reduces latency

- Improves system efficiency
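As a rough illustration, an exact-match response cache can be a dictionary keyed by a hash of the model name and prompt. The `call_model` argument is a hypothetical callable standing in for whatever client performs the real API request. This sketch is in-memory only and has no expiry, which a production cache would need.

```python
import hashlib
import json


class ResponseCache:
    """In-memory exact-match cache keyed by a hash of model name and prompt."""

    def __init__(self):
        self._store = {}
        self.hits = 0
        self.misses = 0

    @staticmethod
    def _key(model, prompt):
        payload = json.dumps({"model": model, "prompt": prompt}, sort_keys=True)
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()

    def get_or_call(self, model, prompt, call_model):
        """Return the cached response, or call the model once and store the result."""
        key = self._key(model, prompt)
        if key in self._store:
            self.hits += 1
            return self._store[key]
        self.misses += 1
        result = call_model(model, prompt)
        self._store[key] = result
        return result
```

Tracking `hits` and `misses` makes it easy to check whether the cache is paying for itself.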

6. Context Management

Sending the full conversation history on every call is expensive, because the input token count grows with each turn.

Strategies

- Summarize older messages

- Keep only relevant context

- Use memory tiers

Example

| Approach | Cost |
|---|---|
| Full history | High |
| Summarized memory | Low |
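One way to implement summarized memory is to keep the most recent messages verbatim and fold everything older into a single summary message. In this sketch the message shape (`role` and `content` keys) is an assumption, and `truncate_summary` is a placeholder for a model-generated summary.

```python
def truncate_summary(messages, max_chars=40):
    """Placeholder summarizer: joins truncated message contents."""
    return " | ".join(m["content"][:max_chars] for m in messages)


def compact_history(messages, keep_recent=4, summarize=truncate_summary):
    """Keep the last `keep_recent` messages and replace older ones with one summary."""
    split = max(len(messages) - keep_recent, 0)
    older, recent = messages[:split], messages[split:]
    if not older:
        return list(messages)  # nothing old enough to summarize
    summary = {
        "role": "system",
        "content": "Summary of earlier conversation: " + summarize(older),
    }
    return [summary] + recent
```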

7. Reduce Agent Loops

Poorly designed agents can:

- retry unnecessarily

- loop indefinitely

- reprocess the same data

Fixes

- Add retry limits

- Track state

- Validate outputs

- Avoid redundant calls
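The fixes above can be combined in a small loop guard. This is a sketch under stated assumptions: `step` and `validate` are hypothetical callables supplied by the agent, and step outputs must be hashable so that repeated work can be detected.

```python
def run_agent(step, validate, max_steps=5):
    """Run `step` until `validate` accepts its output or the step budget is spent."""
    seen = set()  # outputs already produced, used to detect repeated work
    for attempt in range(1, max_steps + 1):
        output = step(attempt)
        if validate(output):
            return {"status": "ok", "output": output, "steps": attempt}
        if output in seen:
            # Same failing output twice: stop instead of paying for another call.
            return {"status": "stalled", "output": output, "steps": attempt}
        seen.add(output)
    return {"status": "budget_exhausted", "output": None, "steps": max_steps}
```

Returning a status instead of raising lets the caller decide whether to escalate to a larger model or report the failure to the user.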

8. Parallel vs Sequential Execution

Optimize workflow execution.

Strategy

- Run independent steps in parallel

- Avoid unnecessary sequential calls

Impact

- Reduces latency

- Improves efficiency

- Lowers cost indirectly
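To illustrate the difference, the sketch below runs the same independent steps sequentially and then concurrently with `asyncio.gather`. `fake_call` is a stand-in for a real API or tool call, and the delays are arbitrary.

```python
import asyncio
import time


async def fake_call(name, delay):
    """Stand-in for an independent API or tool call."""
    await asyncio.sleep(delay)
    return name


async def run_sequential(tasks):
    """Await each call before starting the next one."""
    return [await fake_call(name, delay) for name, delay in tasks]


async def run_parallel(tasks):
    """Start all calls at once; results come back in input order."""
    return await asyncio.gather(*(fake_call(name, delay) for name, delay in tasks))


def timed(coro_fn, tasks):
    """Run a coroutine function and return (results, elapsed_seconds)."""
    start = time.perf_counter()
    results = asyncio.run(coro_fn(tasks))
    return results, time.perf_counter() - start
```

Both versions make the same number of calls, so the token bill is unchanged. The saving is in wall-clock time per request.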

9. Embedding Optimization

Embeddings power retrieval systems.

Tips

- Cache embeddings

- Avoid re-embedding unchanged data

- Batch embedding requests

Impact

- Lower embedding compute cost, because unchanged text is never embedded twice

- Faster indexing and retrieval
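The three tips can be combined in one small wrapper: hash each text, skip anything already embedded, and send the rest in batches. `embed_batch` is a hypothetical callable (a list of strings in, a list of vectors out) standing in for a real embedding client.

```python
import hashlib


class EmbeddingStore:
    """Caches embeddings by content hash and embeds new texts in batches."""

    def __init__(self, embed_batch, batch_size=2):
        self._embed_batch = embed_batch  # hypothetical: list[str] -> list[vector]
        self._batch_size = batch_size
        self._cache = {}

    @staticmethod
    def _digest(text):
        return hashlib.sha256(text.encode("utf-8")).hexdigest()

    def embed(self, texts):
        # Collect unique texts that have not been embedded yet.
        pending = {}
        for text in texts:
            digest = self._digest(text)
            if digest not in self._cache:
                pending[digest] = text
        items = list(pending.items())
        # Send the uncached texts in batches instead of one request per text.
        for i in range(0, len(items), self._batch_size):
            batch = items[i:i + self._batch_size]
            vectors = self._embed_batch([text for _, text in batch])
            for (digest, _), vector in zip(batch, vectors):
                self._cache[digest] = vector
        return [self._cache[self._digest(text)] for text in texts]
```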

10. Hybrid Infrastructure (Advanced)

Combine:

- Cloud APIs

- Self-hosted models

Strategy

| Task | Deployment |
|---|---|
| Sensitive data | Local |
| Heavy reasoning | Cloud |
| Simple tasks | Local |

Benefits

- Reduces API costs

- Improves control

- Optimizes performance
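The deployment table can be read as a short decision rule. The sketch below assumes that sensitivity takes precedence when a task is both sensitive and reasoning-heavy. That ordering is an assumption, not something the table specifies.

```python
def choose_deployment(contains_sensitive_data: bool, needs_heavy_reasoning: bool) -> str:
    """Return "local" or "cloud" for a task, following the table in this section."""
    if contains_sensitive_data:
        return "local"  # assumed precedence: sensitive data stays on local models
    if needs_heavy_reasoning:
        return "cloud"
    return "local"      # simple tasks run on the self-hosted model
```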

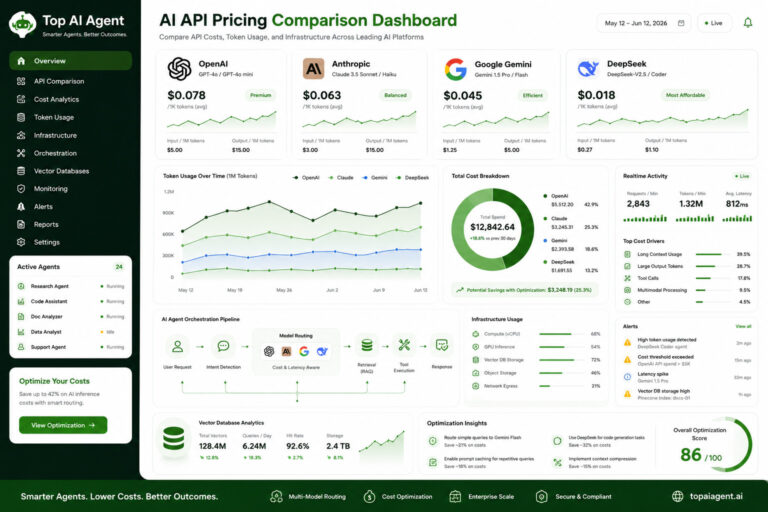

Cost Optimization by Provider

OpenAI

Optimization Focus

- Model routing

- Prompt compression

- Caching

- Streaming

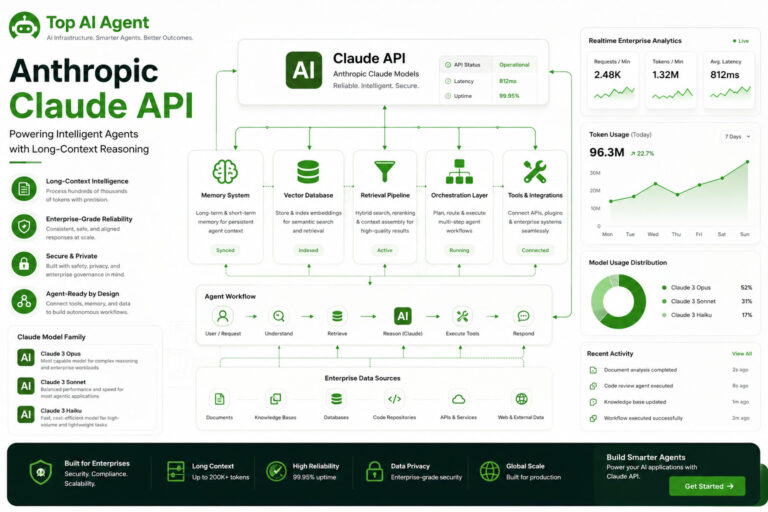

Anthropic Claude

Optimization Focus

- Context reduction

- Document chunking

- RAG workflows

Google Gemini

Optimization Focus

- Cloud resource efficiency

- Infrastructure optimization

- Workload distribution

DeepSeek

Optimization Focus

- Cost-efficient inference

- Coding workflows

- Batch processing

Real-World Cost Optimization Example

Without Optimization

| Metric | Value |
|---|---|
| API calls per request | 12 |
| Tokens per request | 25K |
| Monthly cost | High |

With Optimization

| Metric | Value |
|---|---|
| API calls per request | 4–6 |
| Tokens per request | 8K |
| Monthly cost | Reduced significantly |
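To put rough numbers on the two tables, the sketch below uses their token counts (25K and 8K per request). The request volume and blended token price are hypothetical placeholders. The percentage reduction depends only on the token counts, so it holds whatever price is plugged in.

```python
def monthly_cost(tokens_per_request, requests_per_month, usd_per_million_tokens):
    """Monthly token spend in USD."""
    return tokens_per_request * requests_per_month * usd_per_million_tokens / 1_000_000


REQUESTS_PER_MONTH = 100_000   # hypothetical traffic
USD_PER_MILLION_TOKENS = 2.0   # hypothetical blended price, not a real rate

before = monthly_cost(25_000, REQUESTS_PER_MONTH, USD_PER_MILLION_TOKENS)
after = monthly_cost(8_000, REQUESTS_PER_MONTH, USD_PER_MILLION_TOKENS)
reduction = 1 - after / before

print(f"${before:,.0f} -> ${after:,.0f} ({reduction:.0%} lower)")
# prints: $5,000 -> $1,600 (68% lower)
```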

Hidden Infrastructure Costs

API cost is only part of the equation.

Additional Cost Layers

| Layer | Cost Area |
|---|---|
| Vector database | Storage and queries |
| Backend systems | Compute |
| Monitoring tools | Observability |
| Retrieval systems | Indexing |
| Cloud infrastructure | Networking |

Common Cost Optimization Mistakes

1. Overusing Large Models

Using high-end models for simple tasks.

2. Ignoring Context Size

Sending unnecessary data.

3. No Caching

Repeating identical requests.

4. Poor Agent Design

Inefficient workflows and loops.

5. No Monitoring

Lack of cost visibility.

Cost Optimization Stack for AI Agents

Recommended Architecture

| Layer | Optimization Strategy |
|---|---|
| Model layer | Routing and selection |
| Retrieval layer | RAG and indexing |
| Backend layer | Orchestration efficiency |
| Memory layer | Summarization |
| Monitoring | Cost tracking |
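For the monitoring layer, cost tracking can start as a simple accumulator of token usage per feature and model. This is a minimal sketch: the price table passed in is hypothetical, and a real setup would read token counts from the provider's usage data and export totals to a metrics system.

```python
from collections import defaultdict


class CostTracker:
    """Accumulates token usage and reports estimated spend per feature."""

    def __init__(self, usd_per_1k_tokens):
        self._prices = usd_per_1k_tokens  # hypothetical: model name -> USD per 1K tokens
        self._tokens = defaultdict(int)

    def record(self, feature, model, tokens):
        """Add the tokens consumed by one call."""
        self._tokens[(feature, model)] += tokens

    def cost_by_feature(self):
        """Return estimated USD spend for each feature across all models."""
        totals = defaultdict(float)
        for (feature, model), tokens in self._tokens.items():
            totals[feature] += tokens / 1000 * self._prices[model]
        return dict(totals)
```

Grouping by feature rather than by model shows which part of the product drives spend, which is usually the first question when a bill spikes.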

When to Optimize Costs

Cost optimization becomes critical when:

- scaling to production

- handling large user bases

- running multi-agent systems

- using long-context models

- operating continuously

The Future of AI Cost Optimization

AI systems are becoming more infrastructure-heavy.

Future trends include:

- dynamic model routing

- cost-aware AI systems

- automated optimization pipelines

- hybrid cloud-local architectures

- inference optimization techniques

The focus is shifting from the “best model” to the “most efficient system.”

Final Thoughts

AI API cost optimization is no longer optional; it is a core part of building scalable AI systems.

As AI agents grow more complex, costs can quickly spiral without proper architecture.

The most effective teams focus on:

- efficient workflows

- smart model selection

- retrieval systems

- context management

- infrastructure optimization

In modern AI development, efficiency is just as important as intelligence.

Key Takeaways

- AI agents significantly increase API usage compared to simple chat apps.

- Token usage is the primary cost driver.

- Model routing is the most impactful optimization strategy.

- Retrieval systems reduce cost and improve performance.

- Caching eliminates redundant API calls.

- Context management is essential for scalability.

- Hybrid architectures can reduce long-term costs.

- Cost optimization is a core AI engineering discipline.

FAQ

What is AI API cost optimization?

It refers to strategies used to reduce token usage, API calls, and infrastructure costs in AI systems.

What is the biggest cost driver?

Token usage is the primary cost factor.

How can I reduce AI API costs?

Use model routing, caching, prompt optimization, and retrieval systems.

What is model routing?

It involves using different models based on task complexity.

Does long context increase cost?

Yes. Larger prompts significantly increase token usage and cost.

What is RAG in cost optimization?

Retrieval-Augmented Generation reduces prompt size by injecting only relevant data.

Is caching useful for AI?

Yes. It eliminates redundant API calls and reduces cost.

Can I reduce costs with self-hosted models?

Yes, but it introduces infrastructure complexity.