A practical guide to the Anthropic Claude API for AI agents, covering long-context reasoning, enterprise workflows, pricing considerations, infrastructure design, and production deployment strategies.

As AI agents become more capable, developers are increasingly looking beyond raw model intelligence and focusing on reliability, long-context reasoning, workflow orchestration, and enterprise usability.

One platform that has gained significant traction in these areas is Anthropic, with its Claude model ecosystem.

Claude models are widely used for:

- Long-document analysis

- Research workflows

- Enterprise assistants

- AI knowledge systems

- Multi-step reasoning tasks

- Agentic automation systems

For many developers and enterprises, the Anthropic Claude API has become a strong alternative to other major AI model providers because of its context handling, conversational stability, and enterprise-focused approach.

This guide explains how the Claude API works for AI agents, including core features, pricing considerations, infrastructure strategies, strengths, limitations, and practical deployment advice.

What Is the Anthropic Claude API?

The Anthropic Claude API provides developers with access to large language models designed for:

- Reasoning

- Long-context processing

- Conversational AI

- Enterprise automation

- Retrieval workflows

- AI agent systems

Claude models are particularly known for:

- Extended context windows

- Reliable instruction following

- Long-form analysis

- Stable conversational behavior

This makes them especially useful for AI agents that need to process:

- Large knowledge bases

- Technical documentation

- Research material

- Enterprise records

- Multi-step workflows

Why Developers Use Claude for AI Agents

Modern AI agents often need to:

- Maintain long-term memory

- Analyze extensive documents

- Coordinate workflows

- Retrieve contextual information

- Reason across multiple tasks

Many AI APIs perform well in short conversational tasks but become less reliable in large-context workflows.

Claude is frequently chosen for:

- Long-form reasoning

- Enterprise assistants

- Retrieval-heavy systems

- Research automation

- Persistent conversational workflows

For organizations building knowledge-centric AI agents, context reliability is often more important than benchmark performance alone.

Core Anthropic Claude API Features

Long Context Windows

One of Claude’s most widely discussed features is long-context support.

Large context windows allow AI agents to:

- Process lengthy documents

- Analyze contracts

- Read code repositories

- Maintain extended conversations

- Handle research workflows

Common Use Cases

| Workflow | Why Long Context Matters |
|---|---|
| Legal analysis | Large document review |
| Enterprise search | Multi-document reasoning |
| Coding agents | Repository understanding |
| Research assistants | Many sources kept in view within one session |
| Internal copilots | Organizational knowledge retrieval |

Long-context support is especially valuable for AI agents operating inside enterprise environments.

Strong Conversational Stability

Claude models are often selected for:

- Stable responses

- Reliable formatting

- Consistent instruction adherence

- Lower conversational drift

This matters for production AI agents because unreliable outputs can break:

- Automation systems

- Tool pipelines

- Workflow orchestration

- Structured reasoning chains

Developers frequently prioritize predictability over raw creativity in enterprise workflows.
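One common way to protect a pipeline from unreliable output is to validate every model response before acting on it. The sketch below assumes a hypothetical convention in which the model returns a JSON object with `action` and `arguments` keys; the schema and the function name `parse_tool_call` are illustrative, not part of the Claude API.

```python
import json

# Hypothetical schema: every tool request must carry these two keys.
REQUIRED_KEYS = {"action", "arguments"}


def parse_tool_call(raw_output: str) -> dict:
    """Parse model output as JSON and reject anything that breaks the schema."""
    try:
        data = json.loads(raw_output)
    except json.JSONDecodeError as exc:
        raise ValueError(f"model output is not valid JSON: {exc}") from exc
    if not isinstance(data, dict):
        raise ValueError("model output must be a JSON object")
    missing = REQUIRED_KEYS - data.keys()
    if missing:
        raise ValueError(f"model output is missing keys: {sorted(missing)}")
    if not isinstance(data["arguments"], dict):
        raise ValueError("'arguments' must be a JSON object")
    return data
```

A caller would typically catch `ValueError` and retry the request or fall back to a safe default instead of passing malformed output downstream.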

Retrieval-Augmented Generation (RAG) Workflows

The Claude API is commonly integrated into RAG systems.

Retrieval-augmented generation allows AI agents to:

- Search external knowledge

- Inject relevant context dynamically

- Reduce hallucinations

- Avoid oversized prompts

Production Claude agents often combine:

- Vector databases

- Retrieval pipelines

- Memory systems

- Context management layers

This architecture improves both scalability and reliability.
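The retrieval step can be sketched in a few lines. This example uses a toy word-count "embedding" and cosine similarity so that it runs without external services; a real system would swap in an embedding model and a vector database. The names `embed`, `retrieve`, and `build_prompt` are assumptions made for illustration.

```python
import math
from collections import Counter


def embed(text: str) -> Counter:
    """Toy embedding: word counts. A real system would call an embedding model."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[w] * b[w] for w in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, documents: list[str], k: int = 2) -> list[str]:
    """Return the k documents most similar to the query."""
    q = embed(query)
    ranked = sorted(documents, key=lambda d: cosine(q, embed(d)), reverse=True)
    return ranked[:k]


def build_prompt(query: str, documents: list[str], k: int = 2) -> str:
    """Inject only the top-k passages into the prompt instead of every document."""
    context = "\n".join(f"- {d}" for d in retrieve(query, documents, k))
    return f"Use only this context:\n{context}\n\nQuestion: {query}"
```

The prompt returned by `build_prompt` is what would be sent to the model, so prompt size grows with `k` and not with the size of the knowledge base.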

Enterprise AI Workflows

The Claude API is frequently used for:

- Internal enterprise assistants

- Knowledge retrieval systems

- Customer support automation

- Compliance workflows

- Document-heavy automation

Organizations often value the following more than pure coding performance:

- Conversational reliability

- Long-context handling

- Safer response behavior

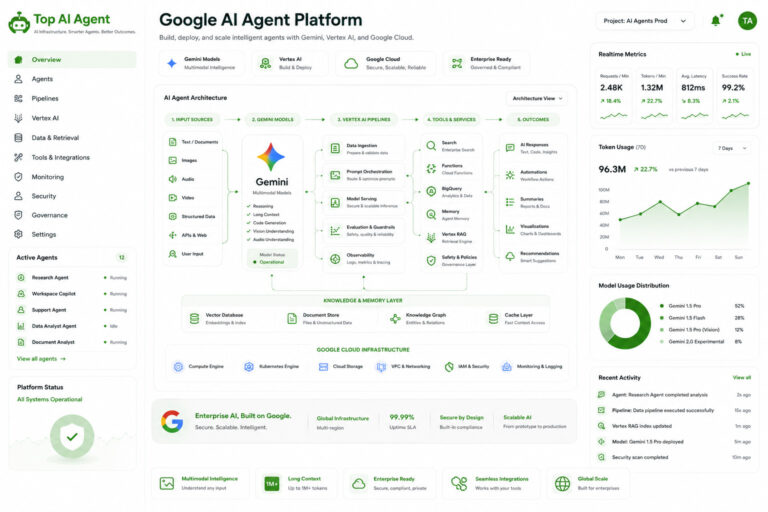

Claude API for AI Agent Systems

AI agents require much more than simple prompt-response interactions.

Production systems often involve:

- Memory

- Retrieval

- Tool execution

- Workflow orchestration

- State management

- Backend infrastructure

The Claude API is typically one component within these larger systems.

Typical AI Agent Architecture

| Infrastructure Layer | Purpose |
|---|---|
| Claude API | Reasoning and language understanding |
| Vector database | Retrieval and memory |
| Orchestration layer | Workflow coordination |
| Backend system | State persistence |
| Monitoring tools | Observability and debugging |
| Tool execution layer | External actions |

This modular architecture has become increasingly common in enterprise AI systems.
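As a rough illustration of how these layers fit together, the sketch below wires them into one small class. Every layer is a plain Python callable so the example runs on its own: `model` stands in for the Claude API call, `retriever` for the vector database, and the `TOOL:name:argument` reply format is a hypothetical convention invented for this example.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Agent:
    model: Callable[[str], str]                 # stands in for the Claude API call
    retriever: Callable[[str], list[str]]       # stands in for the vector database
    tools: dict[str, Callable[[str], str]]      # tool execution layer
    state: list[dict] = field(default_factory=list)  # backend state persistence
    log: list[str] = field(default_factory=list)     # monitoring and debugging

    def run(self, user_input: str) -> str:
        """Orchestration layer: retrieve, reason, optionally act, then persist."""
        context = self.retriever(user_input)
        self.log.append(f"retrieved {len(context)} passages")
        reply = self.model("\n".join(context + [user_input]))
        # Hypothetical convention: "TOOL:name:argument" requests a tool call.
        if reply.startswith("TOOL:"):
            _, name, arg = reply.split(":", 2)
            self.log.append(f"tool call: {name}")
            reply = self.tools[name](arg)
        self.state.append({"input": user_input, "output": reply})
        return reply
```

Keeping each layer behind a simple callable makes it possible to replace one component, such as the model provider or the retriever, without rewriting the rest.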

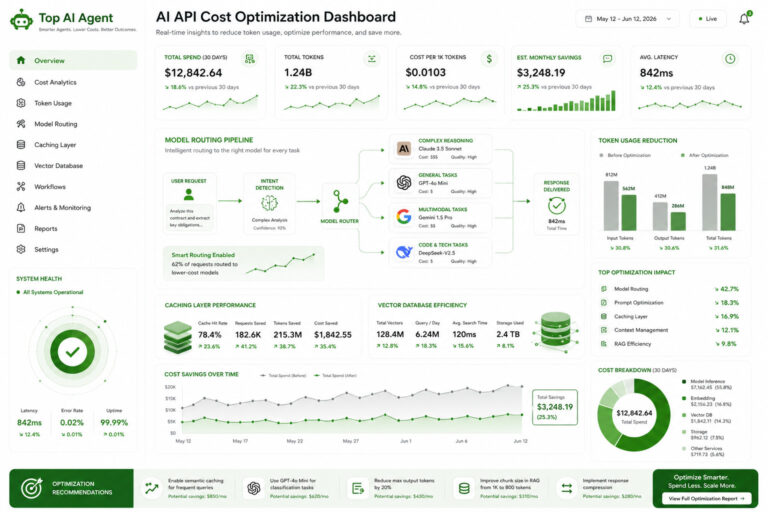

Claude API Pricing Considerations

Pricing is one of the most important operational concerns for AI agents.

Autonomous systems often generate:

- Large prompts

- Long outputs

- Multi-step reasoning loops

- Continuous retrieval calls

This can significantly increase inference costs.

Major Cost Factors

| Factor | Cost Impact |
|---|---|
| Context size | Larger prompts increase spending |
| Output length | Long reasoning chains cost more |
| Retrieval workflows | Additional context injection |
| Agent loops | Recursive execution increases usage |
| Multi-agent systems | Parallel processing overhead |

Long-context models can become expensive without proper optimization strategies.
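A back-of-the-envelope estimate shows how these factors multiply. The sketch below assumes token-based pricing with separate input and output rates per million tokens; the rates used in the example are placeholders, not actual Claude prices, so current figures should be taken from Anthropic's pricing page.

```python
def estimate_cost(
    input_tokens: int,
    output_tokens: int,
    steps: int,
    price_in_per_mtok: float,
    price_out_per_mtok: float,
) -> float:
    """Estimate the cost of an agent run where every step sends a similar prompt."""
    per_step = (
        input_tokens * price_in_per_mtok + output_tokens * price_out_per_mtok
    ) / 1_000_000
    return per_step * steps


# Placeholder rates (2.0 in, 10.0 out per million tokens), for illustration only.
full_context = estimate_cost(50_000, 1_000, steps=10, price_in_per_mtok=2.0, price_out_per_mtok=10.0)
with_retrieval = estimate_cost(5_000, 1_000, steps=10, price_in_per_mtok=2.0, price_out_per_mtok=10.0)
print(f"full context: {full_context:.2f}, with retrieval: {with_retrieval:.2f}")
```

Under these placeholder numbers, a ten-step loop that resends 50,000 input tokens per step costs about 1.10, while trimming each prompt to 5,000 tokens brings it to about 0.20.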

How Developers Optimize Claude API Costs

Retrieval Systems

Instead of sending entire documents with every request, agents use vector retrieval to inject only the relevant passages.

Context Compression

Agents summarize older conversation history instead of preserving everything indefinitely.
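A minimal sketch of this idea: keep the most recent turns verbatim and replace everything older with a single summary message. The `summarize` argument is an assumed callable, which in practice might be a call to a smaller model, and the message format with `role` and `content` keys is used here only for illustration.

```python
from typing import Callable


def compress_history(
    messages: list[dict],
    keep_recent: int,
    summarize: Callable[[str], str],
) -> list[dict]:
    """Replace all but the last `keep_recent` messages with one summary message."""
    if len(messages) <= keep_recent:
        return list(messages)
    split = len(messages) - keep_recent
    older, recent = messages[:split], messages[split:]
    transcript = "\n".join(f"{m['role']}: {m['content']}" for m in older)
    summary = {
        "role": "user",
        "content": f"Summary of earlier conversation: {summarize(transcript)}",
    }
    return [summary] + recent
```

The trade-off is that detail in the older turns is lost, so `keep_recent` should be large enough to cover whatever the agent still needs word for word.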

Hybrid Model Routing

Smaller models handle lightweight orchestration tasks while larger reasoning models handle complex analysis.
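Routing can be as simple as a rule that looks at the task type and prompt size. In the sketch below, the model names, the set of "complex" task labels, and the token threshold are all placeholders chosen for illustration; a real deployment would tune them against its own workloads.

```python
# Hypothetical task labels that should always go to the larger model.
COMPLEX_TASKS = {"analysis", "planning", "code_review"}


def choose_model(
    task: str,
    prompt_tokens: int,
    small_model: str = "small-model",   # placeholder identifier
    large_model: str = "large-model",   # placeholder identifier
    token_threshold: int = 4000,
) -> str:
    """Send complex or large requests to the large model, everything else to the small one."""
    if task in COMPLEX_TASKS or prompt_tokens > token_threshold:
        return large_model
    return small_model
```

For example, `choose_model("classification", 300)` selects the small model, while `choose_model("analysis", 300)` selects the large one.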

Caching

Frequently reused prompts and outputs are stored to reduce repeated inference calls.
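An application-level cache can be sketched as a dictionary keyed by a hash of the prompt. Here `call_model` is an assumed callable standing in for the actual API request, and the class name `ResponseCache` is illustrative. This approach only helps when identical prompts recur and a repeated answer is acceptable.

```python
import hashlib
from typing import Callable


class ResponseCache:
    """Return stored responses for prompts that have been seen before."""

    def __init__(self, call_model: Callable[[str], str]):
        self._call_model = call_model
        self._store: dict[str, str] = {}
        self.hits = 0
        self.misses = 0

    def get(self, prompt: str) -> str:
        key = hashlib.sha256(prompt.encode("utf-8")).hexdigest()
        if key in self._store:
            self.hits += 1
            return self._store[key]
        # Cache miss: pay for one inference call and remember the result.
        self.misses += 1
        self._store[key] = self._call_model(prompt)
        return self._store[key]
```

Tracking `hits` and `misses` makes it easy to check whether the cache is actually reducing inference calls for a given workload.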

Selective Memory Retention

Only high-value information is persisted long term.
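One simple way to express this is a filter over scored memory items. The `importance` score below is hypothetical; it could come from a heuristic, user feedback, or a model-based rating, and the threshold and item limit are illustrative defaults.

```python
from dataclasses import dataclass


@dataclass
class MemoryItem:
    text: str
    importance: float  # hypothetical score in [0, 1]


def select_for_long_term(
    items: list[MemoryItem],
    threshold: float = 0.7,
    max_items: int = 100,
) -> list[MemoryItem]:
    """Keep only items at or above the threshold, most important first."""
    kept = [item for item in items if item.importance >= threshold]
    kept.sort(key=lambda item: item.importance, reverse=True)
    return kept[:max_items]
```

Items that fall below the threshold are simply not written to long-term storage, which keeps both storage and later retrieval context small.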

These optimization techniques are essential for production AI systems.

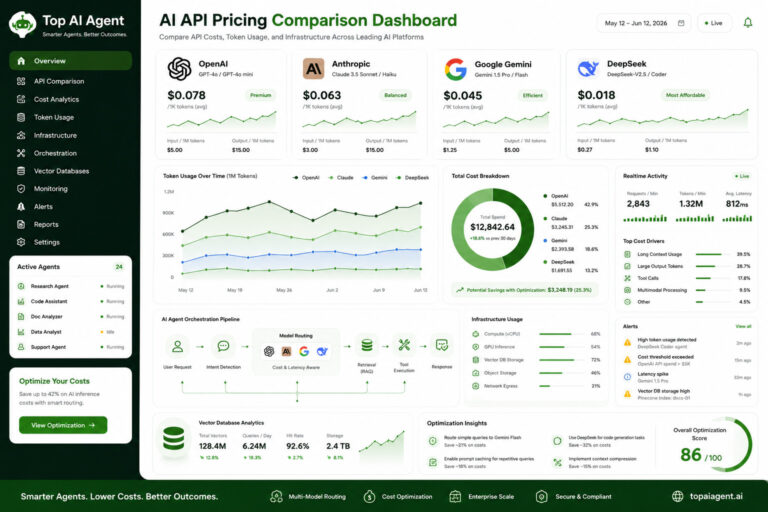

Claude API vs OpenAI

OpenAI and Anthropic are often compared in enterprise AI development.

Claude Strengths

| Area | Advantage |
|---|---|
| Long-context workflows | Strong document handling |
| Conversational consistency | Stable responses |
| Enterprise analysis | Useful for research and compliance |
| Retrieval-heavy systems | Strong contextual understanding |

OpenAI Strengths

| Area | Advantage |
|---|---|
| Broader ecosystem | Larger developer tooling |
| Multimodal support | More mature integrations |
| Tooling infrastructure | Strong API ecosystem |
| Real-time applications | Advanced realtime capabilities |

Many organizations use both providers together in hybrid AI systems.

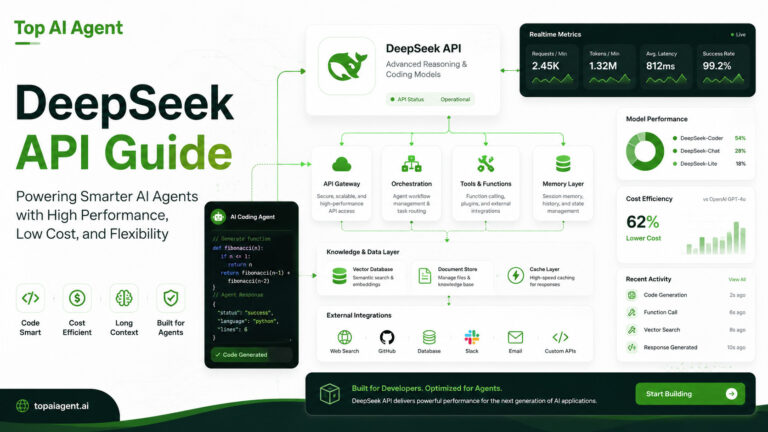

Claude API vs DeepSeek

DeepSeek is commonly evaluated for:

- Coding tasks

- Lower-cost inference

- Open ecosystem flexibility

Claude is often preferred for:

- Enterprise assistants

- Long-form reasoning

- Knowledge-heavy workflows

- Document analysis

The choice depends heavily on:

- Workflow complexity

- Cost sensitivity

- Infrastructure strategy

- Enterprise requirements

Claude API Latency Considerations

Latency becomes increasingly important in AI agents because workflows involve multiple sequential steps, and the delay of each step adds to the total response time.

A typical agent workflow may include:

- Planning

- Retrieval

- Tool execution

- Additional reasoning

- Response generation

Long-context reasoning can increase inference time significantly.

Factors Affecting Latency

| Factor | Impact |
|---|---|
| Context size | Larger prompts increase processing time |
| Model complexity | Larger models generally respond more slowly |
| Retrieval pipelines | Additional orchestration overhead |
| Concurrent workloads | Multiple agents increase demand |
| Streaming support | Improves perceived responsiveness |

Developers often balance:

- Long-context reliability

- Response speed

- Infrastructure costs
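Because agent steps run one after another, total latency is roughly the sum of the individual step times. A small timing helper, sketched below with illustrative names, makes it visible which step dominates; the step functions passed in are stand-ins for real planning, retrieval, and model calls.

```python
import time
from typing import Callable


def run_timed(steps: list[tuple[str, Callable[[], object]]]) -> dict[str, float]:
    """Run steps in order and record how many seconds each one took."""
    timings: dict[str, float] = {}
    for name, fn in steps:
        start = time.perf_counter()
        fn()
        timings[name] = time.perf_counter() - start
    # Sequential execution: the total is the sum of the per-step times.
    timings["total"] = sum(timings.values())
    return timings
```

Measuring each step separately helps decide where to optimize, for example by shrinking the prompt, choosing a faster model, or streaming the final response.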

Claude API for Enterprise AI Systems

Claude has become especially relevant for enterprise AI deployment.

Organizations commonly use Claude-powered agents for:

- Internal knowledge retrieval

- Policy analysis

- Compliance workflows

- Enterprise search

- Research automation

- Documentation assistants

These environments prioritize the following over purely experimental capabilities:

- Accuracy

- Stability

- Context retention

- Governance

- Reliability

Vector Databases and Claude Agents

Vector databases remain essential for scalable AI agent systems.

They allow Claude-powered agents to:

- Store embeddings

- Retrieve semantic context

- Maintain memory

- Search enterprise information efficiently

This is especially important for:

- Long-running workflows

- Persistent assistants

- Organizational knowledge systems

Popular RAG architectures typically combine:

- Claude models

- Vector retrieval

- Orchestration frameworks

- Backend workflow systems

Challenges and Limitations

Despite its strengths, Claude also presents several tradeoffs.

Higher Costs for Large Context Workflows

Long-context reasoning can become expensive at scale.

Smaller Ecosystem Compared to OpenAI

OpenAI still maintains a larger developer ecosystem and broader third-party integrations.

Tooling Complexity

Advanced agent systems still require:

- Orchestration layers

- Validation systems

- Monitoring infrastructure

- Guardrails

Claude alone does not solve these architectural challenges.

Vendor Dependency

As with most hosted AI APIs, enterprises must consider:

- Vendor lock-in

- Governance requirements

- Infrastructure flexibility

Best Use Cases for Claude AI Agents

The Claude API is particularly effective for:

Research Agents

Long-form analysis and synthesis workflows.

Enterprise Knowledge Systems

Internal assistants connected to organizational data.

Legal and Compliance Automation

Document-heavy reasoning workflows.

Retrieval-Augmented AI Systems

Knowledge retrieval and semantic search applications.

Long-Context Copilots

Assistants that maintain extended conversational memory.

Is Anthropic Claude Good for AI Agents?

For many developers and enterprises, Claude has become one of the strongest APIs for:

- Long-context reasoning

- Stable conversational workflows

- Enterprise AI systems

- Retrieval-heavy applications

Its strengths are especially visible in:

- Document analysis

- Research automation

- Knowledge retrieval

- Persistent conversational systems

However, the best AI API depends on:

- Budget

- Infrastructure design

- Workflow complexity

- Deployment strategy

- Latency requirements

Increasingly, organizations are building multi-model AI architectures rather than relying on a single provider.

Final Thoughts

The Anthropic Claude API has established itself as an important platform for enterprise AI agents and long-context reasoning systems.

Its focus on contextual understanding, stable conversational behavior, and retrieval-friendly workflows makes it particularly useful for organizations building knowledge-centric AI systems.

As AI agents evolve into sophisticated operational platforms involving orchestration, memory, retrieval, and autonomous workflows, infrastructure quality and architectural decisions will become increasingly important.

For developers evaluating AI agent APIs in 2026, Claude is one of the major options to consider.

Key Takeaways

- The Claude API is widely used for long-context AI agent workflows.

- Anthropic is particularly strong in enterprise and document-heavy systems.

- Retrieval-augmented generation (RAG) is commonly paired with Claude models.

- Long-context reasoning improves research and knowledge workflows.

- AI agent infrastructure requires orchestration, memory, monitoring, and backend systems.

- Claude is often compared with OpenAI and DeepSeek for enterprise AI deployments.

- Cost optimization becomes critical in large-scale agent systems.

- Multi-model AI architectures are becoming increasingly common.

FAQ

What is the Anthropic Claude API?

The Claude API provides access to Anthropic’s language models for AI agents, enterprise assistants, retrieval systems, and long-context workflows.

Why is Claude popular for AI agents?

Claude is widely used for its long-context reasoning, conversational reliability, and enterprise-friendly workflows.

Does Claude support long-context processing?

Yes. Claude models are commonly used for analyzing large documents, research workflows, and persistent conversational systems.

How does Claude compare to OpenAI?

Claude is often preferred for long-form reasoning and enterprise workflows, while OpenAI offers a broader tooling ecosystem and stronger multimodal infrastructure.

Can Claude be used with vector databases?

Yes. The Claude API is frequently integrated into retrieval-augmented generation (RAG) systems that use vector databases.

What infrastructure is needed for Claude AI agents?

Production systems typically require vector databases, orchestration frameworks, backend systems, monitoring tools, and retrieval pipelines.

Is Claude good for enterprise AI systems?

Yes. Claude is commonly used for enterprise search, policy analysis, compliance workflows, and organizational knowledge assistants.

Is the Claude API expensive?

Costs depend on context length, output size, retrieval workflows, and workflow complexity. Long-context systems can become expensive without optimization.
