A practical developer guide to the DeepSeek API, including model capabilities, pricing considerations, coding workflows, long-context support, and infrastructure strategies for AI agents.

As AI agent development expands beyond basic chatbots into autonomous workflows, developers are increasingly evaluating alternative model providers that offer lower costs, strong reasoning performance, and flexible deployment options.

Among the newer entrants attracting attention in the AI infrastructure ecosystem is DeepSeek.

DeepSeek has gained traction for its coding-focused models, competitive inference pricing, and growing support within open-weight and self-hosted AI ecosystems. For startups, independent developers, and infrastructure teams building AI agents, DeepSeek APIs are becoming a practical alternative to more expensive commercial platforms.

This guide explains how the DeepSeek API works, its major features, model strengths, pricing considerations, AI agent use cases, and implementation best practices.

What Is the DeepSeek API?

The DeepSeek API provides access to large language models optimized for:

- Reasoning

- Coding

- Long-context processing

- AI automation workflows

- Agent-based systems

Developers commonly use DeepSeek APIs for:

- Coding assistants

- Autonomous AI agents

- Research systems

- Workflow automation

- Retrieval-augmented generation (RAG)

- Multi-step reasoning pipelines

DeepSeek has become particularly popular among developers seeking:

- Lower API costs

- Open ecosystem flexibility

- Strong code generation performance

- Self-hosted deployment compatibility

Why Developers Are Using DeepSeek for AI Agents

AI agents often require:

- Continuous inference

- Multi-step planning

- Tool calling

- Long-running workflows

- Retrieval systems

This can create substantial infrastructure costs when using premium frontier models exclusively.

DeepSeek is increasingly evaluated because it offers:

- Competitive pricing

- Efficient coding models

- Reasoning capabilities suitable for automation

- Growing compatibility with open-source frameworks

Teams typically use DeepSeek in one of two ways:

- As a primary agent model

- As a secondary routing model within hybrid AI systems

Core DeepSeek API Features

Coding and Software Engineering Capabilities

DeepSeek models are widely discussed for software engineering tasks.

Common use cases include:

- Code generation

- Debugging

- Refactoring

- Documentation

- Test creation

- AI coding copilots

Many developers compare DeepSeek against:

- OpenAI coding models

- Anthropic Claude

- Open-weight coding models

The API is often used in:

- Developer assistants

- IDE integrations

- Autonomous coding agents

- DevOps automation systems

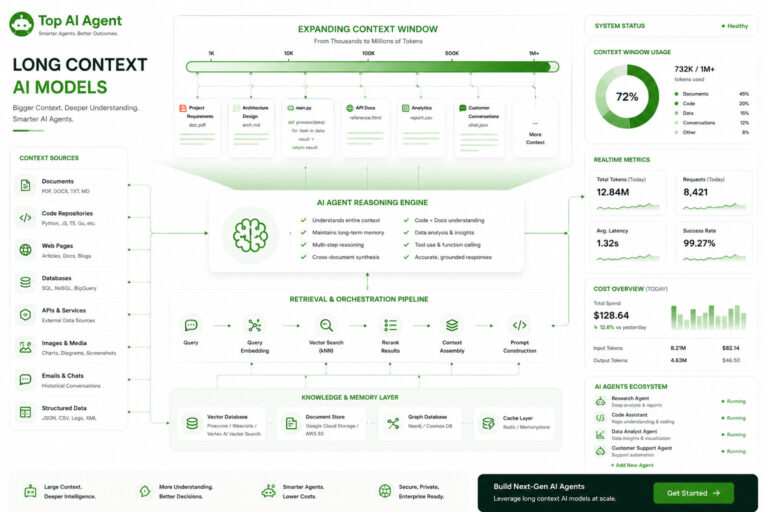

Long Context Support

Long context handling is important for AI agents that work with:

- Large repositories

- Technical documentation

- Multi-step reasoning chains

- Enterprise knowledge bases

DeepSeek models are increasingly evaluated for:

- Large codebase analysis

- Research agents

- Multi-document workflows

- Retrieval-heavy systems

However, developers still commonly pair long-context models with vector retrieval systems to reduce:

- Latency

- Token usage

- Infrastructure costs

Reasoning Workflows

AI agents require more than simple text generation.

Modern autonomous systems must:

- Plan actions

- Evaluate context

- Use tools

- Manage memory

- Coordinate workflows

DeepSeek APIs are often integrated into:

- Agent orchestration frameworks

- Task automation systems

- Research pipelines

- Backend AI services

Some developers use DeepSeek as:

- A planning layer

- A coding specialist

- A lower-cost inference route

API Integration Flexibility

Developers commonly integrate DeepSeek APIs with:

- LangChain

- LlamaIndex

- Vector databases

- Workflow orchestration tools

- AI backend systems

This flexibility is important for teams building custom AI infrastructure stacks rather than relying entirely on managed ecosystems.
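As a minimal sketch of a direct integration, the snippet below builds and sends a chat request using only the Python standard library. It assumes DeepSeek's OpenAI-compatible chat completions endpoint at `https://api.deepseek.com/chat/completions`, the model name `deepseek-chat`, and an environment variable called `DEEPSEEK_API_KEY`; all three are assumptions to verify against the current DeepSeek documentation before use.

```python
import json
import os
import urllib.request

# Assumed values: verify the URL and model name against the current DeepSeek docs.
API_URL = "https://api.deepseek.com/chat/completions"
MODEL = "deepseek-chat"


def build_request(prompt: str, api_key: str) -> urllib.request.Request:
    """Build (but do not send) a chat completion request."""
    payload = {
        "model": MODEL,
        "messages": [{"role": "user", "content": prompt}],
        "stream": False,
    }
    return urllib.request.Request(
        API_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


def ask(prompt: str) -> str:
    """Send the request and return the assistant's reply text."""
    # DEEPSEEK_API_KEY is a hypothetical variable name chosen for this sketch.
    request = build_request(prompt, os.environ["DEEPSEEK_API_KEY"])
    with urllib.request.urlopen(request, timeout=60) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]
```

Separating `build_request` from `ask` keeps the payload easy to inspect and test without a network call. Frameworks such as LangChain or LlamaIndex wrap the same kind of request behind their own client classes.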

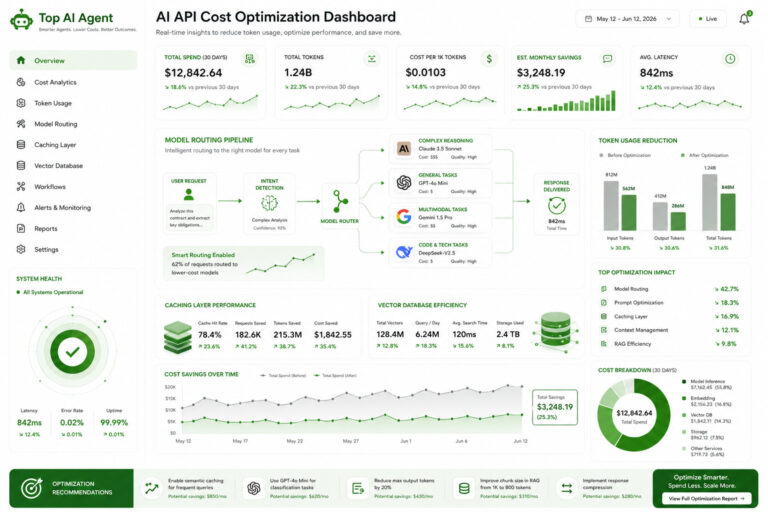

DeepSeek API Pricing

Pricing is one of the biggest reasons developers evaluate DeepSeek.

AI agent systems can generate extremely high token usage because they often involve:

- Recursive reasoning

- Tool execution loops

- Memory retrieval

- Long-context processing

- Multi-agent coordination

Lower-cost inference can significantly reduce operational expenses.

Why Pricing Matters for AI Agents

| Workflow Component | Cost Impact |
|---|---|
| Long prompts | Higher input token usage |
| Large outputs | Increased inference expense |
| Tool retries | Repeated API calls |
| Multi-agent systems | Parallel token consumption |
| Retrieval workflows | Additional context injection |

For startups and experimental products, pricing efficiency can determine whether an AI workflow remains economically sustainable.
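To make the cost drivers in the table concrete, here is a rough estimator for a single agent run. The `Pricing` class, the function name, and every number are hypothetical placeholders, not DeepSeek's actual rates; substitute the per-million-token prices from the provider's pricing page.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pricing:
    """Price per one million tokens, in any currency (placeholder values only)."""
    input_per_million: float
    output_per_million: float


def estimate_run_cost(
    pricing: Pricing,
    steps: int,
    input_tokens_per_step: int,
    output_tokens_per_step: int,
    retry_rate: float = 0.0,
) -> float:
    """Estimate the cost of one agent run.

    retry_rate is the average fraction of steps that are repeated,
    for example 0.2 means one extra call for every five steps.
    """
    calls = steps * (1 + retry_rate)
    input_cost = calls * input_tokens_per_step * pricing.input_per_million / 1_000_000
    output_cost = calls * output_tokens_per_step * pricing.output_per_million / 1_000_000
    return input_cost + output_cost


# Hypothetical prices and workload, chosen only to illustrate the arithmetic.
example = estimate_run_cost(
    Pricing(input_per_million=1.0, output_per_million=2.0),
    steps=10,
    input_tokens_per_step=1_000,
    output_tokens_per_step=500,
)
print(f"estimated cost per run: {example:.4f}")
```

Multiplying the per-run figure by the expected number of runs per day gives a quick first check on whether a workflow fits its budget.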

DeepSeek API vs OpenAI

OpenAI remains the dominant ecosystem for general-purpose AI agents, but DeepSeek offers several practical advantages for certain workloads.

Areas Where DeepSeek Is Often Preferred

| Strength | Why Developers Choose It |
|---|---|
| Lower inference costs | More affordable scaling |
| Coding workflows | Strong performance on software engineering tasks |
| Open ecosystem alignment | Easier hybrid deployment strategies |
| Self-hosted flexibility | Useful for infrastructure teams |

Areas Where OpenAI Still Leads

| Area | Advantage |
|---|---|
| Ecosystem maturity | Larger developer platform |
| Tooling | Stronger managed APIs |
| Enterprise integrations | Broader adoption |
| Multimodal systems | More mature infrastructure |

Many organizations now use hybrid architectures where DeepSeek handles:

- Coding tasks

- Lightweight orchestration

- Background reasoning

OpenAI, meanwhile, handles:

- High-complexity reasoning

- Multimodal workflows

- Premium user-facing interactions

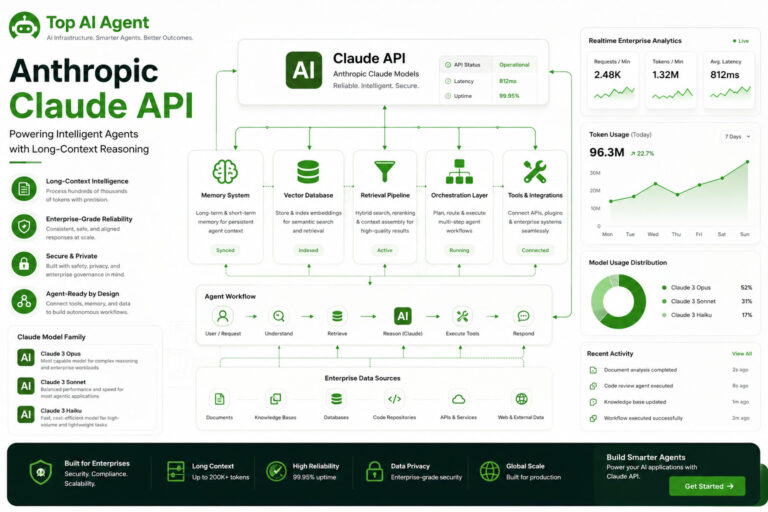

DeepSeek API vs Anthropic Claude

Anthropic is commonly chosen for:

- Long-context reasoning

- Enterprise workflows

- Document-heavy tasks

DeepSeek is frequently evaluated for:

- Cost efficiency

- Coding tasks

- Open infrastructure compatibility

The decision often depends on:

- Workflow type

- Budget

- Infrastructure strategy

- Latency requirements

Using DeepSeek for AI Agent Systems

DeepSeek APIs are increasingly used in production agent workflows.

Common AI Agent Architectures

| Layer | Example Role |
|---|---|
| DeepSeek API | Reasoning and code generation |
| Vector database | Retrieval and memory |
| Orchestration framework | Workflow coordination |
| Backend system | State management |
| Tool execution layer | External actions |

This modular approach lets developers combine several systems rather than rely on a single monolithic platform.
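The layering in the table can be sketched as a small loop in which each layer is a pluggable function. This is an illustrative skeleton, not a framework API: `run_agent`, the decision dictionary shape (`action` and `input` keys), and the `finish` action are all assumptions made for this example.

```python
from typing import Callable, Dict, List

# Hypothetical interfaces for this sketch:
#   model(task, context, history) -> {"action": str, "input": str}
#   retrieve(query) -> list of context strings
#   tools maps an action name to a function taking and returning a string
Model = Callable[[str, List[str], List[str]], Dict[str, str]]
Retriever = Callable[[str], List[str]]
Tool = Callable[[str], str]


def run_agent(
    task: str,
    model: Model,
    retrieve: Retriever,
    tools: Dict[str, Tool],
    max_steps: int = 5,
) -> str:
    """Run a plan/act loop until the model finishes or the step limit is hit."""
    history: List[str] = []  # state the backend would normally persist
    for _ in range(max_steps):
        context = retrieve(task)                   # vector database layer
        decision = model(task, context, history)   # model API layer
        if decision["action"] == "finish":
            return decision["input"]
        tool = tools[decision["action"]]           # tool execution layer
        result = tool(decision["input"])
        history.append(f"{decision['action']} -> {result}")
    return "stopped: step limit reached"
```

Because each layer is passed in as a function, the model call can be swapped between providers without touching retrieval or tool code.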

Vector Databases and DeepSeek Agents

Vector databases are essential for retrieval-based AI agents.

They help agents:

- Store embeddings

- Retrieve memory

- Search enterprise data

- Maintain contextual awareness

DeepSeek-powered agents are often paired with:

- RAG pipelines

- Knowledge systems

- Semantic search infrastructure

- Long-term memory frameworks

This reduces the need to repeatedly send large prompts directly into the model.
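The idea can be illustrated with a toy in-memory store that ranks stored text by cosine similarity and returns only the top matches for the prompt. `InMemoryVectorStore` is a hypothetical stand-in for a real vector database, and the two-dimensional embeddings are made up; in practice the vectors would come from an embedding model.

```python
import math
from typing import List, Tuple


def cosine(a: List[float], b: List[float]) -> float:
    """Cosine similarity between two vectors; 0.0 if either has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


class InMemoryVectorStore:
    """Toy store for illustration; a real system would use a vector database."""

    def __init__(self) -> None:
        self._items: List[Tuple[str, List[float]]] = []

    def add(self, text: str, embedding: List[float]) -> None:
        self._items.append((text, embedding))

    def search(self, query_embedding: List[float], k: int = 2) -> List[str]:
        """Return the k stored texts most similar to the query embedding."""
        ranked = sorted(
            self._items,
            key=lambda item: cosine(query_embedding, item[1]),
            reverse=True,
        )
        return [text for text, _ in ranked[:k]]


store = InMemoryVectorStore()
store.add("Deployment runbook", [1.0, 0.0])   # made-up embeddings
store.add("Billing policy", [0.0, 1.0])
top = store.search([0.9, 0.1], k=1)  # only this text is added to the prompt
```

Only the retrieved snippets are injected into the model call, which is how retrieval keeps prompt size and token usage down.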

DeepSeek and Self-Hosted AI Infrastructure

One reason DeepSeek receives attention is its alignment with self-hosted and open-weight AI ecosystems.

Many organizations are exploring:

- Hybrid deployments

- Private inference systems

- Local GPU infrastructure

- Air-gapped enterprise AI environments

Benefits of Self-Hosted AI Agents

- Better data control

- Reduced long-term costs

- Infrastructure customization

- Lower vendor dependency

Challenges

- GPU management

- Scaling complexity

- Monitoring overhead

- Inference optimization requirements

For many teams, DeepSeek becomes part of a broader infrastructure strategy rather than a standalone API decision.

DeepSeek API Latency Considerations

Latency becomes increasingly important in autonomous AI systems because agents often execute multiple sequential tasks.

An AI agent may:

- Plan

- Retrieve memory

- Execute tools

- Analyze responses

- Continue reasoning

Even small delays can compound significantly.
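A quick calculation shows the compounding effect. The per-step latencies below are hypothetical numbers picked for illustration, not measurements of any DeepSeek model.

```python
from typing import List


def sequential_latency(step_latencies_s: List[float], iterations: int) -> float:
    """Total wall-clock time when every step waits for the previous one."""
    return sum(step_latencies_s) * iterations


# Hypothetical latencies in seconds: plan, retrieve, tool call, analyze, continue.
steps = [0.8, 0.2, 1.5, 0.6, 0.9]
baseline = sequential_latency(steps, iterations=6)

# Shaving 100 ms off each step saves 0.1 s * 5 steps * 6 iterations overall.
faster = sequential_latency([s - 0.1 for s in steps], iterations=6)
print(f"baseline: {baseline:.1f}s, optimized: {faster:.1f}s")
```

With these assumed numbers, a 100 ms saving per step removes about three seconds from a run of roughly 24 seconds, which is why per-call latency matters more for agents than for single-turn chat.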

Factors Affecting DeepSeek API Latency

| Factor | Impact |
|---|---|
| Model size | Larger reasoning models are slower |
| Context length | Bigger prompts increase latency |
| Infrastructure provider | Hosting quality varies |
| Concurrent workloads | Parallel agents increase demand |
| Streaming support | Improves responsiveness |

Developers often optimize latency using:

- Smaller orchestration models

- Retrieval systems

- Prompt compression

- Inference caching
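Of the techniques above, inference caching is the simplest to sketch: identical requests are answered from a local store instead of a new API call. `ResponseCache` and the `call_model` callback are hypothetical names for this example, and the approach assumes responses are deterministic enough to reuse (for instance, a temperature of zero).

```python
import hashlib
import json
from typing import Callable, Dict, List

Messages = List[Dict[str, str]]


class ResponseCache:
    """Wraps a model call and reuses results for identical requests."""

    def __init__(self, call_model: Callable[[str, Messages], str]) -> None:
        self._call_model = call_model
        self._store: Dict[str, str] = {}
        self.hits = 0

    @staticmethod
    def _key(model: str, messages: Messages) -> str:
        # Hash a canonical JSON form so equal requests map to the same key.
        raw = json.dumps({"model": model, "messages": messages}, sort_keys=True)
        return hashlib.sha256(raw.encode("utf-8")).hexdigest()

    def complete(self, model: str, messages: Messages) -> str:
        key = self._key(model, messages)
        if key in self._store:
            self.hits += 1
            return self._store[key]
        result = self._call_model(model, messages)
        self._store[key] = result
        return result
```

A production cache would also need an eviction policy and an expiry time so that stale answers are not served indefinitely.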

DeepSeek API Best Practices

Use Model Routing

Not every task requires a large reasoning model.

Many systems route:

- Simple tasks → smaller models

- Complex reasoning → larger models
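A routing rule can start as a simple heuristic. The sketch below is one possible approach, not a recommended policy: the keyword list, the length threshold, and the model names `small-model` and `large-model` are all placeholders to replace with real model identifiers and a better classifier.

```python
# Hypothetical keywords that suggest a task needs deeper reasoning.
COMPLEX_HINTS = ("plan", "debug", "refactor", "prove", "architecture")


def choose_model(
    task: str,
    small: str = "small-model",
    large: str = "large-model",
    length_threshold: int = 400,
) -> str:
    """Route long or reasoning-heavy tasks to the larger model."""
    text = task.lower()
    if len(text) > length_threshold or any(hint in text for hint in COMPLEX_HINTS):
        return large
    return small


route_a = choose_model("Summarize this sentence.")
route_b = choose_model("Debug this failing integration test")
```

Here `route_a` resolves to the small model and `route_b` to the large one. Many teams later replace the keyword check with a cheap classifier call, but the routing interface stays the same.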

Combine with Retrieval Systems

Retrieval-augmented generation (RAG) helps reduce:

- Token usage

- Latency

- Context overload

Implement Guardrails

AI agents should include:

- Validation systems

- Retry logic

- Permission controls

- Output filtering
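The sketch below combines three of these guardrails: output validation, retry logic, and a tool allow-list as a basic permission control. The JSON output shape (a `tool` key), the `ALLOWED_TOOLS` set, and the function names are assumptions made for this example.

```python
import json
import time
from typing import Callable, Dict

# Hypothetical allow-list: the only tools this agent may invoke.
ALLOWED_TOOLS = {"search", "read_file"}


def validate(raw: str) -> Dict[str, str]:
    """Parse model output and enforce the tool allow-list."""
    data = json.loads(raw)  # raises a ValueError subclass on malformed JSON
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    if data.get("tool") not in ALLOWED_TOOLS:
        raise PermissionError(f"tool not permitted: {data.get('tool')!r}")
    return data


def call_with_guardrails(
    call_model: Callable[[str], str],
    prompt: str,
    max_attempts: int = 3,
    backoff_s: float = 0.0,
) -> Dict[str, str]:
    """Retry malformed outputs; let permission violations fail immediately."""
    last_error = None
    for attempt in range(max_attempts):
        try:
            return validate(call_model(prompt))
        except ValueError as error:
            last_error = error
            time.sleep(backoff_s * (2 ** attempt))  # exponential backoff
    raise RuntimeError("model output failed validation") from last_error
```

Malformed output is retried because it is often transient, while a disallowed tool request raises `PermissionError` right away so that it is surfaced rather than silently retried.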

Monitor Infrastructure Costs

Production AI agents can scale rapidly.

Track:

- Token usage

- API requests

- Latency

- Failure rates

- Tool execution loops
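A lightweight in-process tracker for these metrics might look like the following. `AgentMetrics` is a hypothetical helper for illustration; production systems would usually export the same counters to a monitoring stack.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class AgentMetrics:
    """Accumulates per-request usage so costs and failures can be tracked."""
    requests: int = 0
    failures: int = 0
    input_tokens: int = 0
    output_tokens: int = 0
    tool_loops: int = 0
    latencies_s: List[float] = field(default_factory=list)

    def record(
        self,
        input_tokens: int,
        output_tokens: int,
        latency_s: float,
        ok: bool = True,
        tool_loops: int = 0,
    ) -> None:
        self.requests += 1
        self.failures += 0 if ok else 1
        self.input_tokens += input_tokens
        self.output_tokens += output_tokens
        self.tool_loops += tool_loops
        self.latencies_s.append(latency_s)

    def failure_rate(self) -> float:
        return self.failures / self.requests if self.requests else 0.0

    def mean_latency_s(self) -> float:
        return sum(self.latencies_s) / len(self.latencies_s) if self.latencies_s else 0.0
```

Calling `record` once per API request gives running totals that can be compared against a budget or used to trigger alerts when the failure rate climbs.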

Challenges and Limitations

Despite growing popularity, DeepSeek APIs also present tradeoffs.

Ecosystem Maturity

The surrounding tooling ecosystem is still smaller than OpenAI’s broader platform.

Enterprise Readiness

Some organizations may require capabilities that are more mature in competing ecosystems:

- Compliance tooling

- Governance controls

- Enterprise SLAs

Infrastructure Variability

Performance can vary depending on:

- Hosting provider

- Deployment method

- Regional infrastructure quality

Rapid Market Changes

The AI infrastructure ecosystem is evolving quickly, making long-term platform selection challenging.

Is DeepSeek a Good API for AI Agents?

For many developers, DeepSeek offers a strong balance between:

- Cost efficiency

- Coding performance

- Infrastructure flexibility

- AI agent compatibility

It is especially useful for:

- Coding assistants

- Experimental agent systems

- Self-hosted AI stacks

- Budget-sensitive deployments

However, the best API depends on:

- Workflow complexity

- Enterprise requirements

- Latency tolerance

- Infrastructure strategy

- Security needs

Increasingly, organizations are moving toward multi-model architectures rather than relying on a single AI provider.

Final Thoughts

The DeepSeek API is becoming an important part of the broader AI agent infrastructure ecosystem.

Its lower-cost inference, coding-focused capabilities, and compatibility with flexible deployment strategies make it increasingly attractive for developers building scalable AI systems.

As AI agents evolve into complex orchestration platforms involving memory, retrieval, workflow automation, and backend coordination, infrastructure decisions will matter just as much as raw model performance.

For developers evaluating AI agent APIs in 2026, DeepSeek is now part of that conversation.

Key Takeaways

- DeepSeek APIs are increasingly used for AI agents and coding workflows.

- Lower inference pricing makes DeepSeek attractive for scalable automation systems.

- DeepSeek is commonly used for coding assistants, orchestration workflows, and research agents.

- Long-context support is useful for large repositories and enterprise workflows.

- Vector databases remain important for memory and retrieval systems.

- Hybrid multi-model architectures are becoming increasingly common.

- Self-hosted AI infrastructure is a growing use case for DeepSeek-powered systems.

- Production AI agents require orchestration, monitoring, and infrastructure optimization.

FAQ

What is the DeepSeek API?

The DeepSeek API provides access to AI models focused on reasoning, coding, automation workflows, and AI agent systems.

Is DeepSeek good for coding agents?

Yes. DeepSeek is widely evaluated for code generation, debugging, documentation, and autonomous coding workflows.

How does DeepSeek compare to OpenAI?

DeepSeek is often viewed as more cost-efficient for coding and automation workloads, while OpenAI offers a larger ecosystem and broader tooling support.

Can DeepSeek be used for AI agents?

Yes. Developers commonly use DeepSeek APIs for autonomous workflows, retrieval systems, orchestration pipelines, and AI automation.

Does DeepSeek support long-context workflows?

DeepSeek models are increasingly used for long-context tasks such as repository analysis, document workflows, and research systems.

What infrastructure is needed for DeepSeek agents?

Most production systems require orchestration frameworks, vector databases, backend services, monitoring tools, and retrieval pipelines.

Are DeepSeek APIs suitable for enterprises?

That depends on compliance, governance, infrastructure requirements, and deployment strategy. Some enterprises may require additional operational tooling.

Can DeepSeek be used in self-hosted AI systems?

Yes. DeepSeek is frequently discussed within open-weight and self-hosted AI infrastructure ecosystems.