A detailed look at the leading AI agent APIs, model platforms, infrastructure stacks, and backend systems developers are using to build modern autonomous AI workflows.

Artificial intelligence agents are quickly moving from experimental projects to production software. Companies are now building AI systems that can reason across long conversations, call tools, retrieve external data, automate workflows, and coordinate multi-step tasks with minimal human intervention.

At the center of this shift is a growing ecosystem of AI agent APIs and platforms. These tools provide the underlying models, orchestration layers, inference systems, vector databases, and infrastructure required to build scalable AI agents.

This guide explains the major AI agent APIs and platforms available in 2026, how they differ, and what developers should evaluate before choosing a stack.


What Are AI Agent APIs and Platforms?

AI agent APIs are interfaces that allow developers to connect applications to large language models (LLMs), reasoning systems, embeddings, retrieval systems, and multimodal AI capabilities.

AI agent platforms go beyond model access. They typically include:

- Agent orchestration frameworks

- Tool calling systems

- Memory and retrieval infrastructure

- Workflow automation

- Long-context processing

- Multi-agent coordination

- Observability and monitoring

- Deployment infrastructure

Together, these systems form the operational layer behind AI assistants, coding agents, research agents, customer support agents, and autonomous workflow systems.


Why AI Agent Infrastructure Matters

Building an AI chatbot is relatively straightforward. Building a reliable AI agent system is significantly more complex.

Modern agents require:

| Requirement | Why It Matters |
|---|---|
| Long context windows | Needed for memory and large workflows |
| Tool calling | Allows agents to interact with APIs and software |
| Fast inference | Reduces latency during autonomous tasks |
| Vector retrieval | Enables contextual memory and RAG |
| Cost optimization | Agent loops can become expensive quickly |
| Multi-model orchestration | Different models are better at different tasks |
| Observability | Important for debugging and reliability |

This is why AI infrastructure decisions are becoming as important as model selection itself.
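To make the tool calling requirement concrete, here is a minimal sketch of how an agent backend might execute a tool call requested by a model. The registry, the `get_order_status` function, its data, and the JSON shape of the call are all hypothetical; real providers each define their own tool-call format.

```python
import json
from typing import Any, Callable, Dict

# Hypothetical tool registry: maps a tool name to a plain Python function.
TOOLS: Dict[str, Callable[..., Any]] = {}


def tool(fn: Callable[..., Any]) -> Callable[..., Any]:
    """Register a function so the agent loop can call it by name."""
    TOOLS[fn.__name__] = fn
    return fn


@tool
def get_order_status(order_id: str) -> str:
    # Stand-in for a real API call; the data here is made up.
    fake_db = {"A100": "shipped", "A101": "processing"}
    return fake_db.get(order_id, "unknown")


def dispatch(tool_call_json: str) -> str:
    """Run one model-requested tool call and return a JSON result string."""
    call = json.loads(tool_call_json)
    name = call.get("name")
    args = call.get("arguments", {})
    if name not in TOOLS:
        # Report the problem back to the model instead of crashing the loop.
        return json.dumps({"error": f"unknown tool: {name}"})
    try:
        return json.dumps({"result": TOOLS[name](**args)})
    except TypeError as exc:
        # Raised when the model supplies wrong or missing arguments.
        return json.dumps({"error": str(exc)})


print(dispatch('{"name": "get_order_status", "arguments": {"order_id": "A100"}}'))
```

Returning errors as data rather than raising lets the model see what went wrong and try again, which is one common design choice among several.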

Best AI Agent APIs & Platforms in 2026

OpenAI API

OpenAI remains one of the most widely adopted platforms for AI agent development.

Its ecosystem includes:

- GPT models

- Function calling

- Structured outputs

- Retrieval integrations

- Realtime APIs

- Multimodal processing

- Agent orchestration tooling

OpenAI models are commonly used for:

- Coding agents

- Enterprise copilots

- Research assistants

- Workflow automation

- Browser agents

Strengths

- Strong reasoning performance

- Mature developer ecosystem

- Broad third-party integrations

- Reliable documentation

- Good multimodal support

Limitations

- Higher API costs at scale

- Rate limits for some tiers

- Closed-source ecosystem

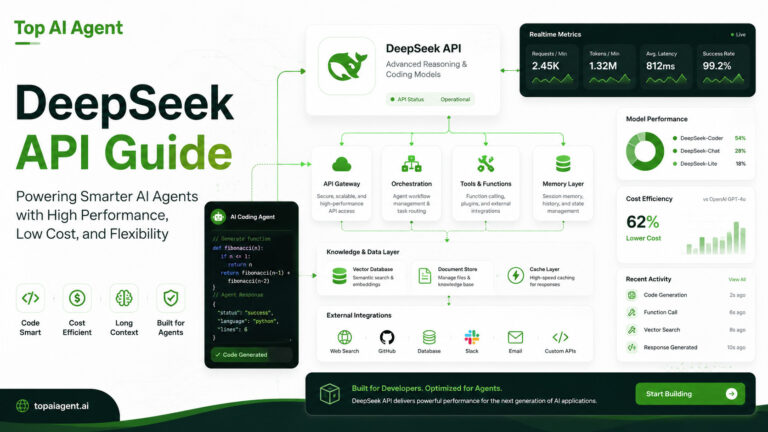

DeepSeek API

DeepSeek has become increasingly popular among developers seeking lower-cost reasoning models and coding-focused AI systems.

DeepSeek models are often evaluated for:

- Code generation

- Agentic reasoning

- Long-context workflows

- Budget-sensitive deployments

Why Developers Use It

- Competitive pricing

- Strong coding capabilities

- Open-weight ecosystem support

- Suitable for self-hosted deployments

Tradeoffs

- Smaller ecosystem than OpenAI

- Infrastructure maturity varies by provider

- Enterprise governance features are still evolving

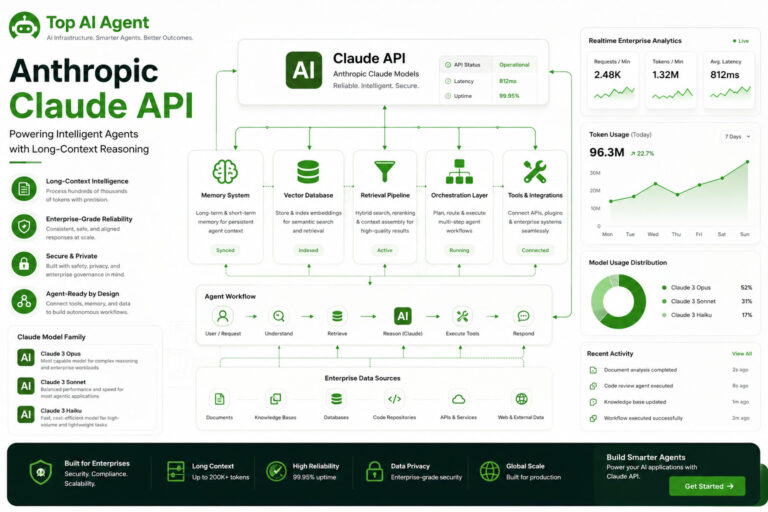

Anthropic Claude API

Anthropic positions Claude as a model family optimized for reasoning, long-context understanding, and enterprise reliability.

Claude models are commonly used in:

- Document analysis

- Research workflows

- Enterprise assistants

- Legal and compliance systems

- Knowledge-heavy AI agents

Key Advantages

- Large context windows

- Strong instruction following

- Reliable conversational behavior

- Useful for long-form workflows

Considerations

- Tool ecosystems are still expanding

- Some developers report slower iteration speed compared to competitors

Google AI Agent Platform

Google continues expanding its AI infrastructure through Gemini models, Vertex AI, and agent orchestration services.

Google’s ecosystem is especially relevant for organizations already using:

- Google Cloud

- Workspace

- BigQuery

- Enterprise data pipelines

Typical Use Cases

- Enterprise search agents

- Internal knowledge systems

- Workflow automation

- Multimodal applications

Benefits

- Strong cloud integration

- Mature enterprise tooling

- Advanced multimodal capabilities

Challenges

- Platform complexity for smaller teams

- Rapid product changes can create confusion

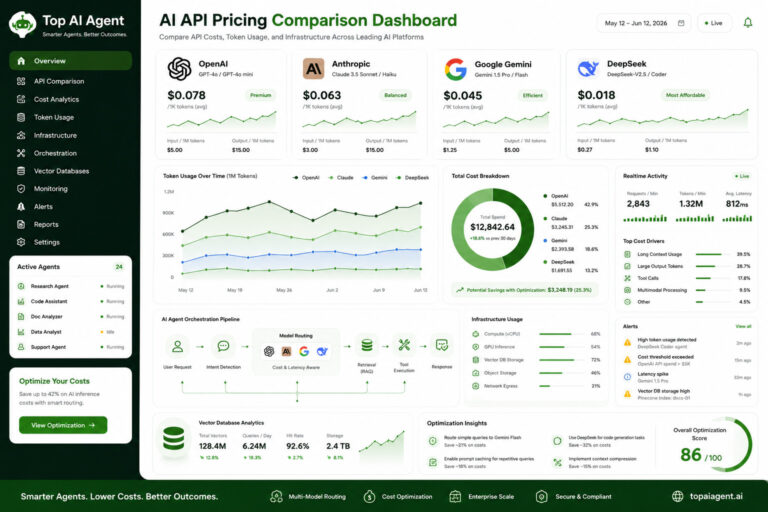

API Pricing Comparison

Pricing remains one of the biggest operational concerns for AI agent systems.

Agent workflows often generate:

- Multiple API calls

- Recursive reasoning loops

- Large context retrieval

- Tool execution chains

Each of these steps consumes tokens, so a single agent run can use many times the tokens of a single chat request.

General Pricing Trends

| Provider | Typical Strength | Relative Cost Position |
|---|---|---|
| OpenAI | General-purpose reasoning | Higher |
| Anthropic | Long-context workflows | Medium to High |
| DeepSeek | Coding and affordable inference | Lower |
| Google | Enterprise integration | Variable |

Real-world costs depend heavily on:

- Context window size

- Input/output token ratios

- Tool calling frequency

- Agent retry loops

- Streaming behavior
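A rough way to reason about these factors is to estimate the cost of a single agent run from step count, tokens per step, and retry rate. The sketch below uses placeholder prices that are assumptions for illustration only, not real provider rates; the `Pricing` class and `estimate_run_cost` function are hypothetical names.

```python
from dataclasses import dataclass


@dataclass
class Pricing:
    # Placeholder prices in USD per million tokens; not real provider rates.
    input_per_million: float
    output_per_million: float


def estimate_run_cost(
    pricing: Pricing,
    steps: int,
    input_tokens_per_step: int,
    output_tokens_per_step: int,
    retry_rate: float = 0.0,
) -> float:
    """Estimate the USD cost of one agent run.

    retry_rate is the expected fraction of steps that get repeated.
    """
    effective_steps = steps * (1.0 + retry_rate)
    input_cost = effective_steps * input_tokens_per_step * pricing.input_per_million / 1_000_000
    output_cost = effective_steps * output_tokens_per_step * pricing.output_per_million / 1_000_000
    return input_cost + output_cost


example = Pricing(input_per_million=2.0, output_per_million=8.0)
cost = estimate_run_cost(
    example,
    steps=10,
    input_tokens_per_step=6000,
    output_tokens_per_step=500,
    retry_rate=0.2,
)
print(f"${cost:.4f} per run")
```

With these made-up numbers, input tokens account for about three quarters of the total, which is typical of agent loops that resend a growing context on every step.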

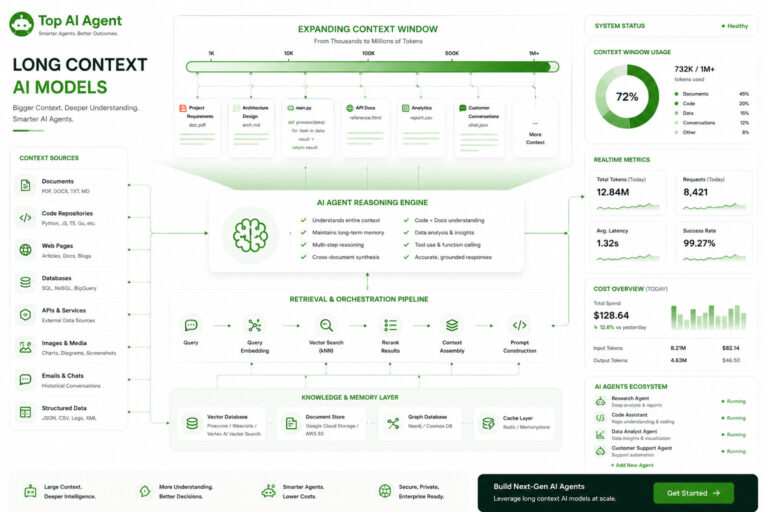

Long Context AI Models

Long-context processing has become a core requirement for AI agents.

Agents increasingly need to:

- Analyze large documents

- Maintain memory across sessions

- Process codebases

- Handle multi-step reasoning chains

Models with extended context windows are particularly useful for:

- Legal analysis

- Software engineering agents

- Research automation

- Enterprise search systems

However, larger context windows also introduce:

- Higher latency

- Increased cost

- Retrieval inefficiencies

- Context dilution problems

This is why many teams combine long-context models with retrieval systems instead of relying entirely on large prompts.
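One simple way to combine the two approaches is to retrieve candidate passages first and then pack only the most relevant ones into a fixed token budget. The following is a minimal sketch under stated assumptions: relevance scores are given as input, and token counts are approximated by word count, which a real system would replace with the model's tokenizer.

```python
from typing import List, Tuple


def approx_tokens(text: str) -> int:
    # Crude assumption: one token per whitespace-separated word.
    return len(text.split())


def pack_context(scored_chunks: List[Tuple[float, str]], budget: int) -> List[str]:
    """Greedily keep the highest-scoring chunks that fit in the token budget."""
    selected: List[str] = []
    used = 0
    for _score, chunk in sorted(scored_chunks, key=lambda pair: pair[0], reverse=True):
        cost = approx_tokens(chunk)
        if used + cost <= budget:
            selected.append(chunk)
            used += cost
    return selected


chunks = [
    (0.91, "Refund requests must be filed within 30 days of purchase."),
    (0.40, "Our office is closed on public holidays."),
    (0.85, "Refunds are issued to the original payment method."),
]
print(pack_context(chunks, budget=20))
```

Here the two refund passages fit the budget and the low-scoring passage is dropped, which keeps the prompt short and reduces context dilution.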

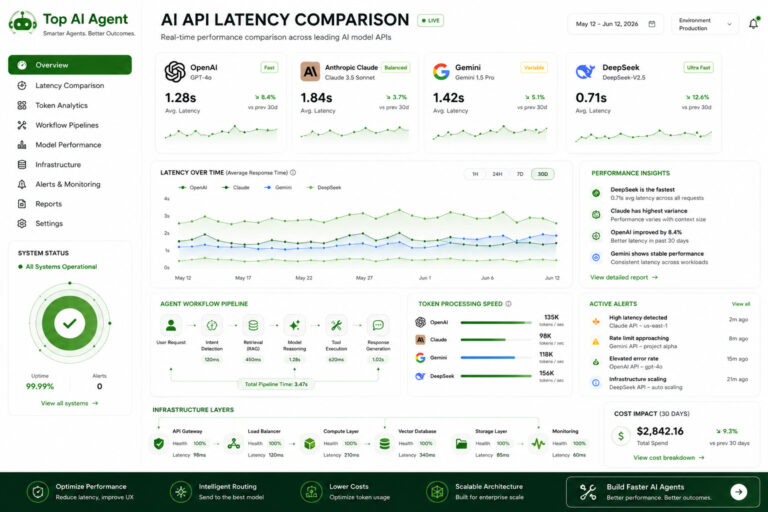

API Latency Comparison

Latency directly impacts user experience and autonomous task execution.

For AI agents, delays compound quickly because workflows may involve:

- Planning

- Tool selection

- API execution

- Retrieval

- Follow-up reasoning

Because these steps usually run in sequence and repeat on every iteration, even a small delay per step adds up to seconds over a full run.
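The compounding effect is easy to see with a little arithmetic. The per-step latencies below are hypothetical values chosen for illustration, not measurements from any provider.

```python
from typing import Dict

# Hypothetical per-step latencies in seconds for one agent iteration.
STEP_LATENCY_S: Dict[str, float] = {
    "planning": 0.8,
    "tool_selection": 0.3,
    "api_execution": 0.5,
    "retrieval": 0.2,
    "follow_up_reasoning": 0.9,
}


def total_latency(step_latency: Dict[str, float], iterations: int, extra_per_step: float = 0.0) -> float:
    """Total wall-clock time when every step runs sequentially in each iteration."""
    per_iteration = sum(latency + extra_per_step for latency in step_latency.values())
    return per_iteration * iterations


baseline = total_latency(STEP_LATENCY_S, iterations=5)
slower = total_latency(STEP_LATENCY_S, iterations=5, extra_per_step=0.1)
print(f"baseline: {baseline:.1f}s, with +100 ms per step: {slower:.1f}s")
```

In this example, an extra 100 ms per step adds 2.5 seconds to a five-iteration run, because the delay is paid 25 times.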

Factors Affecting Latency

| Factor | Impact |
|---|---|
| Model size | Larger models are slower |
| Context length | Longer prompts increase inference time |
| Streaming support | Improves perceived responsiveness |
| Infrastructure region | Geographic distance matters |
| Concurrent agent execution | Can create bottlenecks |

Developers often balance:

- Fast smaller models for orchestration

- Larger reasoning models for critical tasks
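This balance is often implemented as a small routing function in front of the model calls. The sketch below is one possible rule-based version; the model names, task categories, and the 8,000-token threshold are all assumptions, not recommendations from any provider.

```python
# Hypothetical model identifiers; substitute the models your provider offers.
SMALL_MODEL = "small-fast-model"
LARGE_MODEL = "large-reasoning-model"

# Assumed set of task kinds that a smaller model handles well enough.
ROUTINE_TASKS = {"classify", "route", "extract"}


def choose_model(task_kind: str, prompt_tokens: int, small_context_limit: int = 8000) -> str:
    """Send routine, short tasks to the small model and everything else to the large one."""
    if task_kind in ROUTINE_TASKS and prompt_tokens <= small_context_limit:
        return SMALL_MODEL
    return LARGE_MODEL


print(choose_model("route", prompt_tokens=400))
print(choose_model("plan", prompt_tokens=400))
print(choose_model("extract", prompt_tokens=50_000))
```

Teams that outgrow fixed rules sometimes replace them with a learned classifier, but a rule table is usually easier to debug first.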

AI Infrastructure for Agents

AI agents require more than just model APIs.

A modern agent stack typically includes:

| Infrastructure Layer | Purpose |
|---|---|
| LLM APIs | Core reasoning |
| Vector database | Retrieval and memory |
| Orchestration framework | Workflow coordination |
| Inference layer | Model execution |
| Observability tools | Monitoring and debugging |
| Tool execution layer | External actions |

Popular infrastructure choices include:

- Vector databases

- GPU inference servers

- Retrieval frameworks

- Agent orchestration SDKs

- Cloud deployment systems

Cloud vs Local AI Agents

One of the biggest architectural decisions is whether to run agents in the cloud or locally.

Cloud-Based AI Agents

Advantages

- Easier scaling

- Faster deployment

- Access to frontier models

- Managed infrastructure

Disadvantages

- Ongoing API costs

- Data governance concerns

- Vendor lock-in risks

Self-Hosted AI Agents

Self-hosted agents are becoming more common among:

- Enterprises

- Privacy-focused teams

- Open-source developers

- Edge AI projects

Advantages

- Full control

- Potentially lower long-term inference costs at high utilization

- Custom fine-tuning

- Data privacy

Challenges

- GPU infrastructure management

- Optimization complexity

- Reliability engineering

- Scaling overhead

Vector Databases for AI Agents

Vector databases are essential for retrieval-augmented generation (RAG) systems.

They allow AI agents to:

- Store embeddings

- Retrieve contextual memory

- Search semantic information

- Access external knowledge efficiently

Common use cases include:

- Enterprise search

- Agent memory systems

- Knowledge retrieval

- Multi-document reasoning

Popular vector database platforms often focus on:

- Fast retrieval speed

- Metadata filtering

- Hybrid search

- Horizontal scalability
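The core operation behind these features is nearest-neighbor search over embeddings, optionally narrowed by metadata. The toy in-memory store below illustrates the idea with hand-written three-dimensional vectors; the `TinyVectorStore` class is hypothetical, and a production system would use a real embedding model and an indexed database rather than a linear scan.

```python
import math
from typing import Dict, List, Optional, Tuple

Vector = List[float]


def cosine_similarity(a: Vector, b: Vector) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


class TinyVectorStore:
    """Toy store: linear scan with cosine similarity and exact-match metadata filters."""

    def __init__(self) -> None:
        self._items: List[Tuple[str, Vector, Dict[str, str]]] = []

    def add(self, doc_id: str, vector: Vector, metadata: Dict[str, str]) -> None:
        self._items.append((doc_id, vector, metadata))

    def search(
        self, query: Vector, top_k: int = 3, where: Optional[Dict[str, str]] = None
    ) -> List[Tuple[str, float]]:
        # Apply the metadata filter first, then rank the survivors by similarity.
        candidates = [
            (doc_id, cosine_similarity(query, vector))
            for doc_id, vector, metadata in self._items
            if not where or all(metadata.get(k) == v for k, v in where.items())
        ]
        candidates.sort(key=lambda pair: pair[1], reverse=True)
        return candidates[:top_k]


store = TinyVectorStore()
store.add("refund-policy", [0.9, 0.1, 0.0], {"source": "handbook"})
store.add("holiday-hours", [0.0, 1.0, 0.0], {"source": "handbook"})
store.add("refund-faq", [0.8, 0.2, 0.1], {"source": "wiki"})

query = [1.0, 0.0, 0.0]  # made-up embedding for a refund-related question
print(store.search(query, top_k=2))
print(store.search(query, top_k=2, where={"source": "wiki"}))
```

The first search returns the two refund documents ranked by similarity; the second returns only the wiki entry because the filter removes the handbook documents before ranking.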

AI Inference Optimization

Inference optimization is increasingly important as AI agent usage scales.

Optimization strategies include:

- Quantization

- Model distillation

- Caching

- Dynamic routing

- Speculative decoding

- Token pruning

These techniques help reduce:

- API costs

- GPU usage

- Latency

- Infrastructure overhead

For production agent systems, optimization often matters more than raw benchmark performance.
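Of the strategies listed above, caching is usually the simplest to adopt. The sketch below shows an exact-match response cache keyed on model name and prompt; the `ResponseCache` class and the `fake_model` stand-in are hypothetical, and exact-match caching is only appropriate when repeated identical requests are expected to produce the same acceptable answer.

```python
import hashlib
import json
from typing import Callable, Dict


class ResponseCache:
    """Exact-match cache for model responses, keyed on model name and prompt."""

    def __init__(self) -> None:
        self._store: Dict[str, str] = {}
        self.hits = 0
        self.misses = 0

    @staticmethod
    def _key(model: str, prompt: str) -> str:
        payload = json.dumps({"model": model, "prompt": prompt}, sort_keys=True)
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()

    def get_or_call(self, model: str, prompt: str, call_model: Callable[[str, str], str]) -> str:
        key = self._key(model, prompt)
        if key in self._store:
            self.hits += 1
            return self._store[key]
        self.misses += 1
        result = call_model(model, prompt)  # only pay for the call on a miss
        self._store[key] = result
        return result


api_calls = {"count": 0}


def fake_model(model: str, prompt: str) -> str:
    # Stand-in for a paid API call; counts how often it is invoked.
    api_calls["count"] += 1
    return f"[{model}] answer to: {prompt}"


cache = ResponseCache()
first = cache.get_or_call("demo-model", "What is RAG?", fake_model)
second = cache.get_or_call("demo-model", "What is RAG?", fake_model)
print(cache.hits, cache.misses, api_calls["count"])
```

The second identical request is served from memory, so the stand-in model is invoked only once.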

AI Agent Backend Systems

Backend architecture determines whether AI agents remain reliable under real-world workloads.

Modern agent backend systems typically include:

- Task queues

- Workflow orchestration

- Retry systems

- Memory persistence

- Session management

- Monitoring pipelines

Without strong backend engineering, agents often fail due to:

- Hallucinations

- Infinite loops

- Context overflow

- Tool execution errors

- State inconsistencies

This is why many organizations now treat AI agents as distributed systems problems rather than simple chatbot applications.
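Two of the failure modes above, infinite loops and transient tool errors, can be contained with a step limit and a retry wrapper. The following is a minimal sketch; `run_agent`, `call_with_retry`, and the `flaky_step` function are hypothetical names, and a real backend would add logging, persistence, and more selective exception handling.

```python
import time
from typing import Any, Callable, Dict

State = Dict[str, Any]


class StepLimitExceeded(RuntimeError):
    """Raised when the agent does not finish within the allowed number of steps."""


def call_with_retry(fn: Callable[[], State], attempts: int = 3, base_delay_s: float = 0.0) -> State:
    """Call fn, retrying with exponential backoff; re-raise the last error if all attempts fail."""
    for attempt in range(attempts):
        try:
            return fn()
        except Exception:
            if attempt == attempts - 1:
                raise
            time.sleep(base_delay_s * (2 ** attempt))
    raise ValueError("attempts must be at least 1")


def run_agent(step_fn: Callable[[State], State], max_steps: int = 10) -> State:
    """Run step_fn until it reports done, but never more than max_steps times."""
    state: State = {"done": False}
    for _ in range(max_steps):
        state = call_with_retry(lambda: step_fn(state))
        if state.get("done"):
            return state
    raise StepLimitExceeded(f"no result after {max_steps} steps")


calls = {"n": 0}


def flaky_step(state: State) -> State:
    # Fails once to simulate a transient tool error, then makes progress.
    calls["n"] += 1
    if calls["n"] == 1:
        raise ConnectionError("transient tool failure")
    count = state.get("count", 0) + 1
    return {"count": count, "done": count >= 3}


final = run_agent(flaky_step, max_steps=5)
print(final, "after", calls["n"], "calls")
```

The hard cap turns a potential infinite loop into an explicit error that monitoring can catch, while the retry wrapper absorbs the single transient failure without losing the run.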

How to Choose the Right AI Agent Platform

The best platform depends on your goals.

For Startups

Prioritize:

- Fast iteration

- Strong APIs

- Low infrastructure overhead

For Enterprises

Focus on:

- Security

- Governance

- Long-context reliability

- Compliance support

For Open-Source Projects

Consider:

- Self-hosted models

- Open-weight ecosystems

- Cost-efficient inference

For High-Scale Products

Optimize for:

- Latency

- Routing

- Infrastructure efficiency

- Observability

The Future of AI Agent Platforms

The AI agent ecosystem is shifting from standalone models toward complete operational stacks.

Over the next few years, the market will likely focus on:

- Multi-agent systems

- Persistent memory

- Real-time multimodal reasoning

- Autonomous workflow execution

- Hybrid local-cloud architectures

- Specialized reasoning models

As AI agents become more capable, infrastructure quality may become a larger competitive advantage than raw model intelligence alone.

Key Takeaways

- AI agent systems require much more than a language model API.

- OpenAI, Anthropic, Google, and DeepSeek are among the leading AI agent API providers.

- Long context, latency, pricing, and infrastructure are major platform evaluation factors.

- Vector databases and orchestration systems are essential components of modern AI agents.

- Self-hosted AI agents are becoming more viable as open-weight models improve.

- Cost optimization and inference efficiency are increasingly important for production deployments.

- Reliable AI agents depend heavily on backend engineering and observability systems.

FAQ

What is an AI agent API?

An AI agent API allows developers to connect applications to language models and agent capabilities such as reasoning, tool calling, retrieval, and workflow execution.

Which AI API is best for agents?

The best API depends on the use case. OpenAI is widely used for general-purpose agents, Anthropic is popular for long-context workflows, DeepSeek is commonly evaluated for cost-efficient coding agents, and Google is a natural fit for organizations already using Google Cloud.

What infrastructure do AI agents need?

Most production AI agents require model APIs, vector databases, orchestration frameworks, backend systems, observability tooling, and inference infrastructure.

Are self-hosted AI agents practical?

Yes. Self-hosted AI agents are increasingly practical for enterprises and developers using open-weight models and GPU infrastructure.

Why are vector databases important for AI agents?

Vector databases help agents retrieve contextual information and maintain memory using semantic search and embeddings.

What affects AI API latency?

Latency depends on model size, context length, inference infrastructure, geographic region, and workflow complexity.

How can AI agent costs be reduced?

Costs can be reduced through caching, smaller routing models, inference optimization, retrieval systems, and efficient prompt management.

What is the difference between cloud and local AI agents?

Cloud agents use hosted APIs and managed infrastructure, while local agents run on self-managed hardware or edge systems.