A practical guide to long context AI models, including how they work, real-world use cases, infrastructure tradeoffs, and how developers use them in modern AI agent systems.

As AI systems evolve beyond simple chat interfaces into full-scale autonomous agents, one capability has become increasingly important: long context processing.

Modern AI agents are expected to:

- Analyze large documents

- Maintain memory across sessions

- Understand entire codebases

- Process multi-step workflows

- Operate within enterprise knowledge systems

This is where long context AI models come in.

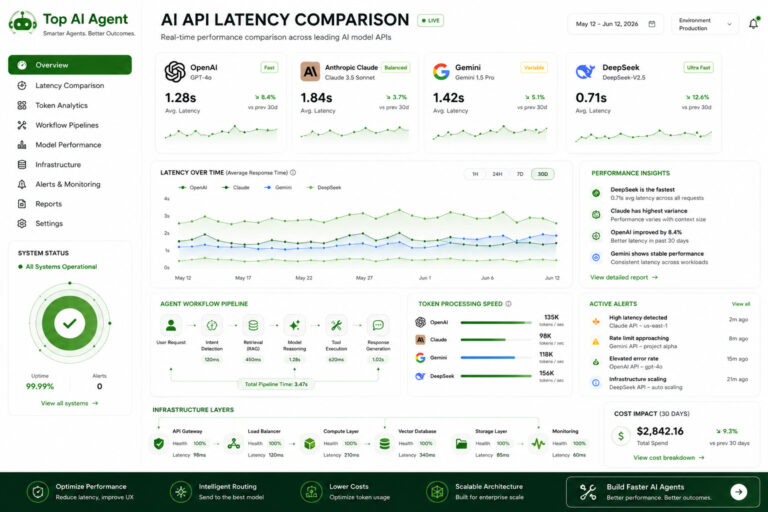

Leading providers like OpenAI, Anthropic, and Google are all investing heavily in expanding context windows, making long-context reasoning a core feature of modern AI infrastructure.

This guide explains what long context AI models are, how they work, their limitations, and how they are used in real-world AI agent systems.

What Are Long Context AI Models?

A long context AI model is a language model capable of processing large amounts of input text (or multimodal data) in a single request.

Context refers to:

- The total input the model can “see” at once

- Including prompts, instructions, documents, and conversation history

Simple Example

| Model Type | Context Capability |
|---|---|
| Standard models | Short conversations |
| Long context models | Entire documents, repositories, workflows |

What Counts as “Long Context”?

Definitions vary by provider and change over time, but the term usually refers to context windows of:

- Tens of thousands of tokens

- Hundreds of thousands of tokens

- In some cases, over a million tokens

This enables AI systems to process significantly more information in a single reasoning step.
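To get a feel for these sizes, the sketch below estimates how many tokens a document might use and whether it would fit in a given window. The 4-characters-per-token ratio is an assumed rule of thumb for English text, not a property of any specific model, and `estimate_tokens` and `fits_in_window` are hypothetical helper names. For real numbers, use the tokenizer that ships with your model.

```python
# Assumed heuristic: roughly 4 characters per token for English text.
# Real tokenizers differ by model and language.
CHARS_PER_TOKEN = 4


def estimate_tokens(text: str) -> int:
    """Return an approximate token count for `text`."""
    return len(text) // CHARS_PER_TOKEN


def fits_in_window(text: str, window_tokens: int, reserve_for_output: int = 1024) -> bool:
    """Check whether `text` fits while leaving room for the model's reply."""
    return estimate_tokens(text) + reserve_for_output <= window_tokens


document = "word " * 50_000  # 250,000 characters of sample text

print(estimate_tokens(document))                         # 62500
print(fits_in_window(document, window_tokens=32_000))    # False
print(fits_in_window(document, window_tokens=128_000))   # True
```

Under this estimate, the same document overflows a 32,000-token window but fits comfortably in a 128,000-token one.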

Why Long Context Matters for AI Agents

AI agents rely heavily on context.

Without sufficient context, agents struggle with:

- Memory consistency

- Multi-step reasoning

- Document understanding

- Workflow continuity

Key Benefits

1. Document-Level Understanding

Agents can analyze:

- Legal contracts

- Research papers

- Technical documentation

- Entire PDFs

2. Session Memory

Within a single context window, long context allows agents to:

- Maintain conversation history

- Track workflows

- Keep earlier decisions in view

3. Codebase Comprehension

Coding agents can:

- Read large repositories

- Understand dependencies

- Debug across files

4. Multi-Step Reasoning

Agents can:

- Plan tasks

- Track intermediate steps

- Maintain reasoning chains

How Long Context Models Work

Long context models extend traditional transformer architectures to handle larger input windows.

However, processing more data introduces challenges:

- Memory usage

- Computation cost

- Attention scaling

- Latency

Simplified Concept

The model:

- Receives a large input (documents, history, instructions)

- Applies attention mechanisms across tokens

- Generates output based on the entire context

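The larger the context, the more computation is required. With standard self-attention, every token is compared with every other token, so the cost grows roughly with the square of the input length.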
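The scaling can be made concrete with a little arithmetic. The sketch below assumes plain dense self-attention, where one attention layer scores every token against every other token. Production models may use optimizations that change these numbers, so treat it as an illustration of the trend rather than a cost model. `attention_score_entries` is a hypothetical helper name.

```python
def attention_score_entries(num_tokens: int) -> int:
    """Entries in one dense self-attention score matrix (tokens x tokens)."""
    return num_tokens * num_tokens


BASELINE_TOKENS = 4_000

for n in (4_000, 32_000, 128_000):
    # How many times more pairwise scores than the 4,000-token baseline.
    ratio = attention_score_entries(n) // attention_score_entries(BASELINE_TOKENS)
    print(f"{n:>7,} tokens -> {ratio:,}x the pairwise scores of {BASELINE_TOKENS:,} tokens")
```

Growing the input 8x (4,000 to 32,000 tokens) multiplies the pairwise work by 64, and growing it 32x multiplies it by 1,024.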

Long Context vs Retrieval (RAG)

Many developers assume long context replaces retrieval systems.

In reality, they are complementary.

Comparison Table

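| Approach | Strength | Limitation |
|---|---|---|
| Long Context | Simple pipeline; the model sees everything at once | Higher cost and latency per request |
| Retrieval (RAG) | Efficient; sends only relevant content | Requires indexing and retrieval infrastructure |
| Hybrid Approach | Best balance of quality and cost | More complex setup |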

Why Hybrid Systems Win

Many production AI agents combine:

- Long context for reasoning

- Retrieval for memory efficiency

Compared with sending everything in every request, this combination reduces:

- Token usage

- Latency

- Infrastructure cost
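A hybrid setup can be sketched in a few lines: retrieve the most relevant chunks first, then place only those in the prompt. The example below uses a toy keyword-overlap score as a stand-in for a real embedding search, and the function names (`retrieve`, `build_prompt`) and sample policies are made up for illustration.

```python
import re
from typing import List


def words(text: str) -> set:
    """Lowercase the text and split it into a set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def score(query: str, chunk: str) -> int:
    """Toy relevance score: number of query words that appear in the chunk."""
    return len(words(query) & words(chunk))


def retrieve(query: str, chunks: List[str], top_k: int = 1) -> List[str]:
    """Return the top_k chunks with the highest overlap score."""
    ranked = sorted(chunks, key=lambda chunk: score(query, chunk), reverse=True)
    return ranked[:top_k]


def build_prompt(query: str, chunks: List[str], top_k: int = 1) -> str:
    """Assemble a prompt that contains only the retrieved chunks."""
    context = "\n\n".join(retrieve(query, chunks, top_k))
    return f"Context:\n{context}\n\nQuestion: {query}"


chunks = [
    "Refund policy: customers may request a refund within 30 days.",
    "Shipping policy: orders ship within 2 business days.",
    "Security policy: rotate API keys every 90 days.",
]

print(build_prompt("What is the refund policy?", chunks))
```

Only the refund chunk reaches the model here. In a real system the scoring step would typically be a vector database query, while the prompt assembly step stays much the same.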

Leading Long Context AI Models

Several providers offer long-context capabilities.

OpenAI Models

OpenAI models support extended context for:

- Agents

- Coding workflows

- Multimodal applications

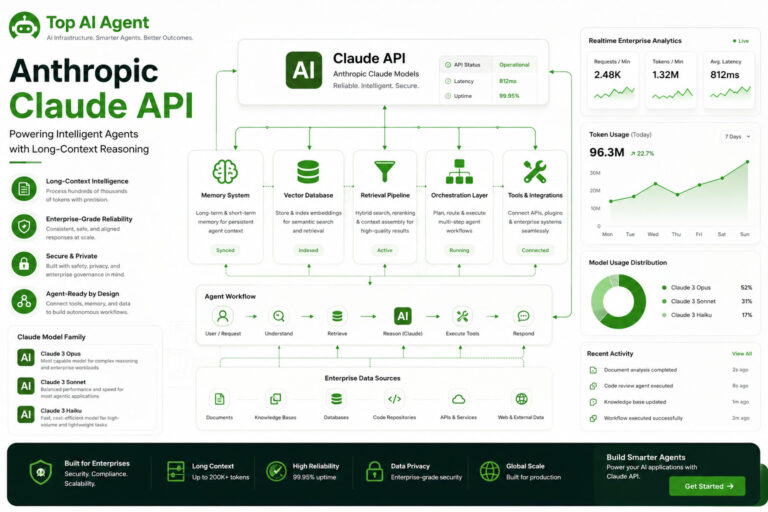

Anthropic Claude

Anthropic is widely known for:

- Very large context windows

- Document-heavy workflows

- Enterprise use cases

Google Gemini

Google focuses on:

- Multimodal long context

- Integration with cloud infrastructure

- Enterprise-scale AI systems

DeepSeek Models

DeepSeek is increasingly evaluated for:

- Cost-efficient reasoning

- Coding workflows

- Long-context experimentation

Real-World Use Cases

Enterprise Knowledge Systems

AI agents analyze:

- Internal documents

- Policies

- Knowledge bases

Legal & Compliance

Long context enables:

- Contract analysis

- Regulation review

- Multi-document reasoning

Coding Agents

Developers use long context for:

- repository understanding

- debugging across files

- documentation generation

Research Agents

AI systems can:

- analyze multiple sources

- synthesize insights

- maintain long reasoning chains

Customer Support Automation

Agents can:

- access historical conversations

- retrieve account context

- maintain continuity

Limitations of Long Context Models

Despite their advantages, long context models introduce tradeoffs.

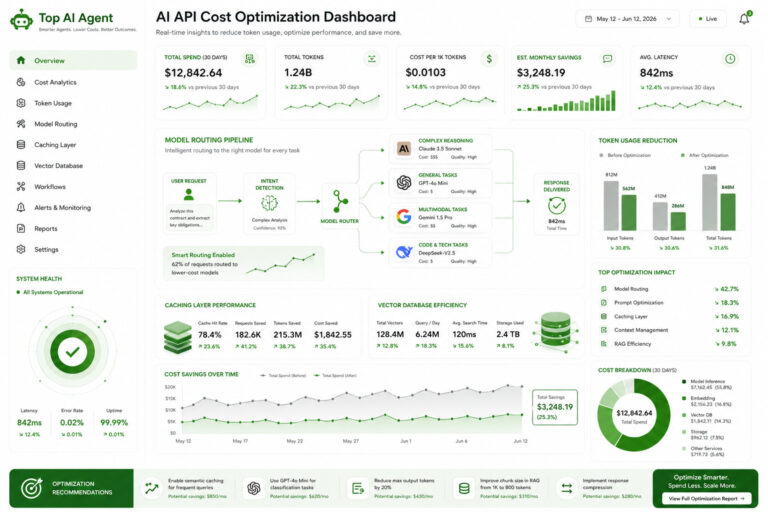

Cost

A larger context means higher token usage on every request.

This can significantly increase:

- API costs

- infrastructure spending
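A quick back-of-the-envelope calculation shows how fast this adds up. The price below is a hypothetical figure chosen to keep the arithmetic simple, not a quote from any provider, and the token counts are illustrative.

```python
# Hypothetical input price, for illustration only.
PRICE_PER_MILLION_INPUT_TOKENS_USD = 3.00


def input_cost_usd(tokens_per_request: int, requests: int) -> float:
    """Estimate input-token spend for a number of identical requests."""
    total_tokens = tokens_per_request * requests
    return total_tokens / 1_000_000 * PRICE_PER_MILLION_INPUT_TOKENS_USD


# Sending a full 200,000-token document on every one of 1,000 requests...
full_document = input_cost_usd(tokens_per_request=200_000, requests=1_000)
# ...versus sending 5,000 tokens of retrieved context each time.
retrieved_only = input_cost_usd(tokens_per_request=5_000, requests=1_000)

print(f"Full document each time: ${full_document:,.2f}")   # $600.00
print(f"Retrieved context only:  ${retrieved_only:,.2f}")  # $15.00
```

At this assumed price, the two strategies differ by a factor of 40 on input cost alone, before output tokens or latency are considered.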

Latency

Longer inputs require more processing time.

This impacts:

- responsiveness

- user experience

- real-time workflows

Context Dilution

More context does not always mean better results.

Problems include:

- irrelevant information

- weaker attention focus

- degraded reasoning quality

Memory Inefficiency

Sending large amounts of data repeatedly is inefficient.

This is why retrieval systems are still necessary.

Best Practices for Using Long Context

Use Retrieval First

Only send relevant context instead of entire datasets.

Summarize Memory

Compress older conversations into shorter summaries.
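One way to sketch this is to keep the most recent turns verbatim and replace everything older with a single summary line. `naive_summarize` below is only a placeholder that truncates each message. In practice you would likely call a model to write the summary. All names here are hypothetical.

```python
from typing import Callable, List


def naive_summarize(messages: List[str]) -> str:
    """Placeholder summarizer: keeps the first 30 characters of each message."""
    return " | ".join(message[:30] for message in messages)


def compress_history(
    messages: List[str],
    keep_recent: int,
    summarize: Callable[[List[str]], str] = naive_summarize,
) -> List[str]:
    """Replace all but the last `keep_recent` messages with one summary line."""
    split = max(0, len(messages) - keep_recent)
    older, recent = messages[:split], messages[split:]
    if not older:
        return list(recent)
    return [f"Summary of earlier conversation: {summarize(older)}"] + recent


history = [
    "User: I need help with invoice 1042.",
    "Agent: I found invoice 1042 for March.",
    "User: The total looks wrong.",
    "Agent: The tax rate was updated in April.",
    "User: Please resend the corrected invoice.",
]

for line in compress_history(history, keep_recent=2):
    print(line)
```

The last two turns stay intact, so the model still sees the exact wording of the current request, while the earlier turns shrink to one line.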

Combine Models

Use:

- smaller models for orchestration

- larger models for deep reasoning
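A simple router illustrates the idea. The model names, task kinds, and the 16,000-token threshold below are all placeholders invented for this sketch. Substitute whatever models and limits your stack actually uses.

```python
from dataclasses import dataclass


@dataclass
class Task:
    kind: str          # e.g. "route", "classify", "analyze"
    input_tokens: int  # estimated size of the input


# Hypothetical set of lightweight orchestration task kinds.
ORCHESTRATION_KINDS = {"route", "classify", "extract"}


def pick_model(task: Task, small_window: int = 16_000) -> str:
    """Send short orchestration tasks to a small model, everything else to a large one."""
    if task.kind in ORCHESTRATION_KINDS and task.input_tokens <= small_window:
        return "small-model"               # placeholder name
    return "large-long-context-model"      # placeholder name


print(pick_model(Task(kind="classify", input_tokens=800)))
print(pick_model(Task(kind="analyze", input_tokens=150_000)))
```

The design choice is to make the cheap path the exception that must be earned: a task goes to the small model only if it is both a known lightweight kind and small enough to fit.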

Limit Context Size

Avoid sending unnecessary data.
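A token budget is one straightforward way to enforce this. The sketch below walks the history from newest to oldest and stops as soon as the next message would exceed the budget. Counting whitespace-separated words stands in for a real tokenizer, and the function names are hypothetical.

```python
from typing import Callable, List


def count_words(text: str) -> int:
    """Stand-in for a tokenizer: counts whitespace-separated words."""
    return len(text.split())


def trim_to_budget(
    messages: List[str],
    max_tokens: int,
    count_tokens: Callable[[str], int] = count_words,
) -> List[str]:
    """Keep the newest messages whose combined size fits within max_tokens."""
    kept: List[str] = []
    used = 0
    for message in reversed(messages):
        cost = count_tokens(message)
        if used + cost > max_tokens:
            break  # everything older is dropped as well
        kept.append(message)
        used += cost
    kept.reverse()  # restore chronological order
    return kept


messages = ["a b c", "d e", "f g h i"]
print(trim_to_budget(messages, max_tokens=6))  # ['d e', 'f g h i']
```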

Use Structured Inputs

Organize prompts clearly:

- sections

- headings

- labeled data
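As an illustration, the helper below assembles a prompt with labeled sections for instructions, documents, and the question. The section markers and the `build_structured_prompt` name are assumptions made for this sketch. Any consistent labeling scheme should serve the same purpose.

```python
from typing import Dict


def build_structured_prompt(instructions: str, documents: Dict[str, str], question: str) -> str:
    """Assemble a prompt with clearly labeled sections."""
    parts = ["## Instructions", instructions.strip(), "", "## Documents"]
    for name, text in documents.items():
        parts.append(f"### {name}")  # label each document by name
        parts.append(text.strip())
        parts.append("")
    parts += ["## Question", question.strip()]
    return "\n".join(parts)


prompt = build_structured_prompt(
    instructions="Answer using only the documents provided.",
    documents={
        "contract.txt": "The agreement renews annually unless cancelled.",
        "policy.txt": "Cancellations require 30 days written notice.",
    },
    question="How do I cancel before renewal?",
)
print(prompt)
```

Keeping the question at the end and naming each document makes it easier to ask the model to cite which source it used.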

Long Context in AI Agent Architecture

Modern AI agents use long context as one component of a larger system.

Typical Architecture

| Layer | Role |
|---|---|
| LLM (long context) | Reasoning |
| Vector database | Memory |
| Orchestration | Workflow control |
| Backend system | State management |
| Monitoring | Observability |
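The layers in the table can be wired together in a very small skeleton. This is a sketch under heavy assumptions: the model call, memory search, and logger are passed in as plain functions (stubbed below), and the `Agent` class is a hypothetical shape rather than any framework's API.

```python
from typing import Callable, Dict, List


class Agent:
    """Minimal orchestration loop that touches each layer once per turn."""

    def __init__(
        self,
        llm: Callable[[str], str],
        memory_search: Callable[[str], List[str]],
        log: Callable[[str], None],
    ) -> None:
        self.llm = llm
        self.memory_search = memory_search
        self.log = log

    def run(self, session_state: Dict[str, list], user_input: str) -> str:
        memories = self.memory_search(user_input)        # memory layer
        prompt = "\n".join(memories + [user_input])      # context assembly
        reply = self.llm(prompt)                         # reasoning layer
        session_state.setdefault("turns", []).append((user_input, reply))  # state
        self.log(f"prompt_chars={len(prompt)}")          # monitoring
        return reply


# Stubs standing in for a real model, vector database, and logger.
logs: List[str] = []
agent = Agent(
    llm=lambda prompt: f"echo:{len(prompt)}",
    memory_search=lambda query: ["fact A"],
    log=logs.append,
)
state: Dict[str, list] = {}
print(agent.run(state, "hello"))  # echo:12
```

Because each layer is injected, the stubs can be swapped for real services one at a time without changing the loop.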

Why This Matters

Long context alone cannot:

- manage workflows

- maintain persistent memory efficiently

- handle real-world systems

It must be combined with infrastructure.

Long Context vs Memory Systems

There’s a common misconception:

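“Long context = memory”

This is only partly true. A context window holds information for a single request, while a memory system keeps it across requests and sessions.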

Key Difference

| Concept | Description |
|---|---|
| Long Context | Temporary input window |
| Memory Systems | Persistent storage |

Real-World Setup

Agents typically use:

- vector databases for memory

- long context for reasoning
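The difference shows up clearly in code: memory is written once and survives, while context is rebuilt for every request. The sketch below uses Python's built-in `sqlite3` as a stand-in for a real memory store (a vector database would replace the key lookup with similarity search). The `MemoryStore` class and `build_context` function are hypothetical names.

```python
import sqlite3
from typing import List, Optional


class MemoryStore:
    """Tiny key-value memory. Pass a file path instead of ':memory:' to persist to disk."""

    def __init__(self, path: str = ":memory:") -> None:
        self.db = sqlite3.connect(path)
        self.db.execute(
            "CREATE TABLE IF NOT EXISTS facts (key TEXT PRIMARY KEY, value TEXT)"
        )

    def remember(self, key: str, value: str) -> None:
        self.db.execute("INSERT OR REPLACE INTO facts VALUES (?, ?)", (key, value))
        self.db.commit()

    def recall(self, key: str) -> Optional[str]:
        row = self.db.execute(
            "SELECT value FROM facts WHERE key = ?", (key,)
        ).fetchone()
        return row[0] if row else None


def build_context(store: MemoryStore, keys: List[str], user_input: str) -> str:
    """Rebuild the temporary context for one request from stored facts."""
    lines = []
    for key in keys:
        value = store.recall(key)
        if value is not None:
            lines.append(f"{key}: {value}")
    lines.append(user_input)
    return "\n".join(lines)


store = MemoryStore()
store.remember("plan", "enterprise")
print(build_context(store, ["plan", "missing"], "Can I add users?"))
```

The stored fact outlives any single request, while the string returned by `build_context` exists only for the call that needs it.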

The Future of Long Context AI

Long context models are improving rapidly.

Future trends include:

- larger context windows

- better attention efficiency

- lower cost inference

- improved reasoning accuracy

- multimodal long-context systems

However, infrastructure will remain critical.

The future is not just bigger context, but smarter context management.

Final Thoughts

Long context AI models are a key building block for modern AI agents.

They enable:

- deeper reasoning

- better document understanding

- improved workflow continuity

But they also introduce:

- higher costs

- latency tradeoffs

- architectural complexity

For developers building production AI systems, the most effective approach is not relying solely on long context, but combining it with:

- retrieval systems

- orchestration frameworks

- backend infrastructure

- memory optimization strategies

Understanding how to balance these components is now essential for building scalable AI agents.

Key Takeaways

- Long context AI models allow processing large inputs in a single request.

- They are critical for document analysis, coding agents, and enterprise workflows.

- Larger context windows increase cost and latency.

- Retrieval systems (RAG) are often combined with long context.

- Long context is not the same as persistent memory.

- Hybrid architectures are the most effective approach.

- Infrastructure design matters as much as model capability.

- Efficient context management is becoming a core AI engineering skill.

FAQ

What is a long context AI model?

A long context AI model can process large amounts of text or data in a single input, enabling deeper reasoning and document-level understanding.

How many tokens is considered long context?

Typically tens of thousands to hundreds of thousands of tokens, and in some cases over a million, depending on the model.

Do long context models replace vector databases?

No. Most systems use both long context and retrieval systems together.

Why are long context models expensive?

They process more tokens, which increases computational cost and API usage.

Are long context models slower?

Yes. Larger inputs generally result in higher latency.

What are the best use cases for long context?

Document analysis, coding agents, research systems, and enterprise AI workflows.

How do developers optimize long context usage?

By using retrieval systems, summarization, caching, and selective memory management.

Is long context the future of AI?

It’s an important part, but efficiency and infrastructure design will matter just as much.