A practical guide to Google’s AI agent ecosystem, including Gemini models, Vertex AI, orchestration systems, enterprise automation, multimodal workflows, and scalable AI infrastructure.

As AI agents evolve from experimental assistants into production-grade enterprise systems, organizations are increasingly evaluating platforms that combine advanced language models with scalable cloud infrastructure, orchestration tooling, retrieval systems, and enterprise integrations.

One of the most significant ecosystems in this space is Google’s expanding AI platform stack.

Google’s AI agent ecosystem combines:

- Gemini models

- Vertex AI

- AI infrastructure services

- Retrieval systems

- Cloud orchestration

- Multimodal AI capabilities

- Enterprise integrations

Together, these tools form a broader Google AI Agent Platform that developers increasingly use to build scalable autonomous AI systems.

This guide explores how Google’s AI platform supports AI agents, including infrastructure architecture, multimodal workflows, enterprise deployment, pricing considerations, latency tradeoffs, and practical implementation strategies.

What Is the Google AI Agent Platform?

Google’s AI agent ecosystem is built around several core technologies:

| Platform Component | Purpose |
|---|---|
| Gemini models | Reasoning and multimodal AI |
| Vertex AI | AI development and deployment |
| Google Cloud | Infrastructure and scalability |
| Retrieval systems | Knowledge and memory workflows |
| Workspace integrations | Productivity automation |
| Orchestration services | Workflow management |

Together, these systems allow developers to build:

- Enterprise AI assistants

- Retrieval-based knowledge agents

- Workflow automation systems

- Multimodal AI applications

- Autonomous research agents

- Customer support agents

Unlike providers that offer only a standalone model API, Google increasingly positions its ecosystem as a full-stack AI infrastructure platform.

Why Developers Use Google for AI Agents

AI agents require more than language generation.

Production systems often depend on:

- Scalable infrastructure

- Secure deployment

- Retrieval systems

- Enterprise integrations

- Monitoring and governance

- Workflow orchestration

Google’s ecosystem is especially attractive for organizations already using:

- Google Cloud

- Workspace

- BigQuery

- Kubernetes

- Enterprise analytics systems

For many enterprises, the platform’s infrastructure maturity matters as much as model quality.

Gemini Models for AI Agents

Google’s Gemini models serve as the reasoning layer behind many AI agent workflows.

Gemini models are commonly used for:

- Conversational AI

- Multimodal reasoning

- Research workflows

- Document analysis

- AI automation systems

- Enterprise assistants

Multimodal AI Capabilities

One of Gemini’s major differentiators is multimodal processing.

AI agents increasingly need to work with:

- Text

- Images

- Video

- Audio

- Documents

- Structured data

Gemini models are designed to support workflows involving multiple content types simultaneously.

Example Use Cases

| Workflow | Multimodal Capability |
|---|---|
| Visual document analysis | Text + image understanding |
| Meeting assistants | Audio + transcription |
| Enterprise search | Documents + structured data |
| Workflow automation | Cross-format reasoning |
| Research systems | Multi-source analysis |

Multimodal reasoning is becoming increasingly important for enterprise AI systems.

Vertex AI for AI Agent Development

Vertex AI acts as Google’s primary orchestration and deployment environment for AI applications.

Developers commonly use Vertex AI for:

- Model deployment

- Workflow management

- AI orchestration

- Agent pipelines

- Evaluation systems

- Monitoring infrastructure

Why Vertex AI Matters for Agents

AI agents require:

- State management

- Scalable inference

- Backend coordination

- Monitoring

- Retrieval integration

Vertex AI helps manage these operational layers inside Google Cloud infrastructure.

Common Vertex AI Use Cases

| Use Case | Why Teams Use Vertex AI |
|---|---|
| Enterprise assistants | Scalable deployment |
| Retrieval systems | Integration with cloud storage |
| AI automation | Workflow orchestration |
| Data-centric AI | BigQuery integration |
| Research agents | Infrastructure scalability |

For enterprises already operating within Google Cloud, Vertex AI can reduce infrastructure complexity.

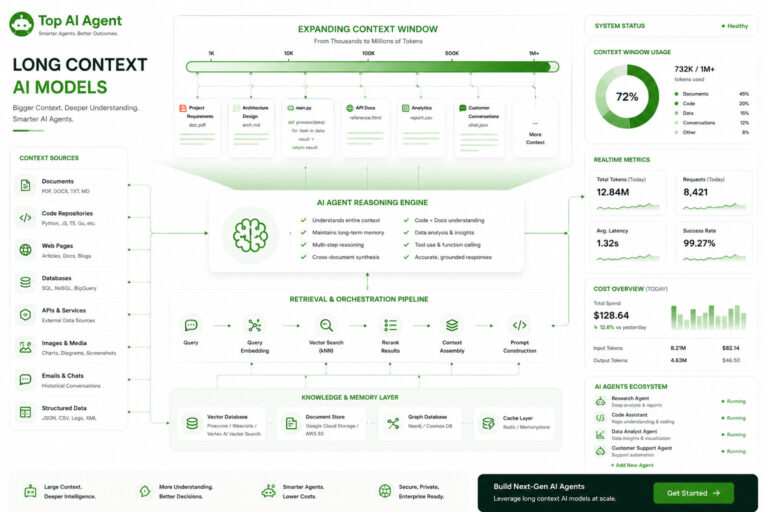

Google AI Agent Infrastructure

Google’s ecosystem is particularly strong in cloud infrastructure.

AI agents built on Google Cloud commonly integrate:

- Kubernetes

- BigQuery

- Cloud Functions

- Pub/Sub

- Vector retrieval systems

- Identity and access management

This allows organizations to create highly scalable AI architectures.

Typical Google AI Agent Architecture

| Layer | Purpose |
|---|---|
| Gemini models | Reasoning engine |
| Vertex AI | Orchestration and deployment |
| Vector database | Retrieval and memory |
| Google Cloud | Infrastructure and scaling |
| Backend services | Workflow coordination |
| Monitoring systems | Observability and logging |

This modular approach mirrors broader trends in AI infrastructure engineering.
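
One way to picture this layering is as a small agent object with each layer injected as a plain function. The sketch below is illustrative only: `Agent`, `fake_retrieve`, and `fake_generate` are hypothetical names, not Google APIs, and the stubs stand in for a vector database and a model call so the example runs without any cloud services.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Agent:
    """Hypothetical agent that wires the layers together as injected callables."""
    retrieve: Callable[[str], List[str]]   # retrieval and memory layer
    generate: Callable[[str], str]         # reasoning engine (a model call in practice)
    log: Callable[[str], None] = print     # observability and logging layer

    def run(self, question: str) -> str:
        self.log(f"question: {question}")
        context = self.retrieve(question)
        self.log(f"retrieved {len(context)} passages")
        prompt = "\n".join(context + [f"Question: {question}"])
        answer = self.generate(prompt)
        self.log("answer generated")
        return answer


# Stub layers (assumptions) so the sketch is runnable on its own.
def fake_retrieve(query: str) -> List[str]:
    return ["Context: refunds are processed within 5 days."]


def fake_generate(prompt: str) -> str:
    return f"(model output for a {len(prompt)}-character prompt)"


events: List[str] = []
agent = Agent(retrieve=fake_retrieve, generate=fake_generate, log=events.append)
answer = agent.run("How long do refunds take?")
```

Because each layer is passed in rather than hard-coded, a stub can later be swapped for a managed service without changing the agent loop itself.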

Retrieval-Augmented Generation (RAG) on Google Cloud

Many enterprise AI agents rely on retrieval systems to ground their answers in current, organization-specific data.

Retrieval-augmented generation (RAG) allows AI agents to:

- Search external knowledge

- Inject relevant context dynamically

- Reduce hallucinations

- Improve factual grounding
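
A minimal sketch of the retrieve-then-inject pattern described above, in plain Python. It assumes a toy bag-of-words cosine similarity in place of real embeddings and a vector database, and the documents and function names (`retrieve`, `build_prompt`) are made up for illustration.

```python
import math
import re
from collections import Counter
from typing import List


def tokenize(text: str) -> Counter:
    """Lowercase word counts; a crude stand-in for an embedding."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, documents: List[str], k: int = 2) -> List[str]:
    """Return the k documents most similar to the query."""
    q = tokenize(query)
    ranked = sorted(documents, key=lambda d: cosine(q, tokenize(d)), reverse=True)
    return ranked[:k]


def build_prompt(query: str, documents: List[str], k: int = 2) -> str:
    """Inject the retrieved passages into the prompt as grounding context."""
    context = "\n".join(f"- {d}" for d in retrieve(query, documents, k))
    return f"Answer using only this context:\n{context}\n\nQuestion: {query}"


# Hypothetical knowledge base.
DOCS = [
    "Expense reports must be submitted within 30 days of purchase.",
    "The VPN client is required for remote access to internal tools.",
    "Vacation requests need manager approval two weeks in advance.",
]

prompt = build_prompt("When are expense reports due?", DOCS, k=1)
```

The prompt that reaches the model contains only the expense-report passage, which is the mechanism by which retrieval narrows what the model sees to the relevant source text.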

Google’s infrastructure stack supports:

- Document indexing

- Semantic retrieval

- Enterprise search

- Cloud storage integration

This is especially useful for:

- Internal enterprise assistants

- Organizational search systems

- Research automation

- Knowledge management agents

Google Workspace AI Automation

One of Google’s advantages is deep integration with productivity tools.

AI agents can potentially interact with:

- Gmail

- Docs

- Sheets

- Calendar

- Drive

- Meet

This creates opportunities for:

- Workflow automation

- Scheduling systems

- Enterprise copilots

- Internal operational agents

For organizations already standardized on Workspace, this can significantly simplify deployment.

Google AI Agent Platform vs OpenAI

OpenAI remains one of the dominant AI ecosystems, but Google offers several advantages in infrastructure-centric deployments.

Google Strengths

| Area | Advantage |
|---|---|
| Cloud integration | Deep infrastructure ecosystem |
| Enterprise tooling | Mature operational stack |
| Multimodal workflows | Strong cross-format AI |
| Data infrastructure | BigQuery and analytics integration |

OpenAI Strengths

| Area | Advantage |
|---|---|
| Simpler onboarding | Easier rapid prototyping |
| Developer ecosystem | Broad API adoption |
| Tooling maturity | Extensive integrations |
| Community support | Larger AI startup ecosystem |

Many enterprises now use hybrid multi-provider AI architectures.

Google AI Agent Platform vs Anthropic Claude

Anthropic is often preferred for:

- Long-context workflows

- Research-heavy systems

- Document analysis

Google’s ecosystem is frequently selected for:

- Cloud-native enterprise deployment

- Infrastructure scalability

- Workspace integrations

- Multimodal AI applications

The choice depends heavily on organizational priorities.

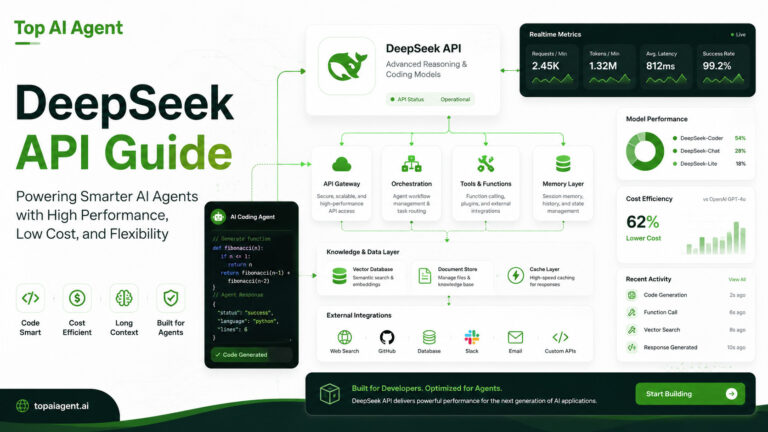

Google AI Agent Platform vs DeepSeek

DeepSeek is commonly evaluated for:

- Lower-cost inference

- Coding workflows

- Open infrastructure ecosystems

Google focuses more heavily on:

- Enterprise cloud deployment

- Managed infrastructure

- Scalable orchestration systems

These platforms often serve different operational priorities.

AI Agent Latency and Performance Considerations

Latency becomes increasingly important as AI agents execute multi-step workflows.

A typical agent pipeline may involve:

- Retrieval

- Planning

- Tool execution

- Reasoning

- Additional orchestration

- Response generation

Even small delays can compound quickly.
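
To make the compounding concrete, here is a small arithmetic sketch. The per-stage latencies are invented placeholder numbers, not measurements of any Google service, and the model assumes every stage runs strictly one after another.

```python
# Hypothetical per-stage latencies in milliseconds; real values vary widely.
STAGE_LATENCY_MS = {
    "retrieval": 120,
    "planning": 400,
    "tool_execution": 300,
    "reasoning": 600,
    "orchestration": 50,
    "response_generation": 500,
}


def sequential_latency_ms(stages: dict, loops: int = 1) -> int:
    """Total latency when all stages run sequentially, repeated `loops` times."""
    return sum(stages.values()) * loops


def with_overhead(stages: dict, extra_ms: int) -> dict:
    """Add the same extra delay to every stage."""
    return {name: ms + extra_ms for name, ms in stages.items()}


single_pass = sequential_latency_ms(STAGE_LATENCY_MS)                     # 1970 ms
three_loops = sequential_latency_ms(STAGE_LATENCY_MS, loops=3)            # 5910 ms
slower = sequential_latency_ms(with_overhead(STAGE_LATENCY_MS, 100), 3)   # 7710 ms
```

Under these assumptions, an extra 100 ms per stage adds 1.8 seconds to a three-iteration agent run, which is why per-step overhead matters more for agents than for single-call applications.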

Factors Affecting Google AI Agent Performance

| Factor | Impact |
|---|---|
| Model complexity | Larger models increase latency |
| Context size | Long prompts require more processing |
| Cloud region | Geographic distance affects speed |
| Retrieval workflows | Additional orchestration overhead |
| Concurrent workloads | Parallel agents increase demand |

Infrastructure optimization becomes essential for production-scale AI systems.

Security and Governance

Large organizations increasingly prioritize:

- Data governance

- Compliance

- Identity management

- Access controls

- Auditability

Google’s cloud ecosystem provides enterprise-grade governance tooling that is particularly important in regulated industries.
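
At the application level, access controls and auditability often come down to checking permissions before an agent calls a tool and recording every attempt. The following is a simplified sketch under stated assumptions: the `PERMISSIONS` table, role names, and tools are hypothetical, and a real deployment would defer to the cloud provider’s identity and logging services rather than in-memory structures.

```python
from datetime import datetime, timezone
from typing import Callable, Dict, List, Set

# Hypothetical role-to-tool permissions (an assumption for illustration).
PERMISSIONS: Dict[str, Set[str]] = {
    "analyst": {"search_docs"},
    "admin": {"search_docs", "send_email"},
}

AUDIT_LOG: List[dict] = []


def call_tool(role: str, tool_name: str, tool: Callable[[str], str], arg: str) -> str:
    """Record the attempt, then run the tool only if the role is permitted."""
    allowed = tool_name in PERMISSIONS.get(role, set())
    AUDIT_LOG.append({
        "time": datetime.now(timezone.utc).isoformat(),
        "role": role,
        "tool": tool_name,
        "allowed": allowed,
    })
    if not allowed:
        raise PermissionError(f"{role} may not call {tool_name}")
    return tool(arg)


def search_docs(query: str) -> str:
    return f"results for {query!r}"


def send_email(body: str) -> str:
    return "sent"


result = call_tool("analyst", "search_docs", search_docs, "quarterly report")
try:
    call_tool("analyst", "send_email", send_email, "hello")
    denied = False
except PermissionError:
    denied = True
```

Logging before the permission check means denied attempts are captured as well, which is usually what an audit trail needs.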

This is one reason many enterprises evaluate Google for:

- Internal AI assistants

- Healthcare workflows

- Financial automation

- Enterprise search systems

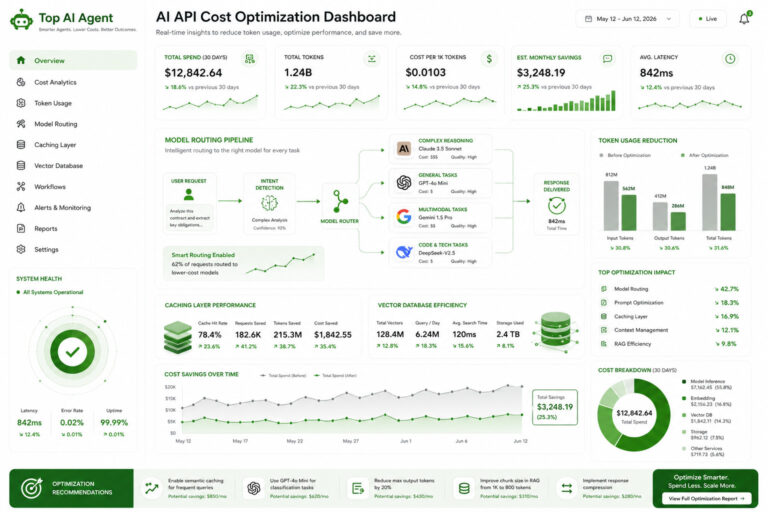

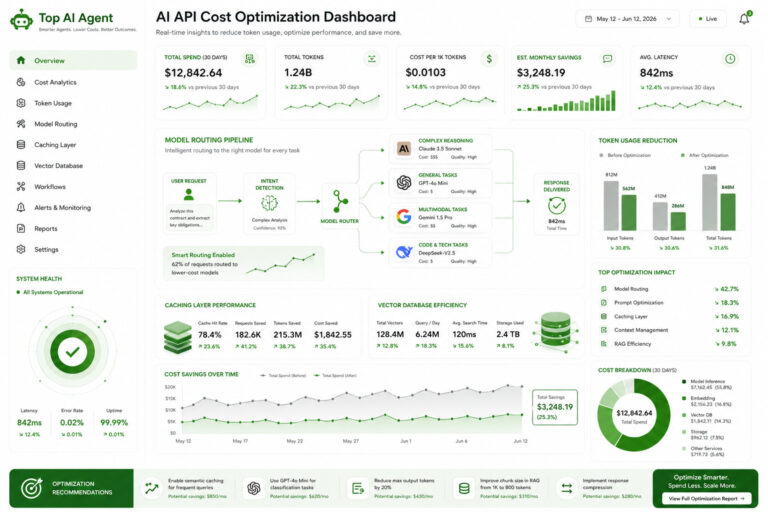

Cost Considerations for Google AI Agents

AI agents can generate substantial infrastructure costs because they involve:

- Long-context prompts

- Retrieval systems

- Continuous inference

- Tool orchestration

- Multi-agent coordination

Major Cost Factors

| Factor | Cost Impact |
|---|---|
| Model usage | Token-based inference costs |
| Retrieval systems | Vector storage and search |
| Cloud infrastructure | Compute and networking |
| Persistent memory | Long-term storage overhead |
| Monitoring systems | Observability tooling |
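
Token-based inference is usually the easiest of these factors to estimate up front. The sketch below shows the arithmetic only; the prices and workload figures are placeholders chosen for round numbers, not actual Gemini or Vertex AI pricing.

```python
from dataclasses import dataclass


@dataclass
class Pricing:
    input_per_million: float    # USD per 1M input tokens (hypothetical)
    output_per_million: float   # USD per 1M output tokens (hypothetical)


def request_cost(pricing: Pricing, input_tokens: int, output_tokens: int) -> float:
    """Cost in USD of a single model call."""
    total = input_tokens * pricing.input_per_million + output_tokens * pricing.output_per_million
    return total / 1_000_000


def monthly_cost(pricing: Pricing, input_tokens: int, output_tokens: int,
                 calls_per_task: int, tasks_per_day: int, days: int = 30) -> float:
    """Agents make several model calls per task, so the per-call cost is multiplied out."""
    return request_cost(pricing, input_tokens, output_tokens) * calls_per_task * tasks_per_day * days


EXAMPLE = Pricing(input_per_million=1.00, output_per_million=4.00)

per_call = request_cost(EXAMPLE, input_tokens=8_000, output_tokens=500)
per_month = monthly_cost(EXAMPLE, 8_000, 500, calls_per_task=5, tasks_per_day=1_000)
```

With these placeholder figures a single call costs about one cent, but five calls per task at a thousand tasks per day comes to roughly 1,500 USD per month, before any retrieval, storage, or monitoring costs are added.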

Organizations increasingly optimize costs using:

- Model routing

- Retrieval (sending only relevant passages instead of entire documents)

- Prompt compression

- Caching

- Hybrid deployments
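
Two of these techniques, model routing and caching, can be sketched in a few lines. This is an illustration under simplifying assumptions: the model names are placeholders rather than real model IDs, the word-count routing rule is deliberately crude, `call_model` is a stub for a real inference call, and the cache only helps with exact repeat prompts.

```python
from functools import lru_cache

# Placeholder tier names (assumptions), not real model identifiers.
SMALL_MODEL = "small-model"
LARGE_MODEL = "large-model"

CALLS = {"count": 0}


def choose_model(prompt: str, max_small_words: int = 50) -> str:
    """Route short prompts to the cheaper tier and everything else to the larger one."""
    return SMALL_MODEL if len(prompt.split()) <= max_small_words else LARGE_MODEL


def call_model(model: str, prompt: str) -> str:
    """Stand-in for a real inference call; counts how often it is invoked."""
    CALLS["count"] += 1
    return f"[{model}] answer to: {prompt[:30]}"


@lru_cache(maxsize=1024)
def cached_answer(prompt: str) -> str:
    """Identical prompts are answered from the cache without a new model call."""
    return call_model(choose_model(prompt), prompt)


first = cached_answer("What is our refund policy?")
second = cached_answer("What is our refund policy?")   # served from the cache
long_answer = cached_answer("Summarize this report. " * 40)
```

Here three requests result in only two model calls, and only the long prompt reaches the more expensive tier.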

Challenges and Limitations

Despite its strengths, Google’s AI ecosystem also presents challenges.

Platform Complexity

Google Cloud infrastructure can be overwhelming for smaller teams and independent developers.

Rapid Product Changes

The AI ecosystem evolves quickly, and product naming, APIs, and workflows can change frequently.

Operational Overhead

Production AI systems still require:

- Backend engineering

- Monitoring

- Validation layers

- Workflow orchestration

No platform fully eliminates infrastructure complexity.

Vendor Dependency

Organizations building AI systems deeply tied to one cloud ecosystem must consider:

- Cloud lock-in

- Long-term operational costs

- Infrastructure portability

Best Use Cases for Google AI Agent Platform

Google’s ecosystem is particularly well suited for:

Enterprise AI Assistants

Internal knowledge systems and productivity copilots.

Multimodal AI Workflows

Applications combining text, audio, image, and document analysis.

Large-Scale Cloud AI Systems

Distributed AI infrastructure deployed across enterprise environments.

Research and Analytics Agents

AI systems integrated with large-scale data pipelines.

Workspace Automation

AI agents operating inside productivity workflows.

Is Google a Good Platform for AI Agents?

For many organizations, Google offers one of the strongest infrastructure ecosystems for enterprise AI deployment.

Its strengths include:

- Cloud scalability

- Enterprise integrations

- Multimodal AI

- Data infrastructure

- Workflow orchestration

However, the ideal platform depends on:

- Technical expertise

- Infrastructure strategy

- Budget

- Compliance requirements

- Workflow complexity

Increasingly, enterprises combine multiple AI providers into unified orchestration systems rather than depending entirely on a single ecosystem.
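
A common building block for this kind of multi-provider setup is an ordered fallback: try the preferred provider first and move to the next one on failure. The sketch below assumes each provider is wrapped as a plain function that takes a prompt and returns text. The `primary` and `secondary` functions are stubs, not real SDK calls.

```python
from typing import Callable, List, Tuple

Provider = Tuple[str, Callable[[str], str]]


def complete_with_fallback(prompt: str, providers: List[Provider]) -> Tuple[str, str]:
    """Try providers in order and return (provider_name, response) from the first success."""
    errors = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except Exception as exc:  # in production, catch provider-specific errors instead
            errors.append(f"{name}: {exc}")
    raise RuntimeError("all providers failed: " + "; ".join(errors))


# Stub providers (assumptions) so the sketch runs without network access.
def primary(prompt: str) -> str:
    raise TimeoutError("simulated timeout")


def secondary(prompt: str) -> str:
    return f"secondary answer to {prompt!r}"


used, response = complete_with_fallback(
    "ping", [("primary", primary), ("secondary", secondary)]
)
```

Keeping providers behind a uniform function signature like this is also what makes it practical to change the order, or add a provider, without touching the agent logic.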

Final Thoughts

The Google AI Agent Platform is evolving into a comprehensive infrastructure stack for enterprise AI systems.

By combining Gemini models, Vertex AI, cloud infrastructure, retrieval systems, and enterprise integrations, Google is positioning itself as a major player in production AI agent deployment.

As AI agents become more autonomous and infrastructure-intensive, scalable orchestration, monitoring, retrieval, and governance systems will become just as important as model intelligence itself.

For organizations building enterprise AI systems in 2026, Google’s ecosystem is now a major platform worth evaluating.

Key Takeaways

- Google’s AI ecosystem combines Gemini, Vertex AI, and Google Cloud infrastructure.

- The platform is well suited for enterprise AI agents and multimodal workflows.

- Retrieval-augmented generation (RAG) is central to many Google AI deployments.

- Vertex AI helps manage orchestration, deployment, and monitoring.

- Workspace integrations create strong enterprise automation opportunities.

- Infrastructure scalability is one of Google’s major strengths.

- AI agents require orchestration, memory systems, retrieval, and backend coordination.

- Hybrid multi-model AI architectures are becoming increasingly common.

FAQ

What is the Google AI Agent Platform?

Google’s AI agent platform combines Gemini models, Vertex AI, cloud infrastructure, retrieval systems, and enterprise integrations for building AI agents.

What is Vertex AI used for?

Vertex AI is Google’s AI development and deployment platform used for orchestration, model management, monitoring, and AI infrastructure workflows.

Are Gemini models good for AI agents?

Yes. Gemini models are commonly used for multimodal AI workflows, enterprise assistants, and retrieval-based AI systems.

Does Google support multimodal AI agents?

Yes. Google’s AI ecosystem supports workflows involving text, images, audio, video, and documents.

What infrastructure is needed for Google AI agents?

Production systems typically require retrieval systems, orchestration layers, backend services, monitoring tools, and scalable cloud infrastructure.

How does Google compare to OpenAI for AI agents?

Google is often stronger in enterprise cloud infrastructure and multimodal workflows, while OpenAI is known for its developer ecosystem and rapid prototyping simplicity.

Can Google AI agents integrate with Workspace?

Yes. AI agents can potentially interact with Gmail, Docs, Sheets, Calendar, and other Workspace services.

Are Google AI platforms suitable for enterprises?

Yes. Google’s infrastructure, governance tooling, and cloud ecosystem make it particularly attractive for enterprise AI deployments.