A practical breakdown of AI API pricing, token costs, infrastructure tradeoffs, and optimization strategies for developers building AI agents and production AI systems.

Best AI Agent APIs & Platforms: A Practical Guide for Building AI Agents in 2026

As AI agents become more sophisticated, API pricing has evolved from a minor development concern into a major infrastructure decision.

Modern AI systems often involve:

- Long-context prompts

- Retrieval pipelines

- Multi-agent workflows

- Tool calling

- Continuous reasoning loops

- Multimodal processing

Each of these increases token consumption and, with it, operational cost.

For startups, enterprises, and independent developers alike, understanding AI API pricing is now essential for building scalable AI agents.

This guide compares the major AI API providers in 2026, including:

- OpenAI

- Anthropic

- Google

- DeepSeek

and explains how pricing impacts real-world AI infrastructure decisions.

Why AI API Pricing Matters More for Agents

Traditional chatbot applications usually involve:

- One user prompt

- One model response

AI agents are very different.

A single agent workflow may include:

- Planning

- Retrieval

- Tool execution

- Additional reasoning

- Multi-step orchestration

- Follow-up actions

This can generate dozens or even hundreds of API calls during a single workflow.

As a result, token usage scales rapidly.
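The scaling effect can be illustrated with a small sketch. It assumes an agent loop that resends its full accumulated context on every call, which is a common pattern but not a universal one. The function name and all token counts are hypothetical.

```python
def workflow_input_tokens(steps: int, base_prompt: int, tokens_added_per_step: int) -> int:
    """Total input tokens billed across an agent loop that resends its full history."""
    total = 0
    context = base_prompt
    for _ in range(steps):
        total += context                   # the whole context is sent as input on every call
        context += tokens_added_per_step   # tool results and model output are appended
    return total


# Hypothetical numbers: a 1,000-token prompt that grows by 500 tokens per step.
single_call = workflow_input_tokens(steps=1, base_prompt=1_000, tokens_added_per_step=500)
agent_run = workflow_input_tokens(steps=20, base_prompt=1_000, tokens_added_per_step=500)
print(single_call, agent_run)
```

Under these assumptions the 20-step run bills far more than 20 times the single call, because every step pays again for everything that came before it.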

Understanding AI API Pricing Models

Most AI APIs charge based on:

- Input tokens

- Output tokens

- Context size

- Image or multimodal processing

- Tool usage

- Realtime inference

What Are Tokens?

Tokens are chunks of text processed by AI models.

As a rough estimate:

- 1,000 tokens ≈ 750 words

Both prompts and responses consume tokens.

Long-context AI agents can process millions of tokens daily in production environments.
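As a rough sketch of how the rule of thumb turns into a budget estimate, the helper below converts word counts to tokens and applies per-million-token prices. The function names and the prices are placeholders for illustration, not any provider's actual rates, and real token counts vary by model and language.

```python
def estimate_tokens(word_count: int, words_per_1k_tokens: int = 750) -> int:
    """Rough token estimate using the 1,000 tokens ≈ 750 words rule of thumb."""
    return round(word_count * 1000 / words_per_1k_tokens)


def request_cost(input_tokens: int, output_tokens: int,
                 input_price_per_m: float, output_price_per_m: float) -> float:
    """Cost of one request, given prices per million input and output tokens."""
    return (input_tokens * input_price_per_m + output_tokens * output_price_per_m) / 1_000_000


prompt_tokens = estimate_tokens(1_500)   # a 1,500-word prompt
# Hypothetical prices: 2.00 per million input tokens, 8.00 per million output tokens.
cost = request_cost(prompt_tokens, 500, input_price_per_m=2.0, output_price_per_m=8.0)
print(prompt_tokens, cost)
```

For exact counts, use the tokenizer that matches the model you are billing against rather than a word-based estimate.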

Major Factors That Affect AI API Costs

| Factor | Cost Impact |
|---|---|
| Context window size | Larger prompts increase spending |
| Output length | Long reasoning chains cost more |
| Tool calling | Additional orchestration overhead |
| Agent loops | Recursive workflows multiply costs |
| Multimodal processing | Images/audio increase pricing |
| Retrieval systems | Additional context injection |
| Concurrent agents | Parallel workloads increase usage |

For production AI systems, infrastructure optimization often matters more than raw model pricing.

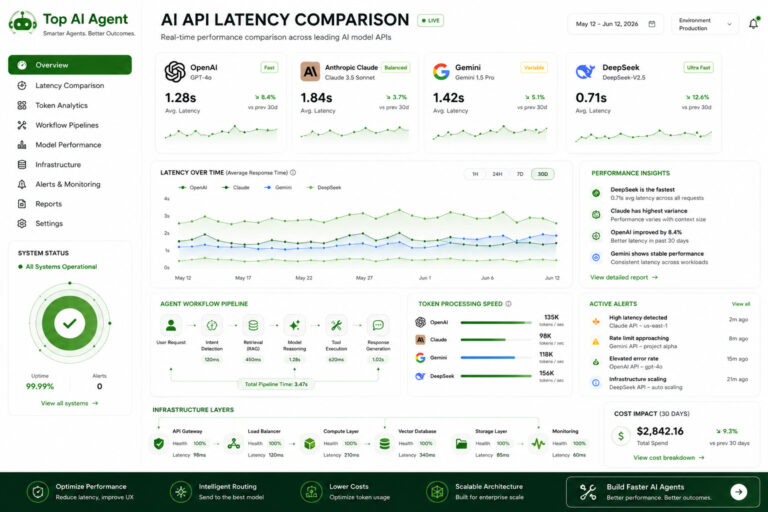

OpenAI API Pricing Overview

OpenAI remains one of the most widely used AI ecosystems.

Its APIs are commonly used for:

- AI agents

- Coding assistants

- Enterprise copilots

- Retrieval systems

- Realtime AI applications

OpenAI Pricing Characteristics

| Area | Pricing Trend |
|---|---|
| Frontier reasoning models | Higher cost |
| Lightweight models | More affordable |
| Multimodal workflows | Variable pricing |
| Realtime APIs | Additional infrastructure costs |

OpenAI Cost Considerations

OpenAI can become expensive in:

- Large-context workflows

- Multi-agent systems

- High-frequency automation

- Long-running enterprise assistants

However, many teams still choose OpenAI because of:

- Mature tooling

- Reliability

- Ecosystem integrations

- Developer support

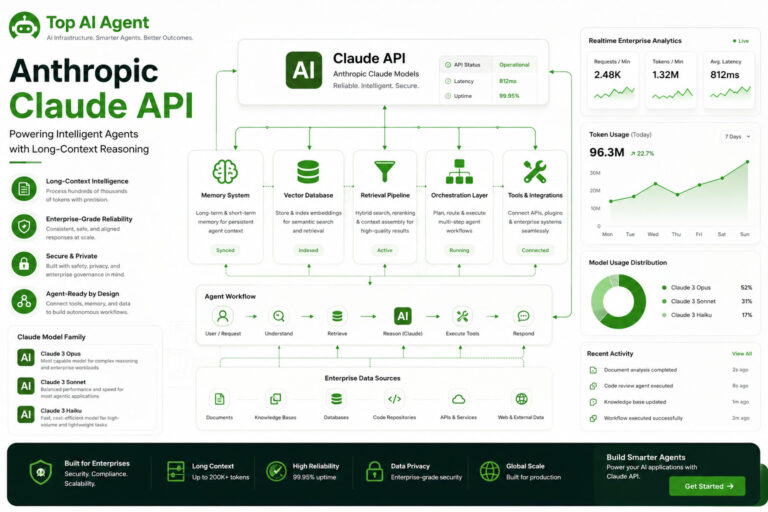

Anthropic Claude API Pricing

Anthropic is widely used for:

- Long-context reasoning

- Enterprise assistants

- Research workflows

- Document-heavy systems

Claude Pricing Characteristics

| Area | Pricing Trend |
|---|---|
| Long-context processing | Higher usage costs |
| Enterprise workflows | Premium positioning |
| Large document analysis | Resource intensive |

Claude pricing is heavily affected by:

- context size

- document length

- retrieval workflows

Because input tokens are billed on every call, long-context agents that resend large documents accumulate cost quickly.

Google Gemini API Pricing

Google positions Gemini and Vertex AI as part of a broader cloud infrastructure ecosystem.

Pricing often depends on:

- Model tier

- Cloud infrastructure usage

- Storage

- Retrieval systems

- Multimodal workflows

Google Pricing Characteristics

| Area | Pricing Trend |
|---|---|
| Enterprise infrastructure | Usage-based scaling |
| Cloud integrations | Additional platform costs |
| Multimodal AI | Variable pricing structure |
| Large deployments | Potential enterprise discounts |

Organizations already using Google Cloud may benefit from tighter infrastructure integration.

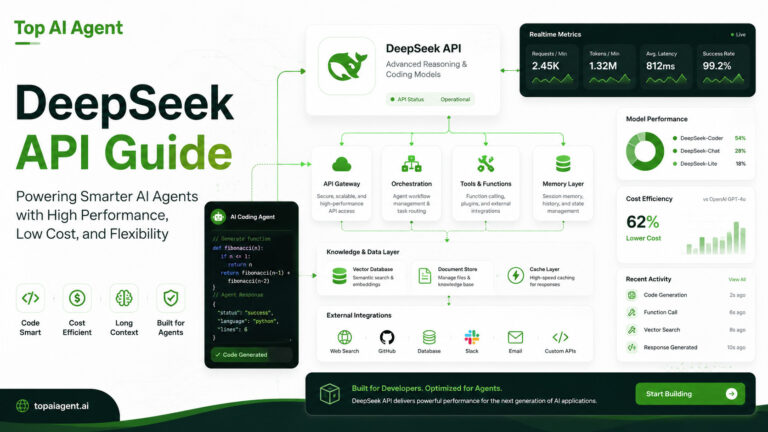

DeepSeek API Pricing

DeepSeek has become increasingly popular because of its lower-cost inference.

Many developers evaluate DeepSeek for:

- Coding workflows

- AI automation

- Experimental agents

- Budget-sensitive deployments

DeepSeek Pricing Characteristics

| Area | Pricing Trend |
|---|---|
| Coding inference | Lower cost |
| General reasoning | Competitive pricing |
| Long workflows | More cost-efficient scaling |

This makes DeepSeek attractive for:

- startups

- independent developers

- experimental AI systems

- self-hosted workflows

AI API Pricing Comparison Table

General Platform Comparison

| Provider | Typical Cost Position | Best Known For | Common Tradeoff |
|---|---|---|---|
| OpenAI | Higher | Ecosystem maturity | Scaling cost |
| Anthropic Claude | Medium to High | Long-context reasoning | Expensive large workflows |
| Google Gemini | Variable | Enterprise infrastructure | Platform complexity |
| DeepSeek | Lower | Coding and affordable inference | Smaller ecosystem |

The Hidden Costs of AI Agents

Many developers underestimate the true cost of AI agents.

API pricing is only one part of the equation.

Production systems also require:

- Vector databases

- Monitoring infrastructure

- Backend orchestration

- Logging

- Queue systems

- Cloud compute

- Storage

Typical Infrastructure Layers

| Infrastructure Layer | Additional Cost Area |
|---|---|
| Vector retrieval | Embedding storage/search |
| Workflow orchestration | Compute overhead |
| Realtime systems | Streaming infrastructure |
| Monitoring | Observability tooling |
| Persistent memory | Database/storage usage |

This is why infrastructure architecture matters as much as model selection.

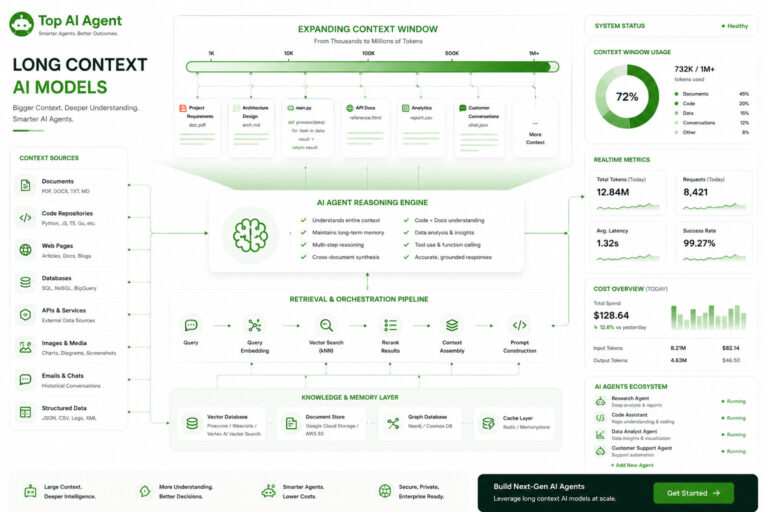

Long Context Models and Cost Scaling

Long-context AI models are powerful but expensive.

Large context windows allow AI agents to:

- Analyze documents

- Process repositories

- Maintain memory

- Perform long-form reasoning

However, larger prompts dramatically increase:

- inference costs

- latency

- infrastructure load

Why Context Size Matters

| Context Usage | Infrastructure Impact |
|---|---|
| Large prompts | Higher token consumption |
| Extended memory | Increased retrieval overhead |
| Persistent conversations | More storage and inference costs |

Most production systems now combine:

- vector retrieval

- context compression

- memory summarization

instead of relying entirely on massive prompts.

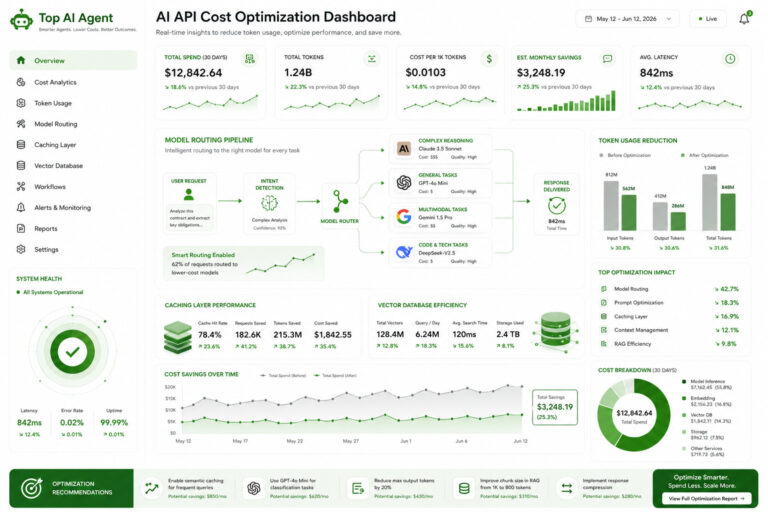

How Companies Reduce AI API Costs

Cost optimization is becoming one of the most important AI engineering disciplines.

Common AI Cost Optimization Strategies

Retrieval-Augmented Generation (RAG)

Instead of sending large knowledge bases directly into prompts, systems retrieve only relevant information dynamically.

This reduces:

- token usage

- prompt size

- inference costs
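A minimal sketch of the retrieval step follows. It assumes a toy word-overlap score as a stand-in for the embedding similarity search a production system would run against a vector database. The knowledge base, function names, and query are all made up for illustration.

```python
def score(query: str, chunk: str) -> int:
    """Count distinct query words that appear in the chunk (stand-in for vector similarity)."""
    query_words = set(query.lower().split())
    chunk_words = set(chunk.lower().split())
    return len(query_words & chunk_words)


def retrieve(query: str, chunks: list[str], top_k: int = 2) -> list[str]:
    """Return the top_k chunks most relevant to the query."""
    ranked = sorted(chunks, key=lambda c: score(query, c), reverse=True)
    return ranked[:top_k]


knowledge_base = [
    "Refunds are processed within five business days.",
    "The API rate limit is 100 requests per minute.",
    "Support is available on weekdays.",
    "Rate limit errors return HTTP status 429.",
]

query = "what is the api rate limit"
context = retrieve(query, knowledge_base, top_k=2)

# Only the retrieved chunks are placed in the prompt, not the whole knowledge base.
prompt = "\n".join(context) + "\n\nQuestion: " + query
print(prompt)
```

The saving comes from the prompt carrying two chunks instead of the entire knowledge base. The gap widens as the knowledge base grows.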

Smaller Routing Models

Many systems use:

- smaller models for orchestration

- larger models for complex reasoning

This hybrid architecture lowers operational spending.
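One way to sketch this routing is a simple heuristic function. The model names, keyword list, and token threshold below are hypothetical. Real routers often use a small classifier model instead of keyword matching.

```python
def choose_model(task: str, context_tokens: int) -> str:
    """Pick a model tier using simple heuristics (illustrative only)."""
    complex_keywords = ("plan", "analyze", "debug", "prove")
    needs_reasoning = any(word in task.lower() for word in complex_keywords)
    if needs_reasoning or context_tokens > 8_000:
        return "large-model"   # hypothetical name for an expensive reasoning model
    return "small-model"       # hypothetical name for a cheap lightweight model


print(choose_model("Extract the date from this email", 300))
print(choose_model("Analyze this stack trace and debug the failure", 2_000))
```

The design choice is to default to the cheaper tier and escalate only when a request shows signs of needing more capability.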

Prompt Compression

Reducing unnecessary context can dramatically lower costs.
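The simplest form of this is trimming conversation history to a token budget, sketched below. It keeps the system prompt and drops the oldest turns first. The token estimate is a crude word-based approximation, and the function names and budget are assumptions for illustration. Summarizing dropped turns instead of discarding them is a common refinement.

```python
def approx_tokens(text: str) -> int:
    """Very rough estimate: about 4 tokens per 3 words (1,000 tokens ≈ 750 words)."""
    return max(1, round(len(text.split()) * 4 / 3))


def trim_history(system: str, messages: list[str], budget: int) -> list[str]:
    """Keep the system prompt plus as many of the newest messages as fit the budget."""
    remaining = budget - approx_tokens(system)
    kept = []
    for message in reversed(messages):   # walk from newest to oldest
        cost = approx_tokens(message)
        if cost > remaining:
            break                        # stop at the first message that does not fit
        kept.append(message)
        remaining -= cost
    return [system] + list(reversed(kept))


system = "You are a helpful assistant."
history = [("first " * 30).strip(), ("second " * 30).strip(), ("third " * 30).strip()]
trimmed = trim_history(system, history, budget=100)
print(len(trimmed))
```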

Caching

Frequently reused prompts and outputs are stored to avoid repeated API calls.
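A minimal exact-match cache might look like the sketch below. The `ResponseCache` class and the `fake_model` function are hypothetical stand-ins. A real deployment would wrap an actual API client and would likely add expiry and a shared store.

```python
import hashlib


class ResponseCache:
    """In-memory cache keyed by a hash of the model name and prompt."""

    def __init__(self):
        self._store: dict[str, str] = {}
        self.hits = 0
        self.misses = 0

    def _key(self, model: str, prompt: str) -> str:
        return hashlib.sha256(f"{model}\n{prompt}".encode("utf-8")).hexdigest()

    def get_or_call(self, model: str, prompt: str, call_model) -> str:
        key = self._key(model, prompt)
        if key in self._store:
            self.hits += 1
            return self._store[key]           # served from cache, no API call
        self.misses += 1
        response = call_model(model, prompt)  # only pay for the first occurrence
        self._store[key] = response
        return response


def fake_model(model: str, prompt: str) -> str:
    """Stand-in for a real API call."""
    return f"[{model}] answer to: {prompt}"


cache = ResponseCache()
first = cache.get_or_call("small-model", "What is a token?", fake_model)
second = cache.get_or_call("small-model", "What is a token?", fake_model)
print(cache.hits, cache.misses)
```

Exact-match caching only helps when identical prompts recur, so it suits FAQ-style or templated requests better than free-form conversation.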

Selective Memory Systems

Agents retain only important long-term information instead of preserving every interaction.
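As a sketch of the idea, the class below keeps a fixed number of memories and evicts the least important one when full. The importance scores are supplied by hand here. In practice they would come from a heuristic or a model, and that scoring step is an assumption this example leaves out.

```python
import heapq
from dataclasses import dataclass, field


@dataclass(order=True)
class Memory:
    importance: float
    text: str = field(compare=False)   # ordering uses importance only


class SelectiveMemory:
    """Bounded memory that retains only the highest-importance items."""

    def __init__(self, capacity: int):
        self.capacity = capacity
        self._heap: list[Memory] = []   # min-heap: least important item on top

    def add(self, text: str, importance: float) -> None:
        item = Memory(importance, text)
        if len(self._heap) < self.capacity:
            heapq.heappush(self._heap, item)
        elif item > self._heap[0]:
            heapq.heapreplace(self._heap, item)   # evict the least important item

    def recall(self) -> list[str]:
        """Return stored memories, most important first."""
        return [m.text for m in sorted(self._heap, reverse=True)]


memory = SelectiveMemory(capacity=2)
memory.add("User prefers metric units", 0.9)
memory.add("User said hello", 0.1)
memory.add("Project deadline is Friday", 0.8)
print(memory.recall())
```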

Cloud vs Self-Hosted Cost Models

Some organizations now evaluate:

- hosted APIs

- self-hosted open-weight models

- hybrid architectures

Cloud AI APIs

Advantages

- Easy scaling

- Managed infrastructure

- Access to frontier models

Disadvantages

- Ongoing token costs

- Vendor lock-in

- Expensive large-scale inference

Self-Hosted AI Systems

Advantages

- Lower long-term inference cost

- Greater control

- Infrastructure customization

Challenges

- GPU infrastructure

- DevOps complexity

- Optimization engineering

- Monitoring overhead

For many enterprises, hybrid infrastructure is becoming increasingly common.
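The hosted versus self-hosted decision can be framed as a break-even calculation, sketched below. All figures are hypothetical placeholders. The fixed cost is meant to cover GPUs and operations staff, and the per-token prices are illustrative rather than any vendor's rates.

```python
def break_even_tokens(fixed_monthly_cost: float,
                      api_price_per_m: float,
                      self_hosted_price_per_m: float) -> float:
    """Monthly token volume at which self-hosting costs the same as a hosted API."""
    saving_per_m = api_price_per_m - self_hosted_price_per_m
    if saving_per_m <= 0:
        raise ValueError("self-hosting never breaks even at these prices")
    return fixed_monthly_cost / saving_per_m * 1_000_000


# Hypothetical: 6,000 per month fixed cost, API at 3.00 per million tokens,
# self-hosted marginal cost of 0.50 per million tokens.
volume = break_even_tokens(6_000, api_price_per_m=3.0, self_hosted_price_per_m=0.5)
print(f"{volume:,.0f} tokens per month")
```

Below the break-even volume the hosted API is cheaper. Above it, self-hosting starts to pay off, provided the fixed-cost estimate is realistic.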

Which AI API Is Most Cost Effective?

The answer depends heavily on the workload.

For Startups

Startups often prioritize:

- low operational cost

- rapid experimentation

- simple integration

DeepSeek and lightweight OpenAI models are commonly evaluated.

For Enterprises

Enterprises typically focus on:

- governance

- reliability

- infrastructure integration

- scalability

Google and Anthropic ecosystems are frequently considered.

For Coding Agents

Developers often evaluate:

- DeepSeek

- OpenAI

- hybrid coding systems

For Long-Context Research Systems

Claude models are commonly used despite higher context costs because of strong document handling.

The Future of AI API Pricing

AI pricing models are evolving rapidly.

The market is increasingly shifting toward:

- model specialization

- infrastructure optimization

- hybrid deployments

- agent-based workflows

- retrieval-centric architectures

Over time, the competitive advantage may shift from “best model” to “most efficient operational stack”.

As AI agents become infrastructure-heavy systems, pricing efficiency will become one of the most important engineering decisions organizations make.

Key Takeaways

- AI agent systems generate significantly higher API costs than traditional chatbots.

- OpenAI, Anthropic, Google, and DeepSeek all have different pricing strengths and tradeoffs.

- Long-context workflows can dramatically increase token consumption.

- Retrieval systems help reduce inference costs and improve efficiency.

- Infrastructure costs extend beyond model APIs alone.

- Cost optimization is becoming a core AI engineering discipline.

- Hybrid multi-model architectures are increasingly common.

- Self-hosted AI systems are becoming more viable for large-scale deployments.

FAQ

Why are AI agents more expensive than chatbots?

AI agents often perform multi-step reasoning, retrieval, tool execution, and orchestration workflows that generate far more API calls and token usage.

Which AI API is the cheapest?

DeepSeek is commonly evaluated for lower-cost inference, especially for coding and automation workflows.

Is OpenAI expensive for AI agents?

OpenAI can become expensive at scale, particularly for long-context workflows and multi-agent systems.

Why do long-context models cost more?

Larger prompts contain more billable input tokens and require more computation and memory per request, which raises both cost and latency.

How can AI API costs be reduced?

Common strategies include retrieval systems, caching, prompt compression, smaller routing models, and selective memory management.

Are self-hosted AI systems cheaper?

They can reduce long-term inference costs but require substantial GPU infrastructure and operational engineering.

What infrastructure costs exist beyond APIs?

Production systems often require vector databases, orchestration systems, monitoring tools, storage, and backend services.

Which AI API is best for enterprise workflows?

That depends on infrastructure strategy, governance requirements, workflow complexity, and deployment scale.